What is Gamma Correction in Images and Video?

Each pixel in an image has a brightness level, called luminance. This value is between 0 and 1, where 0 means complete darkness (black), and 1 means brightest (white). [see CSS: HSL Color]

Cameras and video recorders do not capture luminance linearly: the recorded value is not proportional to the actual light intensity. Display devices (monitor, phone screen, TV) do not reproduce luminance linearly either. So one needs to correct for this, hence the gamma correction function.

The gamma correction function is used to correct an image's luminance. Like this:

output_luminance = gammaCorrectionFunction[input_luminance]

Both the input and the output luminance are values between 0 and 1.

The gamma correction function maps luminance levels to compensate for the non-linear luminance response of display devices (or to match it to the human perceptual bias on brightness).

The “gamma correction function” is defined by:

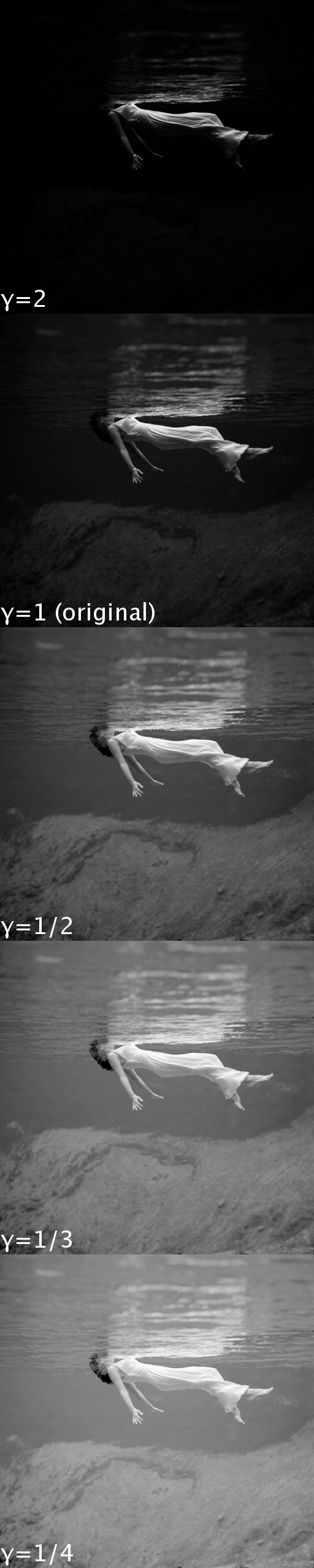

gammaCorrectionFunction[x] := x^γ

where γ is a constant, and “^” is the power operator. The value γ is said to be the gamma.
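The definition above can be sketched in a few lines of Python. This is only an illustration: the function name `gamma_correct` is made up here, and the exponent 2.5 is borrowed from the CRT figure quoted below, not a value every device uses.

```python
def gamma_correct(x: float, gamma: float) -> float:
    """Map a luminance x in [0, 1] to x ** gamma."""
    if not 0.0 <= x <= 1.0:
        raise ValueError("luminance must be between 0 and 1")
    return x ** gamma


# Illustrative exponents only (2.5 is an assumed, CRT-like value).
# gamma < 1 brightens mid-tones, gamma > 1 darkens them;
# 0 and 1 always map to themselves.
for g in (1 / 2.5, 1.0, 2.5):
    samples = [round(gamma_correct(x, g), 3) for x in (0.0, 0.25, 0.5, 1.0)]
    print(f"gamma={g:.2f}: {samples}")
```

Note that black (0) and white (1) are unchanged for any γ. Only the values in between get shifted.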

The luminance generated by a physical device is generally not a linear function of the applied signal. A conventional CRT has a power-law response to voltage: luminance produced at the face of the display is approximately proportional to the applied voltage raised to the 2.5 power. The numerical value of the exponent of this power function is colloquially known as gamma. This nonlinearity must be compensated in order to achieve correct reproduction of luminance.

- 〔Gamma FAQ - Frequently Asked Questions about Gamma By Charles Poynton. At http://www.poynton.com/notes/colour_and_gamma/GammaFAQ.html〕

What is Luminance?

An image is made up of a rectangular grid of pixels. Each pixel has 3 values, for Red, Green, Blue; together they specify the color of the pixel, and all the pixels form the image. Each pixel has a brightness level, called its luminance. A simple estimate is the average of the {red, green, blue} values; more precisely, luminance is a weighted sum in which green counts the most and blue the least.

Note also: another value for a pixel is its transparency level. This is called “alpha” or “alpha channel” in image/video jargon. Transparency is important for images on computers because, for example, you might want an image to be partially transparent so users can see what's behind it.
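As a rough sketch of computing a pixel's brightness from its color values, here are two versions side by side: the plain average, and a weighted sum using the Rec. 709 coefficients. The function names are made up for illustration, and the weighted version assumes the red, green, blue values are linear light (not gamma corrected) in the range 0 to 1.

```python
def average_brightness(r: float, g: float, b: float) -> float:
    """Plain average of the three channels (a crude estimate)."""
    return (r + g + b) / 3.0


def rec709_luminance(r: float, g: float, b: float) -> float:
    """Weighted sum with Rec. 709 coefficients.

    Assumes r, g, b are linear-light values in [0, 1].
    """
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


# Pure green looks much brighter than pure blue to the eye.
# The plain average cannot tell them apart; the weighted sum can.
for name, rgb in (("green", (0.0, 1.0, 0.0)), ("blue", (0.0, 0.0, 1.0))):
    print(name, average_brightness(*rgb), rec709_luminance(*rgb))
```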

The whole gamma complexity has 2 main causes:

- (1) Human perception of the intensity of color or brightness is not linear with respect to the physical intensity of light. That is, we are more sensitive to differences in some brightness ranges than in others.

- (2) The brightness of image display devices, such as CRT, is not a linear function of the voltage input.

These are the main reasons. The real complexity is a lot more than that, involving human understanding of nature (physics of color), psychology of perception, and technology and engineering limitations (camera and display devices, image and video formats). It starts from the moment you create a digital image with a camera or camcorder, which needs to capture light intensities, through a lens, onto some light-sensitive medium (CCD, photographic film), and which eventually needs to be converted to some file format when stored. And when you display it, the software needs to read the data, interpret it appropriately, and eventually convert the bits to voltage in your screen.
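To make cause (2) concrete, here is a toy model of a CRT, assuming the pure power-law response with exponent 2.5 from the Poynton quote above. Real displays only approximate this, and the function names are made up for illustration. Voltage and luminance are both normalized to the range 0 to 1.

```python
CRT_EXPONENT = 2.5  # assumed idealized value, taken from the Poynton quote


def crt_luminance(voltage: float) -> float:
    """Idealized CRT: luminance is voltage raised to the 2.5 power."""
    return voltage ** CRT_EXPONENT


def precompensate(luminance: float) -> float:
    """Gamma correct a wanted luminance into the voltage to apply."""
    return luminance ** (1.0 / CRT_EXPONENT)


wanted = 0.5
# Feeding the wanted luminance straight in comes out far too dark
# (about 0.177 instead of 0.5).
print(crt_luminance(wanted))
# Applying the inverse power first cancels the CRT response
# (about 0.5, up to floating point error).
print(crt_luminance(precompensate(wanted)))
```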

Video and image formats such as {mpeg, NTSC, jpeg, png}, actually store the adjusted light intensities (gamma corrected), and not the unprocessed light intensities.

This design is reasonable. For example, for TV, it is much more economical to do the gamma correction once at the source and send gamma corrected signals than to have every TV receiver do the gamma correction.

Reference

- 〔Gamma FAQ - Frequently Asked Questions about Gamma By Charles Poynton. At http://www.poynton.com/notes/colour_and_gamma/GammaFAQ.html〕

- 〔PNG (Portable Network Graphics) Specification, Version 1.2, section “13. Appendix: Gamma Tutorial”, at http://www.libpng.org/pub/png/spec/1.2/PNG-GammaAppendix.html〕

- Gamma correction

Gamma Error in Picture Scaling

2012-03-12 What made me look into gamma today was reading this article:

- 〔Gamma error in picture scaling By Eric Brasseur. At http://www.ericbrasseur.org/gamma.html〕

The article details a defect that almost all image processing software has. When you scale an image, the algorithm used in the software has a defect in how it handles gamma, so the scaled result is not optimal. The site gives one particular example input image. When you scale that image, the result is very bad. I tested it with ImageMagick, and verified that it also has this defect.
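The kind of error involved can be sketched with the smallest possible "scaling": shrinking two neighboring pixels into one by averaging them. The sketch below assumes 8-bit pixel values encoded with a plain power law of exponent 2.2, a common approximation of sRGB. This is not the exact sRGB curve, not the article's test image, and not any particular program's algorithm, and the function names are made up for illustration.

```python
GAMMA = 2.2  # assumed approximation of the sRGB encoding


def decode(value8: int) -> float:
    """Stored 8-bit value -> linear light intensity in [0, 1]."""
    return (value8 / 255.0) ** GAMMA


def encode(linear: float) -> int:
    """Linear light intensity in [0, 1] -> stored 8-bit value."""
    return round(255.0 * linear ** (1.0 / GAMMA))


def naive_average(a: int, b: int) -> int:
    """Average the stored values directly, ignoring gamma."""
    return (a + b) // 2


def linear_average(a: int, b: int) -> int:
    """Decode to linear light, average there, then encode again."""
    return encode((decode(a) + decode(b)) / 2.0)


# One black pixel next to one white pixel, shrunk to a single pixel.
print(naive_average(0, 255))   # 127
print(linear_average(0, 255))  # 186
```

Under these assumptions the gamma-ignoring average (127) is noticeably darker than the average computed on actual light intensities (186), so fine black-and-white detail turns too dark when scaled down.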