Learning Notes Of Symmetric Space and Differential Geometry Topics

Spent an hour chatting with Richard Palais on voice yesterday. He is teaching me some math about transvections. This page is my learning notes on some differential geometry topics spurred by the chat.

Spent about 6 hours reading Wikipedia and writing this.

Here is the Wikipedia article Symmetric space, with some quotes:

Symmetric Space

In differential geometry, representation theory and harmonic analysis, a symmetric space is a smooth manifold whose group of symmetries contains an "inversion symmetry" about every point. There are two ways to make this precise, via Riemannian geometry or via Lie theory; the Lie theoretic definition is more general and more algebraic.

In Riemannian geometry, the inversions are geodesic symmetries, and these are required to be isometries, leading to the notion of a Riemannian symmetric space…

Here is a brief explanation, assuming the manifold is 2-dimensional. A symmetric space is a smooth surface such that every point on it can serve as the center of a reflection thru a point, and that reflection preserves distances: the shortest distance along the surface between any two points is the same before and after the reflection.

Think of the 2-dimensional euclidean plane. There, we have the concept of reflection thru a point. After the reflection, all distances are preserved. So, it is a symmetric space. The case gets a bit more complex when the surface is not a flat plane. Say, a sphere. A sphere is also a symmetric space. Every point on the sphere can serve as the center of a reflection thru a point. After the operation, every geodesic distance is preserved. That is, for any 2 points P and G, the shortest distance between them on the surface is the same before and after the operation.
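To convince myself, here is a small numeric sketch of the two cases. The plane reflection is x → 2p − x. For the unit sphere I am assuming the standard formula x → 2(x·p)p − x, which is a half-turn about the axis thru p. The function names are my own, not from the chat or from Wikipedia.

```python
import math

def reflect_plane(p, x):
    """Reflection of x thru the point p in the euclidean plane: x -> 2p - x."""
    return (2 * p[0] - x[0], 2 * p[1] - x[1])

def plane_distance(a, b):
    """Ordinary euclidean distance in the plane."""
    return math.hypot(a[0] - b[0], a[1] - b[1])

def unit(v):
    """Scale a nonzero 3D vector to length 1, so it lies on the unit sphere."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def reflect_sphere(p, x):
    """Reflection of x thru p on the unit sphere: x -> 2(x.p)p - x (assumed formula)."""
    d = sum(xi * pi for xi, pi in zip(x, p))
    return tuple(2 * d * pi - xi for xi, pi in zip(x, p))

def sphere_distance(a, b):
    """Great-circle distance between two unit vectors."""
    d = sum(ai * bi for ai, bi in zip(a, b))
    return math.acos(max(-1.0, min(1.0, d)))  # clamp against rounding error

# Plane: distance between a and b is unchanged by reflecting both thru p.
p2, a2, b2 = (1.0, 2.0), (0.0, 0.0), (3.0, 4.0)
plane_before = plane_distance(a2, b2)
plane_after = plane_distance(reflect_plane(p2, a2), reflect_plane(p2, b2))

# Sphere: same check with great-circle distance.
p3, a3, b3 = unit((1, 2, 2)), unit((1, 0, 0)), unit((0, 1, 1))
sphere_before = sphere_distance(a3, b3)
sphere_after = sphere_distance(reflect_sphere(p3, a3), reflect_sphere(p3, b3))
```

In both cases the "before" and "after" values should agree up to floating-point rounding, and the center p itself stays fixed.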

So, what other symmetric spaces could there be for surfaces, besides a plane or sphere? One example I'm given is a flat torus. (See: Flat manifold)
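A rough check of the flat torus case, assuming the usual model of the flat torus as the unit square with opposite edges glued (coordinates taken mod 1). The names below are my own.

```python
import math

def torus_distance(a, b):
    """Shortest distance on the flat torus R^2 / Z^2 (coordinates mod 1)."""
    total = 0.0
    for ai, bi in zip(a, b):
        d = abs(ai - bi) % 1.0
        d = min(d, 1.0 - d)  # going around the other way may be shorter
        total += d * d
    return math.sqrt(total)

def reflect_torus(p, x):
    """Reflection of x thru p on the flat torus: x -> 2p - x, taken mod 1."""
    return tuple((2 * pi - xi) % 1.0 for pi, xi in zip(p, x))

p, a, b = (0.3, 0.8), (0.1, 0.2), (0.9, 0.75)
torus_before = torus_distance(a, b)
torus_after = torus_distance(reflect_torus(p, a), reflect_torus(p, b))
```

The formula is the same as in the plane, only wrapped around, which fits the idea that the flat torus locally looks exactly like the plane.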

Richard particularly spoke about Élie Cartan, the man behind symmetric spaces. Richard said that when he was studying in France, he read Cartan's work and found it beautiful.

Flat manifold

In mathematics, a Riemannian manifold is said to be flat if its curvature is everywhere zero. Intuitively, a flat manifold is one that "locally looks like" Euclidean space in terms of distances and angles, for example, the interior angles of a triangle add up to 180°.

The universal cover of a complete flat manifold is Euclidean space. This can be used to prove the theorem of Bieberbach (1911, 1912) that all compact flat manifolds are finitely covered by tori; the 3-dimensional case was proved earlier by Schoenflies (1891).

Geodesics

Geodesic.

In mathematics, a geodesic (pronounced /ˌdʒiː.ɵˈdiːzɨk, ˌdʒiː.ɵˈdɛsɨk/) is a generalization of the notion of a "straight line" to "curved spaces". In the presence of a metric, geodesics are defined to be (locally) the shortest path between points on the space. In the presence of an affine connection, geodesics are defined to be curves whose tangent vectors remain parallel if they are transported along it.

The term "geodesic" comes from geodesy, the science of measuring the size and shape of Earth; in the original sense, a geodesic was the shortest route between two points on the Earth's surface, namely, a segment of a great circle. The term has been generalized to include measurements in much more general mathematical spaces; for example, in graph theory, one might consider a geodesic between two vertices/nodes of a graph.

Representation theory

Representation theory is a branch of mathematics that studies abstract algebraic structures by representing their elements as linear transformations of vector spaces.[1] In essence, a representation makes an abstract algebraic object more concrete by describing its elements by matrices and the algebraic operations in terms of matrix addition and matrix multiplication. The algebraic objects amenable to such a description include groups, associative algebras and Lie algebras. The most prominent of these (and historically the first) is the representation theory of groups, in which elements of a group are represented by invertible matrices in such a way that the group operation is matrix multiplication.[2]
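A tiny example of my own to make this concrete: take the cyclic group of integers mod 3 under addition, and represent each element k by the 3×3 permutation matrix that shifts the basis vectors by k. The check below confirms that adding in the group matches multiplying the matrices. The choice of group and the names `rho` and `matmul` are mine, just for illustration.

```python
def matmul(A, B):
    """Multiply two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def rho(k, n=3):
    """Permutation matrix sending basis vector e_j to e_((j + k) mod n)."""
    return [[1 if i == (j + k) % n else 0 for j in range(n)] for i in range(n)]

def is_homomorphism(n=3):
    """Check rho(a + b) == rho(a) * rho(b) for all a, b in Z_n."""
    return all(rho((a + b) % n, n) == matmul(rho(a, n), rho(b, n))
               for a in range(n) for b in range(n))

identity = rho(0)              # the group identity maps to the identity matrix
respects_group_law = is_homomorphism()
```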

Hilbert space

The mathematical concept of a Hilbert space, named after David Hilbert, generalizes the notion of Euclidean space. It extends the methods of vector algebra and calculus from the two-dimensional Euclidean plane and three-dimensional space to spaces with any finite or infinite number of dimensions. A Hilbert space is an abstract vector space possessing the structure of an inner product that allows length and angle to be measured. Hilbert spaces are in addition required to be complete, a property that stipulates the existence of enough limits in the space to allow the techniques of calculus to be used.

Complete metric space

What does the “complete” above mean? Here: Complete metric space.

In mathematical analysis, a metric space M is said to be complete (or Cauchy) if every Cauchy sequence of points in M has a limit that is also in M or alternatively if every Cauchy sequence in M converges in M.

Intuitively, a space is complete if there are no "points missing" from it (inside or at the boundary). Thus, a complete metric space is analogous to a closed set. For instance, the set of rational numbers is not complete, because e.g. √2 is "missing" from it, even though one can construct a Cauchy sequence of rational numbers that converges to it. (See the examples below.) It is always possible to "fill all the holes", leading to the completion of a given space, as will be explained below.

Definition

A Hilbert space H is a real or complex inner product space that is also a complete metric space with respect to the distance function induced by the inner product.

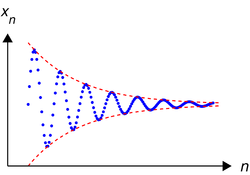

Cauchy sequence

What the faak is Cauchy sequence?

In mathematics, a Cauchy sequence, named after Augustin Cauchy, is a sequence whose elements become arbitrarily close to each other as the sequence progresses. To be more precise, by dropping enough (but still only a finite number of) terms from the start of the sequence, it is possible to make the maximum of the distances from any of the remaining elements to any other such element smaller than any preassigned, necessarily positive, value.
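Here is a sketch of a Cauchy sequence of rational numbers whose limit is not rational. I am using Newton's iteration x → (x + 2/x)/2 starting from 1, which homes in on √2. That choice of sequence is mine; any rational sequence converging to √2 would do. Exact fractions are used so that every term really is rational.

```python
from fractions import Fraction

def newton_sqrt2(steps):
    """Rational Newton iterates x -> (x + 2/x)/2, starting at 1."""
    x = Fraction(1)
    seq = [x]
    for _ in range(steps):
        x = (x + 2 / x) / 2
        seq.append(x)
    return seq

seq = newton_sqrt2(5)
# Gaps between consecutive terms: these shrink quickly.
gaps = [abs(b - a) for a, b in zip(seq, seq[1:])]
# How far each term's square is from 2: never zero, since sqrt(2) is not rational.
errors = [abs(x * x - 2) for x in seq]
```

The first few terms are 1, 3/2, 17/12, 577/408. The terms bunch together as the definition demands, yet no rational number sits at the spot they are closing in on.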

Inner product space

In mathematics, an inner product space is a vector space with the additional structure called an inner product. This additional structure associates each pair of vectors in the space with a scalar quantity known as the inner product of the vectors. Inner products allow the rigorous introduction of intuitive geometrical notions such as the length of a vector or the angle between two vectors. They also provide the means of defining orthogonality between vectors (zero inner product). Inner product spaces generalize Euclidean spaces (in which the inner product is the dot product, also known as the scalar product) to vector spaces of any (possibly infinite) dimension, and are studied in functional analysis.
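For the familiar case where the inner product is the dot product, length, angle and orthogonality fall out like this. A minimal sketch with my own function names:

```python
import math

def inner(u, v):
    """Dot product, the standard inner product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def norm(v):
    """Length of v, defined from the inner product."""
    return math.sqrt(inner(v, v))

def angle(u, v):
    """Angle between nonzero vectors u and v, in radians."""
    c = inner(u, v) / (norm(u) * norm(v))
    return math.acos(max(-1.0, min(1.0, c)))  # clamp against rounding error

length = norm((3.0, 4.0))                      # the 3-4-5 triangle
orthogonal = inner((1.0, 0.0), (0.0, 1.0))     # zero means perpendicular
theta = angle((1.0, 0.0), (1.0, 1.0))          # should be 45 degrees
```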

An inner product space is sometimes also called a pre-Hilbert space, since its completion with respect to the metric, induced by its inner product, is a Hilbert space. That is, if a pre-Hilbert space is complete with respect to the metric arising from its inner product (and norm), then it is called a Hilbert space.

Lie Group

A Lie group is a group which is also a differentiable manifold, with the property that the group operations are compatible with the smooth structure. Lie groups are named after the nineteenth century Norwegian mathematician Sophus Lie, who laid the foundations of the theory of continuous transformation groups. Lie groups represent the best-developed theory of continuous symmetry of mathematical objects and structures, which makes them indispensable tools for many parts of contemporary mathematics, as well as for modern theoretical physics. They provide a natural framework for analysing the continuous symmetries of differential equations (Differential Galois theory), in much the same way as permutation groups are used in Galois theory for analysing the discrete symmetries of algebraic equations. An extension of Galois theory to the case of continuous symmetry groups was one of Lie's principal motivations.
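The simplest example I can think of is the group of rotations of the plane: each rotation is a point on a circle (the angle), and composing rotations just adds angles, which is a smooth operation. A small sketch, with names of my own choosing:

```python
import math

def rot(theta):
    """2x2 rotation matrix for angle theta (radians)."""
    c, s = math.cos(theta), math.sin(theta)
    return ((c, -s), (s, c))

def compose(A, B):
    """Product of two 2x2 matrices."""
    return tuple(
        tuple(sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )

def close(A, B, tol=1e-12):
    """Entrywise comparison up to rounding error."""
    return all(abs(A[i][j] - B[i][j]) <= tol for i in range(2) for j in range(2))

a, b = 0.7, 1.9
adds_angles = close(compose(rot(a), rot(b)), rot(a + b))
has_inverse = close(compose(rot(a), rot(-a)), ((1.0, 0.0), (0.0, 1.0)))
```

So the group law is "add the angles" and the inverse is "negate the angle", both of which vary smoothly with the angle.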

Differential Galois Theory

In mathematics, the antiderivatives of certain elementary functions cannot themselves be expressed as elementary functions. A standard example of such a function is e^(−x^2), whose antiderivative is (up to constants) the error function, familiar from statistics. Other examples include the functions Sin[x]/x and x^x.

It should be realized that the notion of an elementary function is merely a matter of convention. One could choose to add the error function to the list of elementary functions, and with this new list, the antiderivative of e^(−x^2) is elementary. However, no matter how long the list of so called elementary functions, as long as it is finite, there will still be functions on the list whose antiderivatives are not.
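For reference, the "up to constants" part can be spelled out. Using the usual normalization of the error function (the one I know from statistics texts; the quoted article does not write it out here):

```latex
\operatorname{erf}(x) = \frac{2}{\sqrt{\pi}} \int_0^x e^{-t^2}\,dt
\qquad\Longrightarrow\qquad
\int e^{-x^2}\,dx = \frac{\sqrt{\pi}}{2}\,\operatorname{erf}(x) + C
```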

The machinery of differential Galois theory allows one to determine when an elementary function does or does not have an antiderivative that can be expressed as an elementary function. Differential Galois theory is a theory based on the model of Galois theory. Whereas algebraic Galois theory studies extensions of algebraic fields, differential Galois theory studies extensions of differential fields, i.e. fields that are equipped with a derivation, D. Much of the theory of differential Galois theory is parallel to algebraic Galois theory. One difference between the two constructions is that the Galois groups in differential Galois theory tend to be matrix Lie groups, as compared with the finite groups often encountered in algebraic Galois theory. The problem of finding which integrals of elementary functions can be expressed with other elementary functions is analogous to the problem of solutions of polynomial equations by radicals in algebraic Galois theory.

Topological space

Topological spaces are mathematical structures that allow the formal definition of concepts such as convergence, connectedness, and continuity. They appear in virtually every branch of modern mathematics and are a central unifying notion.

A topological space is a set X together with τ, a collection of subsets of X, satisfying the following axioms:

- The empty set and X are in τ.

- The union of any collection of sets in τ is also in τ.

- The intersection of any finite collection of sets in τ is also in τ.
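For a finite set X the three axioms can be checked by brute force. In the sketch below (function name mine), note one shortcut: since τ is finite here, checking unions and intersections of pairs is enough, because the general finite case follows by repeating the pairwise step. This says nothing about infinite spaces.

```python
from itertools import combinations

def is_topology(X, tau):
    """Check the topology axioms for a finite set X and a collection tau of subsets."""
    X = frozenset(X)
    tau = {frozenset(s) for s in tau}
    # Every member of tau must be a subset of X.
    if not all(s <= X for s in tau):
        return False
    # Axiom 1: the empty set and X are in tau.
    if frozenset() not in tau or X not in tau:
        return False
    # Axioms 2 and 3: closed under unions and intersections (pairwise suffices here).
    for a, b in combinations(tau, 2):
        if (a | b) not in tau or (a & b) not in tau:
            return False
    return True

X = {1, 2, 3}
nested = [set(), {1}, {1, 2}, {1, 2, 3}]       # a chain of sets
broken = [set(), {1}, {2}, {1, 2, 3}]          # {1} union {2} is missing
nested_ok = is_topology(X, nested)
broken_ok = is_topology(X, broken)
```

The first collection passes and the second fails, because {1, 2} would have to be in τ.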

Space

This article: Space (mathematics), is quite illuminating.

The article is particularly interesting because it gives a sense of the organization of all these spaces.

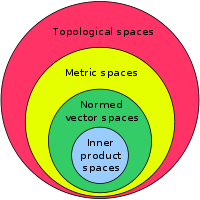

Basically, the most structured is an inner product space (which is a vector space with an inner product defined). The inner product is required, in the modern approach, to give you a definition of angles. Then, you have normed vector spaces, where the concept of length is defined. Every inner product gives you a norm, so an inner product space is always a normed vector space, though not every norm comes from an inner product. (Again, not necessarily natural, but kinda logically the simplest from the modern foundational point of view.) So now, you have angles and length, both defined on top of a vector space over a field. A finite-dimensional real inner product space basically gives you the familiar euclidean space. More generalization with different ways to measure the “distance” between elements gives you metric spaces. And more abstract still, dropping distance and keeping only a notion of open sets, which is enough for convergence, connectedness and continuity, gives you topological spaces.
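One way to see that the inner product level and the norm level are really different: a norm comes from some inner product exactly when it satisfies the parallelogram law, ‖u+v‖² + ‖u−v‖² = 2‖u‖² + 2‖v‖². That criterion is a standard fact I am bringing in from outside the quoted article. The sketch below (names mine) tests it for the euclidean norm and for the "taxicab" norm that sums absolute values.

```python
import math

def norm1(v):
    """Taxicab norm: sum of absolute values."""
    return sum(abs(c) for c in v)

def norm2(v):
    """Euclidean norm, the one induced by the dot product."""
    return math.sqrt(sum(c * c for c in v))

def parallelogram_defect(norm, u, v):
    """|u+v|^2 + |u-v|^2 - 2|u|^2 - 2|v|^2; zero when the law holds for u, v."""
    plus = [a + b for a, b in zip(u, v)]
    minus = [a - b for a, b in zip(u, v)]
    return norm(plus) ** 2 + norm(minus) ** 2 - 2 * norm(u) ** 2 - 2 * norm(v) ** 2

u, v = (1, 0), (0, 1)
euclid_defect = parallelogram_defect(norm2, u, v)   # about 0, up to rounding
taxicab_defect = parallelogram_defect(norm1, u, v)  # nonzero
```

The taxicab norm fails the law on this pair, so it gives length without any inner product behind it, and hence no notion of angle.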

Note that the above loose grouping is just one perspective. Categorization or classification is Taxonomy and Ontology. Basically, there are many perspectives, depending on different needs. So there's not one categorization that can be considered The Correct Way, because a categorization system implicitly defines a goal or philosophy, and the goals, needs, and philosophies are different.

In particular, the above hierarchy of spaces is based on the most popular modern practices of math foundation. That is, you start with sets, then integers, then rational numbers, then with Dedekind cuts you have real numbers, then with binary operators (functions of 2 parameters) you have groups, then rings and fields, and vector spaces. Though, at this point you don't have the familiar angle and distance of euclidean space. So, there comes a fiat definition of a function called inner product, which gives you a definition of angle. Then, length comes from another function called “norm”, conveniently defined on top of the inner product. The definition and generalization of area and volume became measure spaces.

Some items of particular personal interest:

The original space investigated by Euclid is now called "the three-dimensional Euclidean space". Its axiomatization, started by Euclid 23 centuries ago, was finalized in the 20th century by David Hilbert, Alfred Tarski and George Birkhoff. This approach describes the space via undefined primitives (such as "point", "between", "congruent") constrained by a number of axioms. Such a definition "from scratch" is now of little use, since it hides the standing of this space among other spaces. The modern approach defines the three-dimensional Euclidean space more algebraically, via linear spaces and quadratic forms, namely, as an affine space whose difference space is a three-dimensional inner product space.

Also a three-dimensional projective space is now defined non-classically, as the space of all one-dimensional subspaces (that is, straight lines through the origin) of a four-dimensional linear space.