Guy Steele on Parallel Programing. (Get rid of lisp cons) 2011

Tips on Writing Parallel Programs: Don't Iterate, Recurse, Fold, Pipe, Foreach, Get Rid of Linked List

The following are items from Guy Steele's slides.

Things to Avoid

- DO loops.

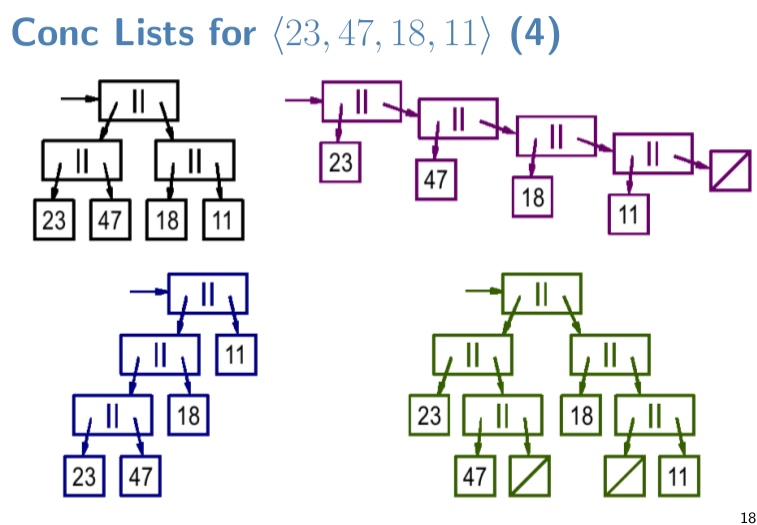

- linear linked list. (lisp's cons)

- Java style iterator.

- even arrays are suspect.

- as soon as you say “first, SUM = 0” you are hosed.

- accumulators are BAD. They encourage sequential dependence and tempt you to use non-associative updates.

- If you say, “process sub-problems in order,” you lose.

- The great tricks of the sequential past WON'T WORK.

- The programing idioms that have become second nature to us as everyday tools for the last 50 years WON'T WORK.
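To make the accumulator point concrete, here is a minimal sketch in Python (not from the slides; the function names are made up for illustration). The first function is the "first, SUM = 0" style, where every step depends on the previous one. The second splits the input in half and combines the two results, which only works because `+` is associative.

```python
def sum_accumulator(xs):
    """Sequential style: each step needs the previous value of total."""
    total = 0                  # "first, SUM = 0"
    for x in xs:               # the DO loop
        total = total + x      # this update depends on the one before it
    return total


def sum_divide_and_conquer(xs):
    """Split in half, solve each half independently, then combine."""
    if len(xs) == 0:
        return 0
    if len(xs) == 1:
        return xs[0]
    mid = len(xs) // 2
    left = sum_divide_and_conquer(xs[:mid])    # does not need the right half
    right = sum_divide_and_conquer(xs[mid:])   # does not need the left half
    return left + right                        # associative, so grouping is free


numbers = list(range(1, 101))
print(sum_accumulator(numbers))          # 5050
print(sum_divide_and_conquer(numbers))   # 5050
```

Both give the same answer on one CPU. The difference is that the two recursive calls in the second version have no dependence on each other, so in principle a runtime could hand them to different processors.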

Conclusion

- A program organized according to linear problem decomposition principles can be really hard to parallelize. (e.g. accumulator, do loop)

- A program organized according to independence and divide-and-conquer principles is easy to run in parallel or sequentially, according to available resources.

- The new strategy has costs and overheads. They will be reduced over time but will not disappear.

- in a world of parallel computers of wildly varying sizes, this is our only hope for program portability in the future.

- Better language design can encourage “independent thinking” that allows parallel computers to run programs effectively.
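The second conclusion, that an independence-based program runs in parallel or sequentially "according to available resources", can be sketched like this. This is only an illustration in Python: `chunked_sum` and `split_into_chunks` are hypothetical names, and threads in a standard CPython build will not actually speed up this arithmetic. The point is the shape of the program: the same code works for 1 worker or many.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(xs, n_chunks):
    """Cut xs into at most n_chunks contiguous, independent pieces."""
    size = max(1, -(-len(xs) // n_chunks))   # ceiling division
    return [xs[i:i + size] for i in range(0, len(xs), size)]


def chunked_sum(xs, workers=1):
    """Sum independent chunks, then combine the partial sums."""
    workers = max(1, workers)
    chunks = split_into_chunks(xs, workers)
    if workers == 1:
        partial = [sum(c) for c in chunks]            # sequential
    else:
        with ThreadPoolExecutor(max_workers=workers) as pool:
            partial = list(pool.map(sum, chunks))     # one task per chunk
    return sum(partial)                               # combine step


numbers = list(range(1, 101))
print(chunked_sum(numbers, workers=1))   # 5050
print(chunked_sum(numbers, workers=4))   # 5050
```

The extra splitting and combining is the "costs and overheads" item in the list above: the single-worker run does a little more work than a plain loop would.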

Elegant Functional Style, Recursion, Fold, Piping, is Bad for Parallelism

I've been doing functional programing since 1993, pretty much ever since I started programing with my first language, Mathematica. Two paradigms I use frequently are sequencing functions (aka chaining functions, piping) and recursion (e.g. fold, nest). In some sense, most of my programs are one single chain of functions. The input is fed to a function, then its output to another, then another, until the last function spits out the desired output. It is extremely elegant, with no state whatsoever, and often I even go the extra mile to avoid using any local temp variables or constants in my functions. But after watching this video, I learned that function chaining is NOT good for parallelism, even though it is considered a very elegant functional paradigm: each function in the chain has to wait for the output of the previous one.

As functional programers, we are typically familiar with all the FP style constructs, and we strive for such elegance. The important thing I learned from this video is that elegance in functional style does not mean the code will be good for parallelism. Similarly, software written in a purely functional language such as Haskell is not necessarily good for parallelism. (But it will be a lot better than procedural langs, of course.) FP and automatic parallelism certainly share some aspects, such as declarative code and not maintaining state, but they are not the same issue.
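A small Python sketch of why a fold, elegant as it is, pins down the evaluation order. `tree_reduce` is a hypothetical helper written for this illustration, not a library function. A left fold always groups as `((a op b) op c) op d`. A tree-shaped reduce groups as `(a op b) op (c op d)`, which has two independent halves, but it gives the same answer only when the operator is associative.

```python
from functools import reduce
import operator


def tree_reduce(op, xs):
    """Combine a non-empty list by pairing up halves, not left to right."""
    if len(xs) == 1:
        return xs[0]
    mid = len(xs) // 2
    return op(tree_reduce(op, xs[:mid]), tree_reduce(op, xs[mid:]))


words = ["a", "b", "c", "d"]
nums = [10, 3, 2, 1]

# Associative operator: both groupings agree.
print(reduce(operator.add, words))       # (("a"+"b")+"c")+"d"  -> abcd
print(tree_reduce(operator.add, words))  # ("a"+"b")+("c"+"d")  -> abcd

# Non-associative operator: the grouping changes the answer.
print(reduce(operator.sub, nums))        # ((10-3)-2)-1  -> 4
print(tree_reduce(operator.sub, nums))   # (10-3)-(2-1)  -> 6
```

So code written as a left fold with a non-associative update cannot simply be regrouped for parallel execution, even though it is purely functional.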

Guy Steele's Talk: How to Think About Parallel Programming: Not!

A fascinating talk by the computer scientist Guy Steele (well known as one of the authors of Scheme Lisp, and of emacs (before Richard Stallman)).

- How to Think about Parallel Programming: Not!

- By Guy Steele.

- http://www.infoq.com/presentations/Thinking-Parallel-Programming

The talk is a bit long, at 70 minutes. For the first 26 minutes he goes thru 2 computer programs written for 1970s machines. It's quite interesting to see how software on punch cards works. Most of us have never seen a punch card. He goes thru it “line by line”, actually “hole by hole”. Watching it gives you a sense of what computers were like in the 1970s.

At 00:27, he starts talking about “automating resource management”, and quickly gets to the main point of his talk: what sort of programing paradigms are good for parallel programing. Here, parallel programing means solving a problem by utilizing multiple CPUs. This is important today, because CPUs don't get much faster anymore; instead, each computer is getting more CPUs (multi-core).

In the remaining 40 minutes of the talk, he steps thru 2 programs that solve a simple problem of splitting a sentence into words. The first program is in typical procedural style, using a do-loop (accumulator). The second program is written in his language Fortress, using functional style. He then summarizes a few key problems with traditional programing patterns, and itemizes a few functional programing patterns that he thinks are critical for programing languages to automate parallel computing.

In summary, as a premise, he believes that programers should not worry about parallelism at all; the programing language should do it automatically. Then, he illustrates that there are a few programing patterns that we must stop using, because if you write your code in such a paradigm, it would be very hard to parallelize the code, either manually or by machine AI.
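Here is a rough Python reconstruction of the idea behind the second word-splitting program. It is not the Fortress code from the talk, and the names `Chunk`, `Segment`, `combine` and `split_words` are chosen for this sketch. Each piece of the string is summarized as either a run with no space in it, or a triple of (partial word on the left, complete words, partial word on the right). Two summaries can be combined associatively, so the string can be cut anywhere, even in the middle of a word.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A piece with no space in it: part of a single word."""
    text: str


@dataclass(frozen=True)
class Segment:
    """A piece that contains at least one space."""
    left: str      # partial word before the first space
    words: tuple   # complete words strictly inside the piece
    right: str     # partial word after the last space


def maybe_word(s):
    """An empty string is not a word."""
    return (s,) if s else ()


def combine(a, b):
    """Associative merge of the summaries of two adjacent pieces."""
    if isinstance(a, Chunk) and isinstance(b, Chunk):
        return Chunk(a.text + b.text)
    if isinstance(a, Chunk):
        return Segment(a.text + b.left, b.words, b.right)
    if isinstance(b, Chunk):
        return Segment(a.left, a.words, a.right + b.text)
    # a.right and b.left meet in the middle and may form one word.
    middle = maybe_word(a.right + b.left)
    return Segment(a.left, a.words + middle + b.words, b.right)


def summarize(s):
    """Divide and conquer: the two halves are independent."""
    if len(s) == 0:
        return Chunk("")
    if len(s) == 1:
        return Segment("", (), "") if s == " " else Chunk(s)
    mid = len(s) // 2
    return combine(summarize(s[:mid]), summarize(s[mid:]))


def split_words(s):
    p = summarize(s)
    if isinstance(p, Chunk):
        return list(maybe_word(p.text))
    return list(maybe_word(p.left) + p.words + maybe_word(p.right))


print(split_words("This is a sample"))   # ['This', 'is', 'a', 'sample']
print(split_words("  hello   world "))   # ['hello', 'world']
```

There is no "current word so far" accumulator walking left to right. Only spaces are treated as separators in this sketch.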

If you are a functional programer and have read FP news in the last couple of years, his talk won't seem very new. However, I find it very interesting, because:

- (1) This is the first time I have seen Guy Steele talk. He talks slightly fast. Very bright guy.

- (2) The detailed discussion of punch card code on 1970s machines is quite an eye-opener for those of us who haven't been there.

- (3) You get to see Fortress code, and its use of fancy Unicode characters.

- (4) Thru the latter half of the talk, you get a concrete sense of some critical “do's and don'ts” in coding paradigms about what makes automated parallel programing possible or impossible.

For much of the 2000s, I did not understand why compilers couldn't just do parallelism automatically. My thought had always been that a piece of code is just a concrete specification of an algorithm and machine hardware is irrelevant. But I realized that any concrete specification of an algorithm is specific to a particular hardware, by nature. [see Why Must Software be Rewritten for Multi-Core Processors?]

Parallel Computing vs Concurrency Problems

Note that parallel programing and concurrency problems are related but not the same thing.

- Parallel computing is about writing code that can use multiple CPUs.

- Concurrency problems are about concurrent computations using the same resource or time (typical issues: race conditions, file locking, two persons editing the same file, doing a backup while editing a file).

Fortress and Unicode

Parallel Programing Exercise

Here is an interesting parallel programing exercise: Code Fun: ASCIIFY String Parallel Algo.

Organizing Functional Code for Parallel Execution (or, foldl and foldr Considered Slightly Harmful)

Here is a paper written by Guy Steele on the same topic, in 2009, but with lots of Lisp and Fortress code.

At the end of the document, he says, in big red letters: “Get rid of cons!”.
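To illustrate what "Get rid of cons" is getting at, here is a small Python sketch, not code from the paper. The class names `Cons`, `Leaf`, `Conc` and the helper functions are assumptions made for this example. A cons list can only be reached one cell at a time from the head, so any traversal is forced to be linear. A balanced tree of concatenations can be split into two halves at the root, and each half processed independently.

```python
from dataclasses import dataclass
from typing import Any, Optional
import operator


@dataclass(frozen=True)
class Cons:
    """Linked-list cell: the rest of the list hides behind tail."""
    head: Any
    tail: Optional["Cons"]   # None marks the end of the list


@dataclass(frozen=True)
class Leaf:
    value: Any


@dataclass(frozen=True)
class Conc:
    """Concatenation of two sub-sequences (each a Leaf or a Conc)."""
    left: Any
    right: Any


def cons_sum(lst):
    """Must walk cell by cell; no way to jump to the middle."""
    total = 0
    while lst is not None:
        total += lst.head
        lst = lst.tail
    return total


def conc_from_list(xs):
    """Build a balanced tree from a non-empty Python list."""
    if len(xs) == 1:
        return Leaf(xs[0])
    mid = len(xs) // 2
    return Conc(conc_from_list(xs[:mid]), conc_from_list(xs[mid:]))


def conc_map_reduce(tree, f, op):
    """Apply f at the leaves and combine with an associative op."""
    if isinstance(tree, Leaf):
        return f(tree.value)
    return op(conc_map_reduce(tree.left, f, op),    # independent of right
              conc_map_reduce(tree.right, f, op))   # independent of left


linked = Cons(1, Cons(2, Cons(3, Cons(4, None))))
tree = conc_from_list([1, 2, 3, 4])

print(cons_sum(linked))                                       # 10
print(conc_map_reduce(tree, lambda x: x, operator.add))       # 10
print(conc_map_reduce(tree, lambda x: x * x, operator.add))   # 30
```

The two recursive calls in `conc_map_reduce` share nothing, which is the property a parallel runtime needs. The cons version offers no such split point.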

Lisp Problems

- Concepts and Confusions of Prefix, Infix, Postfix and Lisp Notations (2006)

- Fundamental Problems of Lisp, Syntax Irregularity (2008)

- Fundamental Problems of LISP, the Cons Cell (2008)

- Famous Programers on How Common Lisp Sucks (2012)

- Guy Steele on Parallel Programing. (Get rid of lisp cons) 2011

- LISP Infix Syntax Survey (2013)

- comp lang: LISP Syntax Problem of Chaining Functions

Great articles on programing languages

- History of Object Oriented Programing, by Casey Muratori (2025)

- Guy Steele on Parallel Programing. (Get rid of lisp cons) 2011

- Rust in Perspective. Programing Language History. By Linus Walleij (2022)

- A Brief History of Software Engineering, by Niklaus Wirth (2008)

- Python vs OCaml (2014)

- What Happens When You Replace C by Go in Linux (2024)

- C Is Not a Low-level Language, by David Chisnall 2018