Math Models of 3D Input Control

Having worked with many 3D applications over the past years, I've become interested in how all the different needs for 3D viewing and navigation can be based on a simple mathematical model.

Examples of Different 3D Navigation Needs

Here are examples of different needs for 3D control.

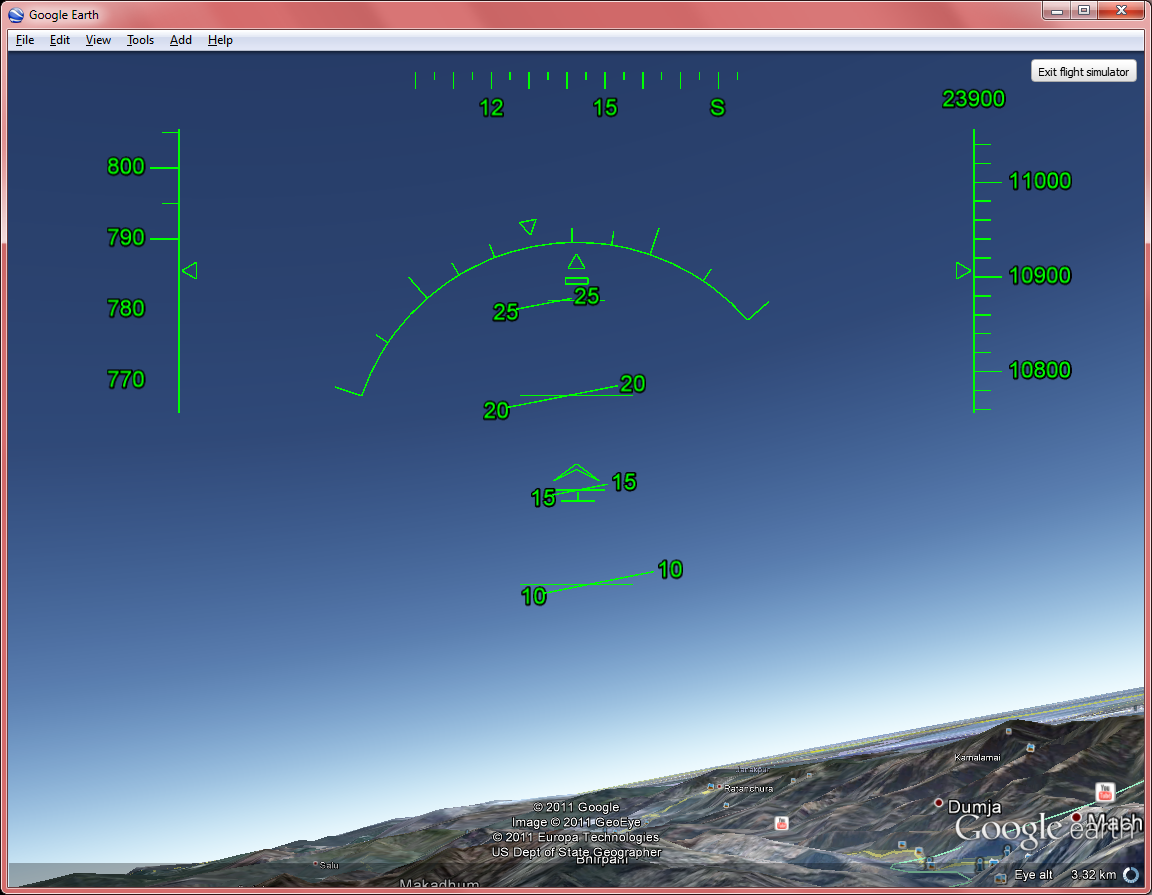

- In flight simulation, you need to control {yaw, pitch, roll}, and forward speed.

- In 3D modeling software, you want to focus on your object and be able to rotate it or zoom in/out. Example: viewing a car from every side (360°).

- In panorama applications (360° photos), you want to rotate yourself and also be able to zoom in/out. (Used for touring an interesting place: a building, a cliff, a bridge, etc.)

- In 3D games, you walk a character. This means moving forward/backward and turning (self-rotation), but also moving left/right (side-step/strafe). Also, there's usually a view mode in which your input device controls the orientation of your head, similar to panorama applications.

- In modern map applications, such as Google Earth, you need to move the map, view buildings from different angles, and also navigate as if flying a plane.

- In more complex virtual world software, such as Second Life, you need diverse controls over all aspects of the 3D world, including all of the above.

Mathematically, all of the above can be considered as changing the position and orientation of a camera.

- Panorama view = Rotate camera on its center.

- Zoom = Move the camera along the line-of-sight axis. (move closer to or further from the object)

- Pan = Move the camera in a plane that's perpendicular to the line of sight while keeping the camera's orientation fixed.

- Tilting an object = Move the camera in a circle around the object while keeping it pointed at the object.

- Rotation of an object = Move the camera on a sphere around the object while keeping it pointed at the object.

- Walk-thru = Change the position and/or orientation, while the camera position is constrained to move in a plane parallel to the ground.

- Fly-thru = Change the position and/or orientation of the camera freely in 3D space.

- Yaw = Rotate the camera on its vertical axis. (turn left/right)

- Pitch = Rotate the camera on its horizontal axis. (tilt up/down)

- Roll = Rotate the camera on the line-of-sight axis. (For example, turn the camera sideways or upside down)
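As a rough illustration of the list above, here is a minimal Python sketch of a camera whose zoom and pan are just position changes along its own axes. The `Camera` class, its field names, and the helper functions are hypothetical, made up for this example. The sketch assumes the orientation is stored as three unit vectors and that z is the vertical axis.

```python
from dataclasses import dataclass
from typing import Tuple

Vec = Tuple[float, float, float]


def add(a: Vec, b: Vec) -> Vec:
    """Component-wise sum of two 3D vectors."""
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def scale(a: Vec, s: float) -> Vec:
    """Multiply a 3D vector by a scalar."""
    return (a[0] * s, a[1] * s, a[2] * s)


@dataclass
class Camera:
    """Hypothetical camera: a position plus an orientation given as 3 unit vectors."""
    position: Vec
    right: Vec    # points to the camera's right
    up: Vec       # points to the camera's up
    forward: Vec  # the line of sight

    def zoom(self, distance: float) -> None:
        """Move along the line of sight. Orientation is unchanged."""
        self.position = add(self.position, scale(self.forward, distance))

    def pan(self, dx: float, dy: float) -> None:
        """Move in the plane perpendicular to the line of sight. Orientation is unchanged."""
        offset = add(scale(self.right, dx), scale(self.up, dy))
        self.position = add(self.position, offset)


# Usage: a camera at the origin looking along +y, with z as the vertical axis.
cam = Camera(position=(0.0, 0.0, 0.0),
             right=(1.0, 0.0, 0.0), up=(0.0, 0.0, 1.0), forward=(0.0, 1.0, 0.0))
cam.zoom(2.0)      # 2 units closer to whatever is in front
cam.pan(1.0, 3.0)  # 1 unit right, 3 units up
print(cam.position)
```

Yaw, pitch, and roll would rotate the three orientation vectors instead of changing the position.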

Examples of Apps with Different Needs of 3D Control

Models for Simple Rotation of an Object with Mouse

Different software has different camera control models. Simple math surface plotting software typically lets you just rotate the object, usually by dragging your mouse over it. How dragging changes the object's orientation in fact differs among applications.

Control by Mapping Drag Vector to X and Y Parameters

Here's the most popular method of mapping mouse drag to object rotation.

When you drag a mouse, you start at the point on the screen where you press the button. Call this point P, and let Q be your current mouse position on the screen. The two points P and Q form a vector from P to Q, that is, a direction and a distance. Write this 2D vector as “(a,b)”. The first component, “a”, is mapped to rotation about the vertical axis (z-axis) of the 3D model. The second component, “b”, is mapped to rotation about one of the horizontal axes, the x-axis or the y-axis, depending on how your axes are oriented.

This is the most intuitive way of mapping mouse drag to 3D rotation. It is important to note that where you start the drag does not matter. As long as the drag distance and direction are the same, the resulting rotation is the same.
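The mapping can be sketched in Python as follows. This is only an illustration under assumptions, not code from any of the apps listed below: the function names and the `DEGREES_PER_PIXEL` sensitivity are made up, the horizontal axis is taken to be the x-axis, and the sign conventions are arbitrary.

```python
import math

DEGREES_PER_PIXEL = 0.5  # hypothetical sensitivity, not taken from any real app


def drag_to_angles(p, q, degrees_per_pixel=DEGREES_PER_PIXEL):
    """Map a drag from screen point p to screen point q to (yaw, pitch) in radians.

    Only the difference q - p is used, so the start position does not matter.
    """
    a = q[0] - p[0]
    b = q[1] - p[1]
    yaw = math.radians(a * degrees_per_pixel)    # rotation about the vertical z-axis
    pitch = math.radians(b * degrees_per_pixel)  # rotation about the horizontal x-axis
    return yaw, pitch


def rotate_z(v, angle):
    """Rotate point v about the z-axis."""
    x, y, z = v
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)


def rotate_x(v, angle):
    """Rotate point v about the x-axis."""
    x, y, z = v
    c, s = math.cos(angle), math.sin(angle)
    return (x, c * y - s * z, s * y + c * z)


def rotate_model_point(v, p, q):
    """Apply the rotation for the drag p -> q to one point of the model."""
    yaw, pitch = drag_to_angles(p, q)
    return rotate_x(rotate_z(v, yaw), pitch)


# Two drags with the same direction and distance but different start points
# give the same angles.
print(drag_to_angles((10, 10), (110, 40)) == drag_to_angles((300, 200), (400, 230)))
```

A real application would accumulate these angles over the course of a drag and apply them to every vertex, or to the model's transformation matrix.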

Apps that use this model of drag for rotation include:

- K3DSurf http://k3dsurf.sourceforge.net/

- JavaView http://www.javaview.de/

- LiveGraphics3D http://www.vis.uni-stuttgart.de/~kraus/LiveGraphics3D/

- NuCalc http://www.nucalc.com/

Control by Also Considering Start Drag Position

Another way I've seen takes the distance and direction of the drag as a vector, but also takes the start position of the drag into account. One example that does this is 3D-Xplormath (http://3d-xplormath.org/).

For a Java applet example of this method of mapping mouse drag to 3D model rotation, see: http://3d-xplormath.org/j/applets/en/vmm-surface-parametric-Cyclide.html

I find this way not intuitive. It has the advantage that you can get to the orientation you want with fewer operations, and the disadvantage that you must be more careful about where you start the drag.
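One well-known technique in which the start position matters is the virtual trackball (often called arcball). I do not know whether 3D-Xplormath uses exactly this technique, so take the Python sketch below as an illustration of the general idea only. The function names are made up. Screen coordinates are assumed to be centered and normalized so that the virtual sphere has radius 1, with x to the right, y up, and z toward the viewer.

```python
import math


def project_to_sphere(x, y):
    """Lift a screen point onto a unit sphere centered on the screen.

    Points outside the sphere's outline are snapped to its edge (z = 0).
    """
    d2 = x * x + y * y
    if d2 <= 1.0:
        return (x, y, math.sqrt(1.0 - d2))
    d = math.sqrt(d2)
    return (x / d, y / d, 0.0)


def arcball_rotation(p, q):
    """Return (axis, angle) of the rotation that carries the sphere point under p
    to the sphere point under q. The axis is a unit vector, the angle is in radians.
    """
    a = project_to_sphere(*p)
    b = project_to_sphere(*q)
    cross = (a[1] * b[2] - a[2] * b[1],
             a[2] * b[0] - a[0] * b[2],
             a[0] * b[1] - a[1] * b[0])
    dot = a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
    norm = math.sqrt(sum(c * c for c in cross))
    if norm == 0.0:
        return (0.0, 0.0, 1.0), 0.0  # no movement: any axis, zero angle
    axis = tuple(c / norm for c in cross)
    return axis, math.atan2(norm, dot)


# The same drag vector (0.5, 0) from two different start points.
print(arcball_rotation((0.0, 0.0), (0.5, 0.0)))  # starts at the screen center
print(arcball_rotation((0.0, 0.8), (0.5, 0.8)))  # starts near the top edge
```

Starting at the center, the drag turns the object about the vertical screen axis only. Starting near the edge, the same drag also rolls the object about the line of sight, which is why you have to watch where you begin.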

Flight Input Controls

Flight simulators, or apps that involve flying or walk-thru, have rather simple input control needs: just yaw, pitch, roll, and an input control for thrust/speed.

(Yaw is like turning left/right when driving, pitch is tilting up and down, and roll is tilting your wings. So, taking the z-axis as vertical and the y-axis as the direction of flight, yaw = rotation about the z-axis, pitch = rotation about the x-axis, roll = rotation about the y-axis.)
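To make the axis assignment concrete, here is a small Python sketch of how yaw, pitch, and speed could drive a flying camera. It assumes z is up and that the camera looks along +y when yaw and pitch are both zero, which matches yaw = z-axis, pitch = x-axis, roll = y-axis. The function names and the simple time-step update are my own, for illustration only.

```python
import math


def forward_vector(yaw, pitch):
    """Unit line-of-sight vector for the given yaw and pitch (radians).

    Positive yaw turns left, positive pitch tilts up. Roll is a rotation
    about this vector, so it does not change the direction of flight.
    """
    return (-math.sin(yaw) * math.cos(pitch),
            math.cos(yaw) * math.cos(pitch),
            math.sin(pitch))


def fly_step(position, yaw, pitch, speed, dt):
    """Advance the position along the line of sight for a time step dt."""
    f = forward_vector(yaw, pitch)
    return tuple(p + speed * dt * c for p, c in zip(position, f))


# Usage: level flight along +y, then a turn to the left by 90 degrees.
pos = (0.0, 0.0, 100.0)
pos = fly_step(pos, yaw=0.0, pitch=0.0, speed=50.0, dt=1.0)
pos = fly_step(pos, yaw=math.pi / 2, pitch=0.0, speed=50.0, dt=1.0)
print(pos)
```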

For helicopters, besides yaw, pitch, and roll, you can also move up/down.

2D Walk-thru

In many games, the player walks an avatar in 3D space. In this case, you don't have pitch and roll as in flight. But you have forward, backward, move left, and move right. Typically you also have turn left and turn right.

Mathematically, you have yaw (turning left/right, which changes orientation) and position change along the x and y axes.
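A walk-thru step can be sketched like this in Python. The function `walk_step` and its parameters are hypothetical. The sketch assumes the ground is the x-y plane and that the avatar faces +y at yaw 0, with positive yaw meaning a turn to the left.

```python
import math


def walk_step(x, y, yaw, forward=0.0, strafe=0.0, turn=0.0):
    """Advance an avatar on the ground plane and return the new (x, y, yaw).

    forward: distance along the facing direction (negative = backward)
    strafe:  distance to the avatar's right (negative = left)
    turn:    change of yaw in radians (positive = turn left)
    """
    yaw += turn
    # Facing direction on the ground plane.
    fx, fy = -math.sin(yaw), math.cos(yaw)
    # Direction to the avatar's right: the facing direction turned by -90 degrees.
    rx, ry = fy, -fx
    x += forward * fx + strafe * rx
    y += forward * fy + strafe * ry
    return x, y, yaw


# Usage: walk 2 units forward while side-stepping 1 unit to the right.
print(walk_step(0.0, 0.0, 0.0, forward=2.0, strafe=1.0))
```

Only three numbers change here (x, y, yaw), which is why I call it 2D walk-thru.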

Such games also let you switch to a camera mode. That means your input device corresponds to moving your head around to look at your surroundings. Mathematically, this is yaw, pitch, roll.

Typically, there are 2 modes for how your input device relates to what you see on the screen: first person view and third person view. In first person view, the camera sits at your avatar's eyes, so the screen shows what the avatar sees. In third person view, the camera is positioned slightly behind and above your avatar and oriented to face forward. The effect is that you can see yourself walking as you control the avatar.
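The third person camera placement can be written down directly. In this Python sketch the function name and the `back` and `height` offsets are made-up values for illustration. It assumes z is up and the avatar faces +y at yaw 0.

```python
import math


def third_person_camera(avatar_pos, avatar_yaw, back=4.0, height=2.0):
    """Return (camera_position, camera_yaw) for a simple third person view.

    The camera sits `back` units behind the avatar and `height` units above it,
    and faces the same way as the avatar.
    """
    ax, ay, az = avatar_pos
    # Facing direction of the avatar on the ground plane.
    fx, fy = -math.sin(avatar_yaw), math.cos(avatar_yaw)
    camera_pos = (ax - back * fx, ay - back * fy, az + height)
    return camera_pos, avatar_yaw


# Usage: avatar at the origin facing +y.
print(third_person_camera((0.0, 0.0, 0.0), 0.0))
```

First person view is the special case with `back` at 0 and `height` at the avatar's eye level.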

When switching between first person view and third person view, the way your keyboard or mouse inputs map to avatar control typically changes, or can be set differently.

3D Modelers, Virtual Reality, and Others

3D modelers in fact differ depending on their use: for illustration, for animation (such as in movies), or for engineering, such as architecture and prototyping cars or other devices. Their features and modeling methods differ. Likewise, their needs for camera navigation also differ.

See also: List of 3D Modeling Software.

3D modeling software typically has fly-thru and walk-thru features, as well as more flexible ways to look at an object from different angles.

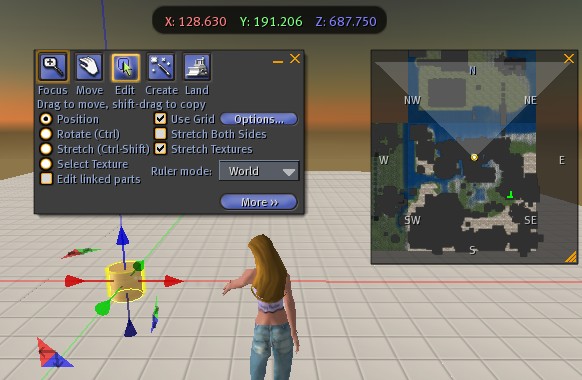

Another example that needs 3D input control in many aspects is the virtual world Second Life.

Second Life is pretty much like a 3D game, where you can walk or fly in first person or third person view. But it also provides an environment in which people can build houses and other things in-world, so in a sense it is also a modeling application. So, it needs all modes of input control: fly-thru, walk-thru, and flexible ways of viewing objects that let users easily change the camera angle, zoom in/out, rotate the camera around an object, and pan the screen.

General Model For 3D Input Control

One way to cover all these different needs is just to consider a camera, its orientation, and its position.

(In addition, you typically also want the concept of a focus point. The camera can revolve around the focus point, for viewing an object from different angles or rotating it. The camera can also pan, which is moving the camera in a plane perpendicular to the line from the camera to the focus point.)

So, to design a good UI for all the 3D needs, one must have a way to easily change the camera's orientation and its position, simultaneously and in real time.

For camera orientation, there are 3 elements: yaw, pitch, roll. For position, there are the x, y, z axes. These 6 parameters serve as the basis in which all other changes can be specified.
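As an example of expressing another control in terms of these parameters, here is a Python sketch of revolving the camera around a focus point. The function names and the azimuth/elevation parametrization are my own choices for illustration. The sketch assumes z is up and that the camera looks along +y at zero yaw and pitch.

```python
import math


def orbit_camera(focus, distance, azimuth, elevation):
    """Camera position on a sphere of radius `distance` around `focus`.

    azimuth swings the camera around the vertical axis, elevation raises it.
    Angles are in radians. Changing `distance` alone gives zoom.
    """
    fx, fy, fz = focus
    x = fx + distance * math.cos(elevation) * math.sin(azimuth)
    y = fy - distance * math.cos(elevation) * math.cos(azimuth)
    z = fz + distance * math.sin(elevation)
    return (x, y, z)


def look_at_angles(position, focus):
    """Yaw and pitch (radians) that point a camera at `position` toward `focus`."""
    dx, dy, dz = (f - p for f, p in zip(focus, position))
    yaw = math.atan2(-dx, dy)
    pitch = math.atan2(dz, math.hypot(dx, dy))
    return yaw, pitch


# Usage: view an object at the origin from 5 units away, a bit to the side and above.
pos = orbit_camera((0.0, 0.0, 0.0), 5.0, azimuth=0.3, elevation=0.5)
print(pos, look_at_angles(pos, (0.0, 0.0, 0.0)))
```

So "rotating the object" becomes a coordinated change of x, y, z, yaw, and pitch, driven by just two angles and a distance.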

Characteristics of Input Devices

Simultaneous Change Of Inputs

A mouse provides the change of a pointer in a plane. The same goes for a trackball. A plane can be described by 2 coordinates, x and y. There are 2 particularly interesting aspects of the mouse. One of them is that the 2 coordinates can change simultaneously. That is, you don't just change x and y one after the other. When you move the mouse, both of them change.

Continuous Variable Rate of Change

The other interesting aspect of the mouse is that the rate of change is variable in a controlled way. That is, you can naturally and precisely control the speed of the pointer in real time.

These 2 aspects are important for input. Other similar pointing devices, such as the pointing stick on IBM ThinkPad keyboards, do not provide variable speed input in a physical way. (That is, the devices themselves do not, but there are ways to add it thru software, such as by measuring the degree of pressure on the pointing stick.)
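A software mapping from pressure to speed could look like the Python sketch below. This is not how any particular driver works. The function name, the dead zone, the maximum speed, and the power curve are all assumptions chosen for illustration.

```python
import math


def stick_to_velocity(force, dead_zone=0.05, max_speed=800.0, exponent=2.0):
    """Map a normalized stick force in [-1, 1] to a pointer speed in pixels/second.

    Forces inside the dead zone give no movement. Beyond it, the speed grows
    smoothly with the force, so light pressure is slow and precise while
    hard pressure is fast.
    """
    magnitude = min(abs(force), 1.0)
    if magnitude <= dead_zone:
        return 0.0
    # Rescale so that the speed starts from 0 at the edge of the dead zone.
    t = (magnitude - dead_zone) / (1.0 - dead_zone)
    return math.copysign(max_speed * t ** exponent, force)


# Usage: light, medium, and full pressure to the right, then full pressure to the left.
for f in (0.02, 0.3, 1.0, -1.0):
    print(f, stick_to_velocity(f))
```

The same kind of mapping would be applied separately to each axis of the device.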

Also consider the touchpad and the touchscreen [see Microsoft Surface Pro 4, 2015]. Each has different controllability characteristics. Both provide a controlled variable rate of change, but the quality is different. For example, on a touchscreen, constantly dragging your finger across the surface quickly gets tiring and rubs the finger too much.

Also note that a touchpad or touchscreen provides another characteristic not found in a mouse: instantaneous jump to arbitrary coordinates in a controlled way. However, compared to a trackball, it also has a disadvantage: for long movements the finger needs to be repositioned, same as with a mouse.

…

There are many other input devices, each with its own characteristics: drawing tablet, jog/shuttle wheel (used in synth keyboards), rotating knob (typically for volume control), and the wheel in larger form as a driving wheel.

3D Input Devices

3D input devices for modeling and gaming need to map to yaw, pitch, roll, and the 3 axes x, y, z. So, ideally, the device should allow humans to change all 6 parameters simultaneously, in a natural way, and directly for each parameter. For mouse, trackball, and touchpad, that's only 2. Without going to gloves or some type of motion detection device, the best we can hope for in a table-top device is probably simultaneous change of 3 parameters. For example, SpaceNavigator does that. In fact, given the limits of human anatomy and brain ability, the maximum number of parameters a person can intuitively change at once is probably just 3, in general. For example, suppose you could change a camera's orientation and position just by thinking about it, and imagine you are flying such a camera in space, in a game that requires you to go thru a particular tube with a particular sequence of orientation changes. You would probably fail miserably, even with years of training.

Ok, so if we limit the change of parameters to just 3, we can now think about other important characteristics. The most important one is probably the continuous variable rate of change.

It is hard to imagine a design for a table-top device that provides continuous variable rate of change in 3 parameters. Sliders and rotary knobs provide 1. Trackball and mouse provide 2. The SpaceNavigator 3D controller does not physically provide continuous variable rate of change for any parameter. (It does so only thru software.)

Given that we are limited to 3 parameters, and to just 2 parameters for continuous variable rate of change, an optimal input device for 3D apps probably needs to combine different types of hand control (for example, joystick, wheel, ball), provide buttons for changing modes, and/or use software mapping for the continuous variable rate of change. For example, modern joysticks modeled after those in jets are quite versatile. Typically one provides a main stick with a lot of buttons (as triggers) and knobs, and sometimes another small joystick for the thumb. (Note: modern joysticks also measure displacement in a continuous way. This is called an analog stick.)
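The idea of mode buttons can be sketched in Python: a 2-parameter device, such as a trackball, drives a different pair of the 6 camera parameters depending on which mode is active. The mode names and the pairing of parameters below are arbitrary choices for illustration, not a recommendation from any product.

```python
# Hypothetical modes: each one routes the device's 2 axes to 2 of the 6 parameters.
MODES = {
    "look": ("yaw", "pitch"),
    "move": ("x", "y"),
    "lift": ("roll", "z"),
}


def apply_input(pose, mode, du, dv):
    """Return a new pose with the 2 parameters selected by `mode` changed by du and dv.

    `pose` is a dict with the keys x, y, z, yaw, pitch, roll. It is not modified.
    """
    first, second = MODES[mode]
    new_pose = dict(pose)
    new_pose[first] += du
    new_pose[second] += dv
    return new_pose


# Usage: the same device movement means different things in different modes.
pose = dict.fromkeys(("x", "y", "z", "yaw", "pitch", "roll"), 0.0)
pose = apply_input(pose, "look", 0.1, -0.2)  # turn the head
pose = apply_input(pose, "move", 1.0, 2.0)   # slide on the ground plane
print(pose)
```

The cost of this design is that only 2 parameters change at any moment, which is the trade-off discussed above.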

[see Gamepads for Computer]

Among devices with a single element, the trackball is probably the most versatile input device for continuous variable rate of change.

So, I think an ideal device design for 3D apps is a flight-simulator-type joystick, possibly in combination with a built-in trackball.

SpaceNavigator 3D Mouse