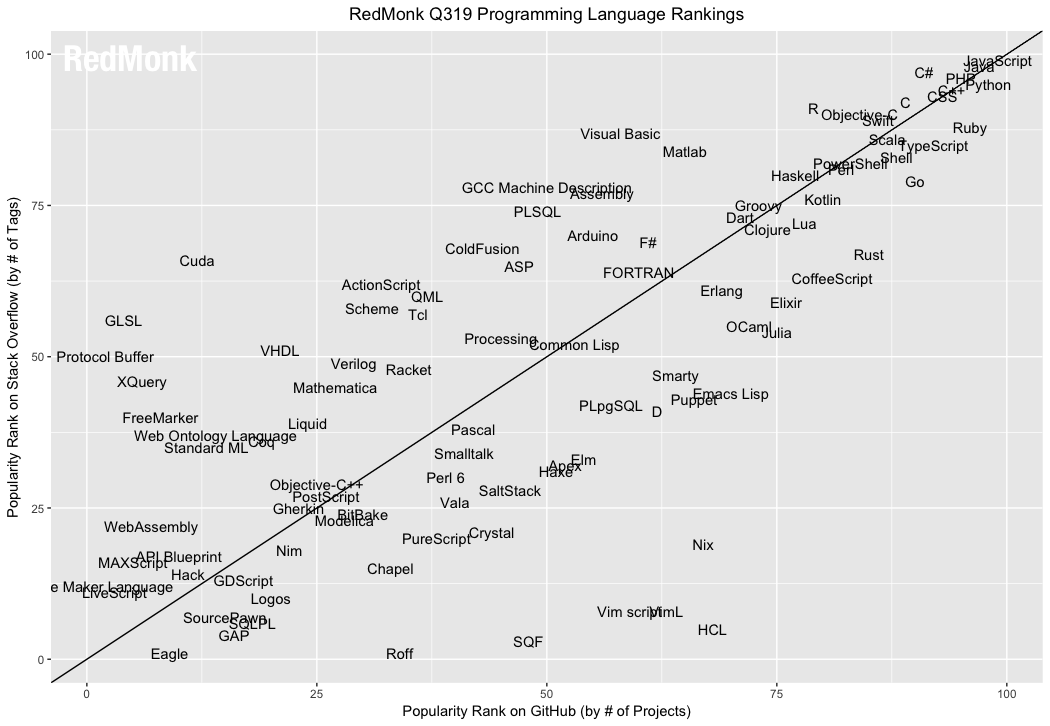

Proliferation of Programing Languages

There is a proliferation of computer languages today like never before. In this page, i list some of them.

In the following, i try to list some of the languages that were created after 2000, or became very active after 2000.

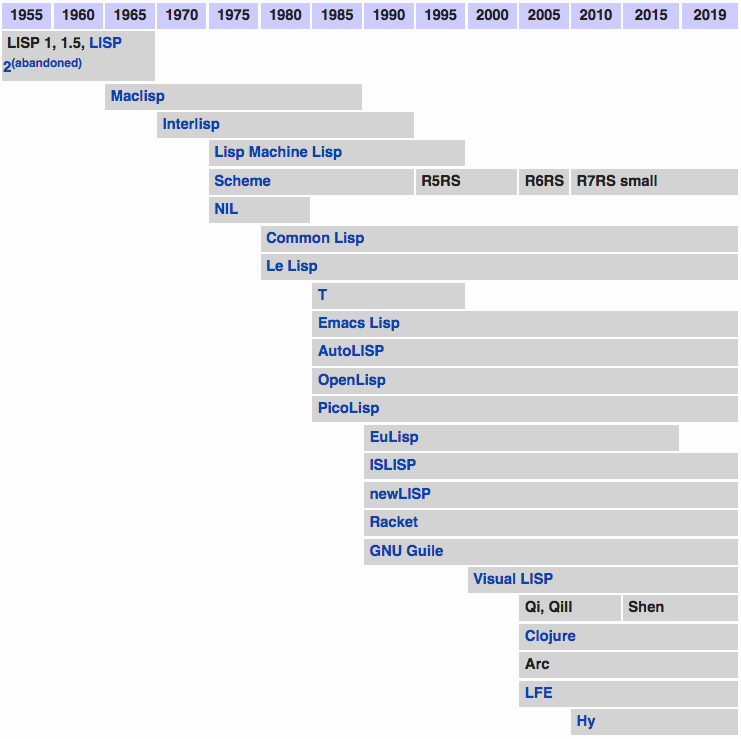

Lisp History Timeline

Mathematica (1988)

- Mathematica, by Stephen Wolfram.

- Originally, a computer algebra system, now a generic language for technical computation.

- For example, image processing, sound/signal analysis, artificial intelligence research, etc.

- Used mostly in science and engineering community.

newLISP (1991)

newLISP, 1991, by Lutz Mueller. Lisp scripting style. Verdant community of new generation of hobbyist programers.

Arc Lisp (2008)

- Arc. By Paul Graham and Robert Morris.

- Implemented on top of MzScheme (now Racket), drawing on both Scheme and Common Lisp.

- Paul thinks we need a language for “hackers”.

- The language design focuses on “exploratory programing”.

Qi lisp (~2005)

Qi, a modern lisp. By recluse Mark Tarver. It has an optional type system based on sequent calculus.

Shen (2011)

- Successor of Qi lisp.

- https://shenlanguage.org/

Clojure (2007)

- Clojure, by Rich Hickey.

- A new lisp dialect on Java platform.

- Focused on functional programing, immutable datatypes, and concurrent programing.

Scheme (1975)

Scheme was designed by Guy L Steele and Gerald Jay Sussman.

Made popular in part by the book Structure and Interpretation of Computer Programs.

Scheme, notably PLT Scheme (now known as Racket). Used mostly for teaching and language research.

Dylan (1992)

Dylan. Dead. Apple's re-invention of lisp in the 1990s.

ML (Meta Language) Family

OCaml. Many current theorem proving systems are written in OCaml or another ML dialect.

F Sharp (2005)

FSharp. Designed by Don Syme. Microsoft's functional language, based on OCaml.

Other

Alice. Concurrent, ML derivative. Saarland University, Germany.

ML/OCaml derived Proof systems in industrial use:

- HOL theorem prover family

- HOL Light

- Isabelle

- Coq

Modern Functional Languages

Erlang. Functional, concurrent. Mostly used in the telecommunication industry for its concurrency and continuous up-time features.

Haskell → from 1990, classic functional language. Mostly used in academia for teaching and language research.

Concurrent Clean → pure functional programing language, similar to Haskell.

Mercury → Logic, functional.

Q → Functional language, based on term rewriting. Replaced by Pure.

Oz → Concurrent. Multi-paradigm. Mostly used in teaching.

Perl derivative

- PHP → Perl derivative for server side web apps. One of the top 5 most popular languages.

- Ruby → Perl with rectified syntax and semantics. Somewhat used in industry. User numbers probably less than 5% of Perl or Python.

- Perl6 → Next generation of perl. In alpha stage.

- Sleep → A scripting language, perl syntax. On Java platform. http://sleep.dashnine.org/

On Java Virtual Machine:

- Scala → A FP+OOP language on Java platform as a Java alternative.

- Groovy → Scripting language on Java platform.

C derivatives:

- ObjectiveC → Strict superset of C. Used as the primary language by Apple for OS X app dev.

- C# → Microsoft's answer to Java. Quickly becoming top 10 language with Microsoft's “.NET” architecture.

- D → Clean up of C++.

- Go → Google's new language as improvement of C.

2D graphics related.

- Scratch → Derived from SmallTalk + Logo. Primarily for teaching children programing.

- ActionScript → Based on JavaScript but for interactive 2D graphics. Quickly became a top 10 language due to the popularity of Adobe Flash.

- Processing → 2D graphics on Java platform. Primarily used for art and teaching.

JavaScript

- JavaScript.

- Mostly for web browser scripting.

- One of the top 10 most popular languages.

- Quickly becoming the standard language for general purpose programing.

- In the 2000s it was used in Adobe Flash, Dashboard Widgets, and for scripting Adobe PDF files, Microsoft Windows, and Java.

- Since 2010 it's used for smart phone, web frontend, web server, and also desktop apps.

PowerShell

- PowerShell.

- A modern shell.

- A scripting language designed also for interactive use.

- Syntax similar to Perl and unix shell tools, but based on .NET.

Tcl

Tcl. Scripting, especially for GUI.

Lua

Lua. Scripting, popular as a scripting language in games.

Linden Scripting Language

- Linden Scripting Language.

- Used in virtual world Second Life.

Some Random Thoughts

Following are some random comments on comp languages.

Listing Criterion and Popularity

In the above, i tried to not list implementations. (For example, there is a huge number of Scheme implementations on the JVM with fluff here and there; also, for example: Jython, JRuby, and quite a lot more.) Also, i tried to avoid minor derivatives or variations. Also, i tried to avoid languages that are one man's fancy with little following.

In the above, i tried to list only “new” languages that are born or seen with high activity or awareness after 2000. But without this criterion, there are quite a few staples that still have significant user base. e.g. APL, Fortran, Cobol, Forth, Logo, Pascal (Ada, Modula, Delphi). And others that are today top 10 most popular languages: C++, Visual Basic.

The user base of the languages differs by orders of magnitude. Some, such as PHP and C#, are within the top 10 most popular languages by active users. Some others are niche but still have a sizable user base, such as LSL, Erlang, WolframLang. Others are niche but robust and industrial (counting academia), such as Coq (a proof system), Processing, PLT Scheme, AutoLISP. A few are mostly academic, followed by a handful of researchers or experimenters; Qi, Arc, Mercury, Q, Concurrent Clean are probably examples.

Why The List

I was prompted to scan these new languages because recently i wrote an article titled Fundamental Problems of Lisp, Syntax Irregularity (2008), which mentioned my impression of a proliferation of languages (and all sorts of computing tools and applications).

10 years ago, in the dot com days (~1998), when Java, JavaScript, Perl were making the rounds, it was my opinion that lisp would inevitably become popular in the future, simply due to its inherent superior design, simplicity, flexibility, power, whatever its existing problems may be. Now i don't think that'll ever happen as is. Because, due to the tremendous technological advances, in particular in communication (i.e. the internet and its consequences, for example, Wikipedia, YouTube, YouPorn, social network sites, blogs, instant chat, etc), computer languages are proliferating like never before. (For example, Erlang, OCaml, Haskell, PHP, Ruby, c#, f#, perl6, arc, newLISP, Scala, Groovy, Goo, Nice, E, Q, Qz, Mercury, Scratch, Flash, Processing, …, helped by the abundance of tools, libraries, parsers, and existing infrastructure.) New languages will basically have all the advantages of lisps, or of lisp's fundamental concepts or principles. I see that, perhaps in the next decade, as communication technologies further hurl us forward, the proliferation of languages will give way to a trend of consolidation (for example, fueled by virtual machines such as Microsoft's .NET).

Creating a language is Easy

In general, creating a language is relatively easy to do in comparison to equivalent-sized programing tasks in the industry (such as, for example, writing a robust signal processing lib, a web server (for example, a video web server), a web app framework, a game engine … etc.). Computing tasks typically have a goal, where all sorts of complexities and nitty-gritty details arise in the coding process. Creating a language often is simply based on an individual's creativity that doesn't have many fixed constraints, much as in painting or sculpting. Many languages that have become popular in fact arose this way. Popularly known examples include Perl, Python, Ruby, Perl6, Arc. Creating a language requires the skill of writing a compiler though, which isn't trivial, but today with the mega proliferation of tools, even the need for compiler writing skill is reduced. (For example, Arc, various languages on JVM. (Even 10 years ago, writing a parser was mostly not required, due to existing tools such as lex/yacc.))

Some languages are created to solve an immediate problem or need. Mathematica, Adobe Flash's ActionScript, Emacs Lisp, LSL would be good examples. Some are created as computer science research byproducts, usually using or resulting in a new computing model. Lisp, Prolog, SmallTalk, Haskell, Qi, Concurrent Clean are of this type.

Some are created by corporations from scratch for one reason or another. For example, Java, JavaScript, AppleScript, Dylan, C#. The reason is mostly to make money by creating a language that solves perceived problems or needs, as innovation. The problem may or may not actually exist. (C# is a language created primarily to overrun Java. Java was created first as a language for embedded devices, then Sun Microsystems pushed it to ride the internet wave, with a vision of “write once run everywhere” and interactivity in the web browser. In hindsight, Java's contribution to the science of computer languages is probably just a social one, mainly in popularizing the concept of a virtual machine and automatic memory management (so-called Garbage Collection), and further popularizing OOP after C++.)

Infinite Number of Syntax and Semantics

Looking at some tens of languages, one might think that there might be some unifying factor, some unifying theory or model, that limits the potential creation to a small set of types, classes, models. With influence from Stephen Wolfram's book “A New Kind of Science” [see Notes on A New Kind of Science (Cellular Automata, Computation Systems)], i'd think this is not so. That is to say, different languages are potentially endless, and each can become quite useful or important or gain a sizable user base. In other words, i think there is no theoretical basis that would govern which languages will be popular due to their technical/mathematical properties. Perhaps another way to phrase this imprecise thought is that languages will keep proliferating, and even if we don't count languages that are created by one man's fancy, there will probably still be births of languages forever, and they will all be useful or solve some niche problem, because there is no theoretical or technical reason that sometime in the future there would be one language that can be fittingly used to solve all computing problems.

Also, the possibilities of a language's syntax are basically unlimited, even considering the constraint that they be practical and human readable. So, any joe can potentially create a new syntax. The syntax of existing languages, when compared to the number of all potentially possible (human readable) syntaxes, is probably a very small fraction. That is to say, even with so many existing languages today with their wildly differing syntax, we probably are just seeing a few pixels in a computer screen.

Also note that all languages mentioned here are plain-text linear ones. Spread sheets and visual programing languages would be examples of 2D syntax… but i haven't thought about how they can be classified as syntax.

Just some extempore thoughts.

Addendum

Pure

A pattern matching, term-rewriting lang.

Pure is a dynamically typed, functional programming language based on term rewriting. It has facilities for user-defined operator syntax, macros, multiple-precision numbers, and compilation to native code through the LLVM. It is the successor to the Q programming language.

The syntax of Pure resembles that of Miranda and Haskell, but it is a free-format language and thus uses explicit delimiters (rather than indentation) to indicate program structure.

    fib 0 = 0;
    fib 1 = 1;
    fib n = fib (n-2) + fib (n-1) if n>1;

    // Better (tail-recursive and linear-time) version:
    fib n = fibs (0,1) n with
      fibs (a,b) n = if n<=0 then a else fibs (b,a+b) (n-1);
    end;
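The fib equations above are rewrite rules: an expression is repeatedly replaced by the right-hand side of a matching equation until no rule applies. To get a feel for term rewriting in general, here is a minimal Python sketch. It is not how Pure works internally (Pure compiles its rules to native code through LLVM); the tuple representation of terms and the function names `rewrite_once` and `normalize` are assumptions made up for this illustration, and the rules are just a few arithmetic simplifications.

```python
# Terms are ints, strings (symbols), or tuples of the form (op, left, right).
# This representation is hypothetical, chosen only for illustration.

def rewrite_once(term):
    """Apply one simplification rule at the root, or return the term unchanged."""
    if isinstance(term, tuple):
        op, a, b = term
        if op == "+" and b == 0:
            return a                      # x + 0  ->  x
        if op == "+" and a == 0:
            return b                      # 0 + x  ->  x
        if op == "*" and (a == 0 or b == 0):
            return 0                      # x * 0  ->  0
        if op == "*" and b == 1:
            return a                      # x * 1  ->  x
        if op == "*" and a == 1:
            return b                      # 1 * x  ->  x
    return term


def normalize(term):
    """Rewrite subterms first, then the root, until no rule applies."""
    if isinstance(term, tuple):
        op, a, b = term
        term = (op, normalize(a), normalize(b))
    reduced = rewrite_once(term)
    return term if reduced == term else normalize(reduced)


# (x * 1) + (y * 0) reduces step by step to the symbol x.
result = normalize(("+", ("*", "x", 1), ("*", "y", 0)))
```

Every rule here shrinks the term, so the process always terminates. A real rewriting language lets the user add rules like these directly as equations, which is what the fib definitions do.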

Vala (2006), Genie

Vala is an object-oriented programming language with a self-hosting compiler that generates C code and uses the GObject system.

Vala is syntactically similar to C# and includes several features such as: anonymous functions, signals, properties, generics, assisted memory management, exception handling, type inference, and foreach statements.[2] Its developers Jürg Billeter and Raffaele Sandrini aim to bring these features to the plain C runtime with little overhead and no special runtime support by targeting the GObject object system.

https://wiki.gnome.org/Projects/Vala

Vala - Compiler for the GObject type system

Vala is a programming language that aims to bring modern programming language features to GNOME developers without imposing any additional runtime requirements and without using a different ABI compared to applications and libraries written in C.

Genie

https://wiki.gnome.org/action/show/Projects/Genie

Genie is a programming language, in the same vein as Vala. Genie allows for a more modern programming style while being able to effortlessly create and use GObjects natively.

The syntax is designed to be clean, clear and concise and is derived from numerous modern languages like Python, Boo, D language and Delphi. For a sample, take a look at instance.gs from the Khövsgöl project.

Genie is very similar to Vala in functionality but differs in syntax allowing the developer to use cleaner and less code to accomplish the same task.

Factor (2003)

Factor is a stack-oriented programming language created by Slava Pestov. Factor is dynamically typed and has automatic memory management, as well as powerful metaprogramming features. The language has a single implementation featuring a self-hosted optimizing compiler and an interactive development environment. The Factor distribution includes a large standard library.
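To show what “stack-oriented” means in practice, here is a small Python sketch of a postfix evaluator. It is only an illustration of the evaluation model, not Factor's implementation: the function name `run` and the `words` table are made up for this example, and only four words are supported (real Factor has quotations, combinators, a compiler, and much more).

```python
def run(program: str) -> list:
    """Evaluate a whitespace-separated postfix program and return the final stack."""
    stack = []
    # Each word takes the stack and modifies it in place (hypothetical mini vocabulary).
    words = {
        "+": lambda s: s.append(s.pop() + s.pop()),
        "*": lambda s: s.append(s.pop() * s.pop()),
        "dup": lambda s: s.append(s[-1]),                 # copy the top item
        "swap": lambda s: s.extend([s.pop(), s.pop()]),   # exchange the top two items
    }
    for token in program.split():
        if token in words:
            words[token](stack)
        else:
            stack.append(int(token))   # anything else is treated as an integer literal
    return stack


print(run("2 3 + dup *"))   # [25]
```

Each token either pushes a value or consumes and produces values on the stack, so `2 3 + dup *` computes (2 + 3) squared without any named variables.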

The Fantom Language, and a Scathing Review of Scala

There are endless languages. Just discovered Fantom.

Fantom is a general purpose object-oriented programming language created by Brian and Andy Frank[4] that runs on the Java Runtime Environment (JRE), JavaScript, and the .NET Common Language Runtime (CLR) (.NET support is considered “prototype”[5] status).

Its primary design goal is to provide a standard library API[6] that abstracts away the question of whether the code will ultimately run on the JRE or CLR.

Like C# and Java, Fantom uses a curly brace syntax.

The language supports functional programming through closures and concurrency through the Actor model. Fantom takes a “middle of the road” approach to its type system, blending together aspects of both static and dynamic typing.

Also, there's this scathing review of Scala by someone who seems to be a Java platform expert.

Scala feels like EJB 2, and other thoughts By Stephen Colebourne. @ http://blog.joda.org/2011/11/scala-feels-like-ejb-2-and-other.html

Go (2009)

- Go began in 2009, created by Robert Griesemer, Rob Pike, Ken Thompson.

- Go is a low-level programing language, originally designed to replace C++.

- Syntax is like C.

- Speed similar to C, with memory management.

- Its ease of use is somewhat like that of a scripting language.

Rust (2010)

Rust came out in 2010.

Rust is a systems programming language sponsored by Mozilla which describes it as a “safe, concurrent, practical language” , supporting functional and imperative-procedural paradigms. Rust is syntactically similar to C++, but its designers intend it to provide better memory safety while still maintaining performance.

The language grew out of a personal project started in 2006 by Mozilla employee Graydon Hoare, who stated that the project was possibly named after the rust family of fungi. Mozilla began sponsoring the project in 2009 and announced it in 2010. The same year, work shifted from the initial compiler (written in OCaml) to the self-hosting compiler written in Rust. Named rustc, it successfully compiled itself in 2011. rustc uses LLVM as its back end.

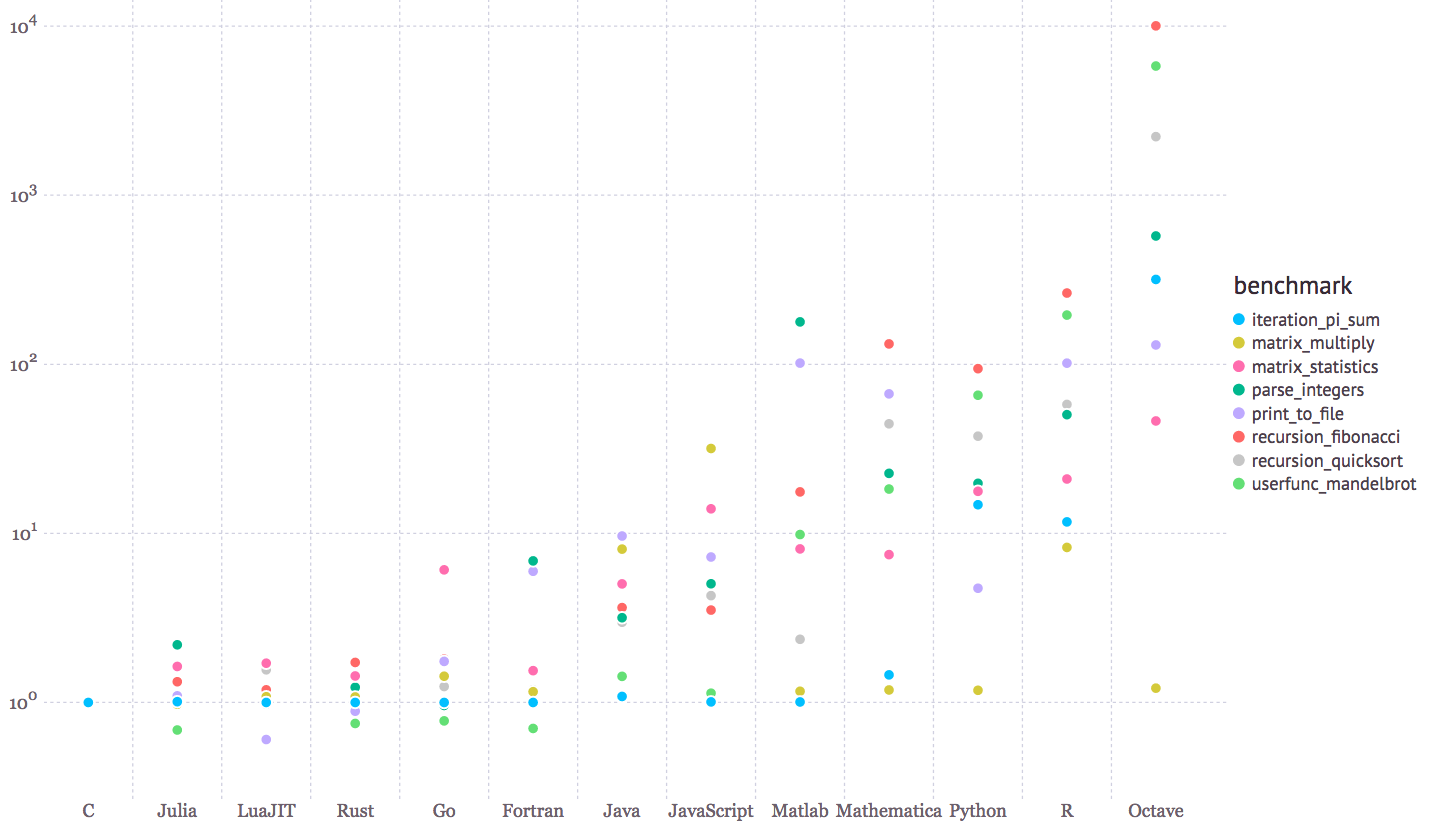

Julia (2011)

Julia is a new lang for scientific computing. New around 2011.

It's a competitor to number crunchers R, Fortran, MATLAB, etc, but designed to be about as fast as C.

CoffeeScript (2009)

One of the earliest wrapper languages for JavaScript.

Basically, JavaScript with a syntax skin.

Dart (2011)

Dart came out in 2011. It is basically typed JavaScript in Java syntax AND semantics.

    import 'dart:async';
    import 'dart:html';
    import 'dart:math' show Random;

    // We changed 5 lines of code to make this sample nicer on
    // the web (so that the execution waits for animation frame,
    // the number gets updated in the DOM, and the program ends
    // after 500 iterations).

    main() async {
      print('Compute π using the Monte Carlo method.');
      var output = querySelector("#output");
      await for (var estimate in computePi().take(500)) {
        print('π ≅ $estimate');
        output.text = estimate.toStringAsFixed(5);
        await window.animationFrame;
      }
    }

    /// Generates a stream of increasingly accurate estimates of π.
    Stream<double> computePi({int batch: 100000}) async* {
      var total = 0;
      var count = 0;
      while (true) {
        var points = generateRandom().take(batch);
        var inside = points.where((p) => p.isInsideUnitCircle);
        total += batch;
        count += inside.length;
        var ratio = count / total;

        // Area of a circle is A = π⋅r², therefore π = A/r².
        // So, when given random points with x ∈ <0,1>,
        // y ∈ <0,1>, the ratio of those inside a unit circle
        // should approach π / 4. Therefore, the value of π
        // should be:
        yield ratio * 4;
      }
    }

    Iterable<Point> generateRandom([int seed]) sync* {
      final random = new Random(seed);
      while (true) {
        yield new Point(random.nextDouble(), random.nextDouble());
      }
    }

    class Point {
      final double x, y;
      const Point(this.x, this.y);
      bool get isInsideUnitCircle => x * x + y * y <= 1;
    }

TypeScript (2012)

http://www.typescriptlang.org/

Microsoft created TypeScript in 2012.

TypeScript is a typed superset of JavaScript that compiles to plain JavaScript.

asm.js

Then, in ~, asm.js is created. Home page at http://asmjs.org/ .

It's a subset of JavaScript that engines can optimize to run very fast, close to C/C++ speed. Because it's a subset, it runs in any browser. The typical application is for games written in C/C++ to be compiled into asm.js and run (fast enough) in the browser. The Unreal Engine has been ported to asm.js.

Here are some blog posts about it:

One of the asm.js creators:

- What asm.js is and what asm.js isn't

- By Alon Zakai.

- http://mozakai.blogspot.com/2013/06/what-asmjs-is-and-what-asmjs-isnt.html

jQuery author:

- Asm.js: The JavaScript Compile Target

- By John Resig.

- http://ejohn.org/blog/asmjs-javascript-compile-target/

- asm.js: A Low Level, Highly Optimizable Subset of JavaScript for Compilers

- By Devon Govett.

- http://badassjs.com/post/43420901994/asm-js-a-low-level-highly-optimizable-subset-of

OMeta

A lang based on Parsing Expression Grammar, called OMeta, by Alessandro Warth.

- OMeta: an Object-Oriented Language for Pattern Matching

- By Alessandro Warth.

- http://tinlizzie.org/ometa/

[Tom Novelli https://plus.google.com/108241330104058929067/posts] provided the following insights and links:

Yeah, that seems promising. there's actually a long history behind it; see for example

- Pragmatic Parsing in Common Lisp

- By Henry G Baker, Nimble Computer Corporation.

http://home.pipeline.com/~hbaker1/Prag-Parse.html

I found a copy of Val Schorre's META-II paper from 50 years ago… and some followup work throughout the 1960s (For example, TREE-META)… these guys were in Douglas Engelbart's group. Then apparently the military took it over and made it classified, probably ruined it with bureaucracy rather than developing it into awesome top-secret technology, heh ☺

- The programming languages behind “the mother of all demos”

- By Ehud Lamm.

- http://lambda-the-ultimate.org/node/3122

- TREE-META Manual By F R A Hopgood. @ http://www.chilton-computing.org.uk/acl/literature/manuals/tree-meta/contents.htm

Elm (2012)

Elm is a functional programming language for declaratively creating web browser based graphical user interfaces. Elm uses the Functional Reactive Programming style and purely functional graphical layout to build user interface without any destructive updates.

Elm was designed by Evan Czaplicki as his thesis in 2012. The first release of Elm came with many examples and an online editor that made it easy to try out in a web browser.[3] Evan Czaplicki now works on Elm at Prezi.[4]

The initial implementation of the Elm compiler targets HTML, CSS, and JavaScript.[5] The set of core tools has continued to expand, now including a REPL,[6] package manager,[7] time-traveling debugger,[8] and installers for Mac and Windows.[9] Elm also has an ecosystem of community created libraries.[10]

Haxe

Haxe is an open source high-level multi-platform programming language and compiler that can produce applications and source code for many different platforms from a single code-base.[1][2][3][4]

Haxe includes a set of common functionality that is supported across all platforms, such as numeric data types, text, arrays, binary and some common file formats.[2][5] Haxe also includes platform-specific API for Adobe Flash, C++, PHP and other languages.[2][6]

Code written in the Haxe language can be source-to-source compiled into ActionScript 3 code, JavaScript programs, Java, C#, C++ standalone applications, Python, PHP, Apache CGI, and Node.js server-side applications.[2][5][7]

Haxe is also a full-featured ActionScript 3-compatible Adobe Flash compiler, that can compile a SWF file directly from Haxe code.[2][8] Haxe can also compile to Neko applications, built by the same developer.

Major users of Haxe include TiVo, Prezi, Nickelodeon, Disney, Mattel, Hasbro, Coca Cola, Toyota and BBC.[9][10] OpenFL and Flambe are popular Haxe frameworks that enable the creation of multi-platform content from a single codebase.[10] With HTML5 dominating over Adobe Flash in recent years, Haxe, Unity and other cross-platform tools are increasingly necessary to target modern platforms while providing backward compatibility with Adobe Flash Player.[10][11]

Hacklang (2014)

Facebook created a new lang: hacklang. Basically, it's PHP with optional type system, with type inference. Designed to run existing PHP code as much as possible. Already deployed in large scale by Facebook.

I think this is fantastic.

announcement:

- Hack: a new programming language for HHVM

- By Julien Verlaguet, Alok Menghrajani.

- https://code.facebook.com/posts/264544830379293/hack-a-new-programming-language-for-hhvm/

home page http://hacklang.org/

egison (2011)

egison, pattern matching language.

Swift (2014)

Swift is a multi-paradigm, compiled programming language created by Apple Inc. for iOS, OS X, and watchOS development. Swift is designed to work with Apple's Cocoa and Cocoa Touch frameworks and the large body of existing Objective-C code written for Apple products. Swift is intended to be more resilient to erroneous code (“safer”) than Objective-C, and also more concise. It is built with the LLVM compiler framework included in Xcode 6, and uses the Objective-C runtime, allowing C, Objective-C, C++ and Swift code to run within a single program.[8]

Elixir (2011)

Elixir is a functional, concurrent, general-purpose programming language that runs on the Erlang virtual machine (BEAM). Elixir builds on top of Erlang to provide distributed, fault-tolerant, soft real-time, non-stop applications but also extends it to support metaprogramming with macros and polymorphism via protocols.[3]

- A language that compiles to bytecode for the Erlang Virtual Machine (BEAM)[5]

- Everything is an expression[5]

- Erlang functions can be called from Elixir without run time impact, due to compilation to Erlang bytecode, and vice versa

- Meta programming allowing direct manipulation of AST[5]

- Polymorphism via a mechanism called protocols. Like in Clojure, protocols provide a dynamic dispatch mechanism. However, this is not to be confused with multiple dispatch as Elixir protocols dispatch on a single type.

- Support for documentation via Python-like docstrings in the Markdown formatting language[5]

- Shared nothing concurrent programming via message passing (Actor model)[6]

- Emphasis on recursion and higher-order functions instead of side-effect-based looping

- Lightweight concurrency utilizing Erlang's mechanisms with simplified syntax (e.g. Task)[5]

- Lazy and async collections with streams

- Pattern matching[5]

- Unicode support and UTF-8 strings

- A Week with Elixir

- By Joe Armstrong (Creator Of Erlang).

http://joearms.github.io/2013/05/31/a-week-with-elixir.html
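The point in the feature list about protocols dispatching on a single type can be illustrated outside Elixir. Python's standard library has a comparable mechanism, `functools.singledispatch`, which picks an implementation based on the type of the first argument only. The sketch below is an analogy, not Elixir code, and the function name `describe` is made up for the example.

```python
from functools import singledispatch


@singledispatch
def describe(value) -> str:
    """Fallback used when no implementation is registered for the argument's type."""
    return "something else"


@describe.register
def _(value: int) -> str:
    # Chosen when the first argument is an int.
    return f"integer {value}"


@describe.register
def _(value: list) -> str:
    # Chosen when the first argument is a list.
    return f"list of {len(value)} items"


print(describe(3))        # integer 3
print(describe([1, 2]))   # list of 2 items
print(describe(2.5))      # something else
```

As with Elixir protocols, new types can be given an implementation later without touching the original function, but the choice depends on one argument only, which is what separates this from multiple dispatch.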

Kotlin (2011)

New language, Kotlin, from JetBrains. Runs on Java Virtual Machine. Similar to Java, but designed to improve on Java.

Kotlin is a statically-typed programming language that runs on the Java Virtual Machine and also can be compiled to JavaScript source code. Its primary development is from a team of JetBrains programmers based in Saint Petersburg, Russia (the name comes from the Kotlin Island, near St. Petersburg).

While not syntax compatible with Java, Kotlin is designed to interoperate with Java code and is reliant on Java code from the existing Java Class Library, such as the collections framework.

nim (2008)

Nim is Python syntax with modernized C-like semantics and speed.

Nim came out in 2008.

Crystal (2014)

Ruby syntax but fast like C. Began in 2014.

pyret

- Pyret is designed to serve as an outstanding choice for programming education while exploring the confluence of scripting and functional programming.

- It's under active design and development.

- pyret, from the Racket Scheme Lisp group.

- pyret is basically Racket Scheme Lisp in Python syntax.

- pyret spells the end of the Scheme era, and the end of the excellence of the Rice University Programing Language Team (PLT).

- One of the stated reasons for pyret is to experiment with syntax.

- It dispels the lisp myth about how lisp macro/reader can change syntax.

sample pyret code:

    fun to-celsius(f):
      (f - 32) * (5 / 9)
    end

    for each(str from [list: "Ahoy", "world!"]):
      print(str)
    end

PureScript

PureScript is a small strongly typed programming language that compiles to JavaScript.

Reason ML

ReasonML is OCaml in JavaScript syntax. Created by Facebook.

P language

P is a language for asynchronous event-driven programming. P allows the programmer to specify the system as a collection of interacting state machines, which communicate with each other using events. P unifies modeling and programming into one activity for the programmer. Not only can a P program be compiled into executable code, but it can also be validated using systematic testing. P has been used to implement and validate the USB device driver stack that ships with Microsoft Windows 8 and Windows Phone. P is also suitable for the design and implementation of networked, embedded, and distributed systems.
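The idea of “a collection of interacting state machines, which communicate with each other using events” can be sketched in a few lines of Python. This is not P syntax and does not use any P tooling; the `Machine` class, the `run` scheduler, and the switch/lamp example are all hypothetical names invented for illustration. It also leaves out the part that makes P interesting, the systematic testing of different event interleavings.

```python
from collections import deque


class Machine:
    """A state machine with its own event inbox (hypothetical helper class)."""

    def __init__(self, name, start, table):
        self.name = name
        self.state = start
        # table maps (state, event) -> (next_state, [(target_name, event), ...])
        self.table = table
        self.inbox = deque()

    def send(self, event):
        self.inbox.append(event)

    def step(self, machines):
        """Handle one queued event; return False when the inbox is empty."""
        if not self.inbox:
            return False
        event = self.inbox.popleft()
        # A KeyError here means the event is unhandled in the current state.
        next_state, outgoing = self.table[(self.state, event)]
        self.state = next_state
        for target, out_event in outgoing:
            machines[target].send(out_event)
        return True


def run(machines):
    """Step all machines round-robin until every inbox is empty."""
    progressed = True
    while progressed:
        progressed = False
        for machine in machines.values():
            if machine.step(machines):
                progressed = True


switch = Machine("switch", "up", {
    ("up", "press"): ("down", [("lamp", "power_on")]),
    ("down", "press"): ("up", [("lamp", "power_off")]),
})
lamp = Machine("lamp", "dark", {
    ("dark", "power_on"): ("lit", []),
    ("lit", "power_off"): ("dark", []),
})
machines = {"switch": switch, "lamp": lamp}

switch.send("press")
run(machines)
print(switch.state, lamp.state)   # down lit
```

The two machines never read each other's state. All interaction goes through events placed in an inbox, which is the property that lets a tool explore different delivery orders when validating such a system.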

LEAN Lang

L∃∀N

Lean is a new open source theorem prover being developed at Microsoft Research, and its standard library at Carnegie Mellon University. The Lean Theorem Prover aims to bridge the gap between interactive and automated theorem proving, by situating automated tools and methods in a framework that supports user interaction and the construction of fully specified axiomatic proofs. The goal is to support both mathematical reasoning and reasoning about complex systems, and to verify claims in both domains.

The Lean project was launched by Leonardo de Moura at Microsoft Research in 2013. It is a collaborative open source project, hosted on GitHub. Contributions to the code base and library are welcome.

You can experiment with an online version of Lean that runs in your browser, or use an online tutorial.

[2016-12-26 http://leanprover.github.io/about/]

functional programming language with a proven-correct compiler and runtime system.

someone asked why not perl6, and i said it's 18 years late and too many other interesting languages today. He asked for examples.

several classes to consider:

- does it have C speed? e.g. golang, rust, julia, nim, crystal.

- can it do symbolic term rewriting? Mathematica, lisp.

- can it define operators, do matrix computation? e.g. array langs. Mathematica, apl, matlab, julia.

- can it do logic programing? prolog, mercury.

- can it do parallelism and concurrency? e.g. golang, clojure, erlang, alice.

- can it do math proofs? e.g. coq, agda, idris.

etc.

Microsoft Bosque Language

https://github.com/Microsoft/BosqueLanguage

beyond_structured_report_v2.pdf

The language is designed based on TypeScript syntax and JavaScript semantics.

The language is supposed to avoid the following problems, which they call complexity:

- mutable state and frames. → no mutable variables.

- loops, recursion, and invariants. → the language has no loop construct. instead, use functions like map, forEach etc.

- indeterminate behaviors → e.g. keywords like undefined, null, and others.

- Data Invariant Violations → stop updating array etc elements. provide a more controlled way to do so.

- Equality and Aliasing → reference equality, value equality, etc.
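The first two points in the list above (no mutable variables, no loop construct) describe a style that can be tried in most languages. Here is a rough Python sketch of that style, using immutable tuples and `map`/`filter`/`reduce` in place of loops. It is not Bosque code and says nothing about Bosque's actual syntax or library; the variable names and the price data are made up.

```python
from functools import reduce

# Prices in cents, stored in a tuple so they cannot be updated in place.
prices = (1250, 300, 4000, 725)

# Each result is a new value computed from the old one; nothing is mutated,
# and no explicit loop or loop counter appears.
doubled = tuple(map(lambda p: p * 2, prices))
expensive = tuple(filter(lambda p: p > 1000, prices))
total = reduce(lambda acc, p: acc + p, prices, 0)

print(doubled)     # (2500, 600, 8000, 1450)
print(expensive)   # (1250, 4000)
print(total)       # 6275
```

Without loops there are no loop invariants to get wrong, and without in-place updates there is no question of which reference sees a change, which is the kind of complexity the Bosque report is trying to remove.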