Xah Programing Blog

- quite amazing what we have.

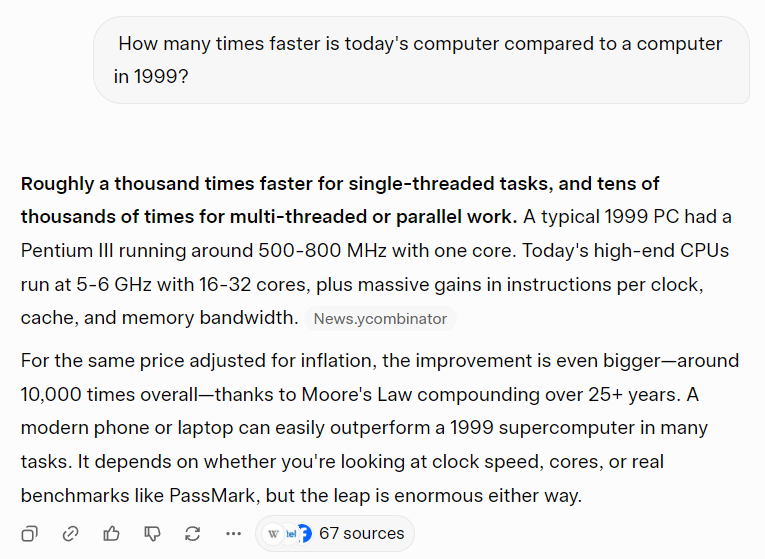

- How many times faster is today's computer compared to a computer in 1999?

- about one thousand.

- misery.

- the ligature lovers create complexity.

- and when you work with real unicode math symbols → ≥ ≠ ≈ ≡ ⇒ ⟺ ∧ ∨, you get death.

- updated.

- the nasty part is that many languages follow the unicode recommendation of allowing only letters in function or variable names.

- this prevents you from using ⊕ ∑ ° ∞.

ai generated videos often have a nonsensical problem, i.e. people seem to be looking slightly off the subject they meant to be looking at, or making a weird approximation of some action...

repost

- C Is Not a Low-level Language, by David Chisnall 2018

- C and Go, Models of Computation. by David Chisnall. 2012

new articles. pulled out from Programing Language and Its Machine (2024)

minor updates

ok, for prog langs, these i find great:

- History of Object Oriented Programing, by Casey Muratori (2025)

- Guy Steele on Parallel Programing. (Get rid of lisp cons) 2011

- Rust in Perspective. Programing Language History. By Linus Walleij (2022)

- Python vs OCaml (2014)

- What Happens When You Replace C by Go in Linux (2024)

- Programing Language and Its Machine (2024)

ok that's it 😊

updates

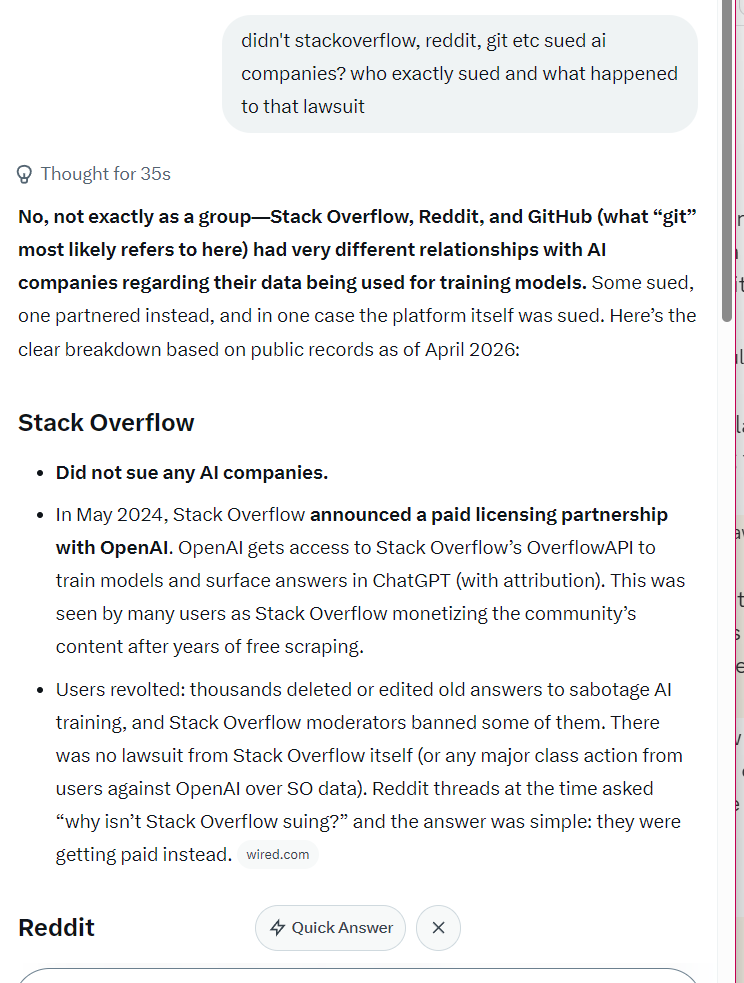

- didn't stackoverflow, reddit, git etc sue ai companies? who exactly sued and what happened to those lawsuits

- https://x.com/i/grok/share/bd5e21d371e4433b893c07a626ee57fa

it's a bloodbath.

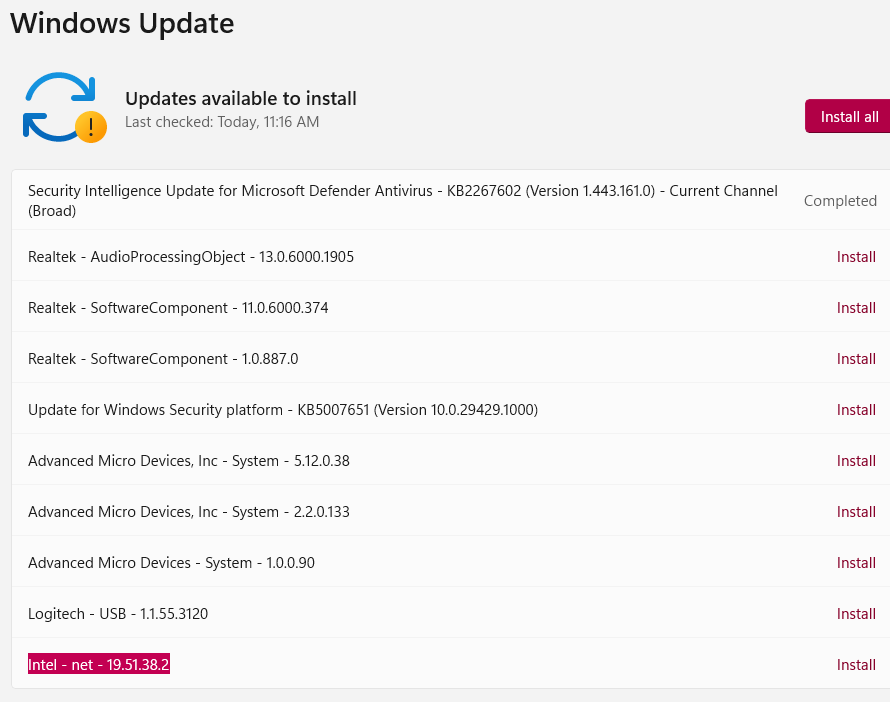

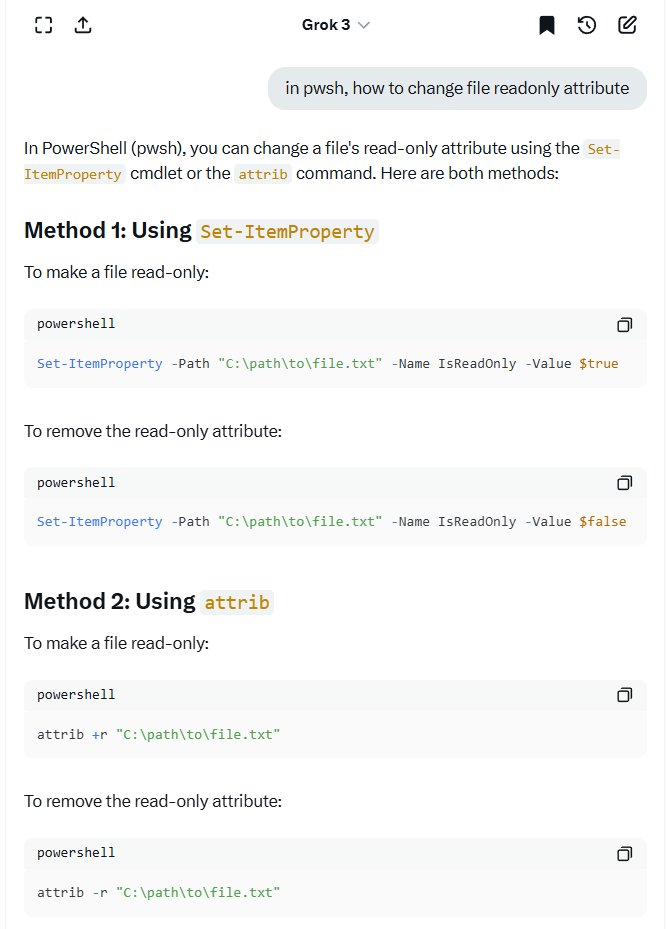

- gosh, how windows sucks.

- by default, you can't have a file path longer than 260 chars.

- (folders plus file name)

- https://x.com/xah_lee/status/2044233940236746819

- what is the allowed max file name char count?

- and what is the max length of allowed file full path?

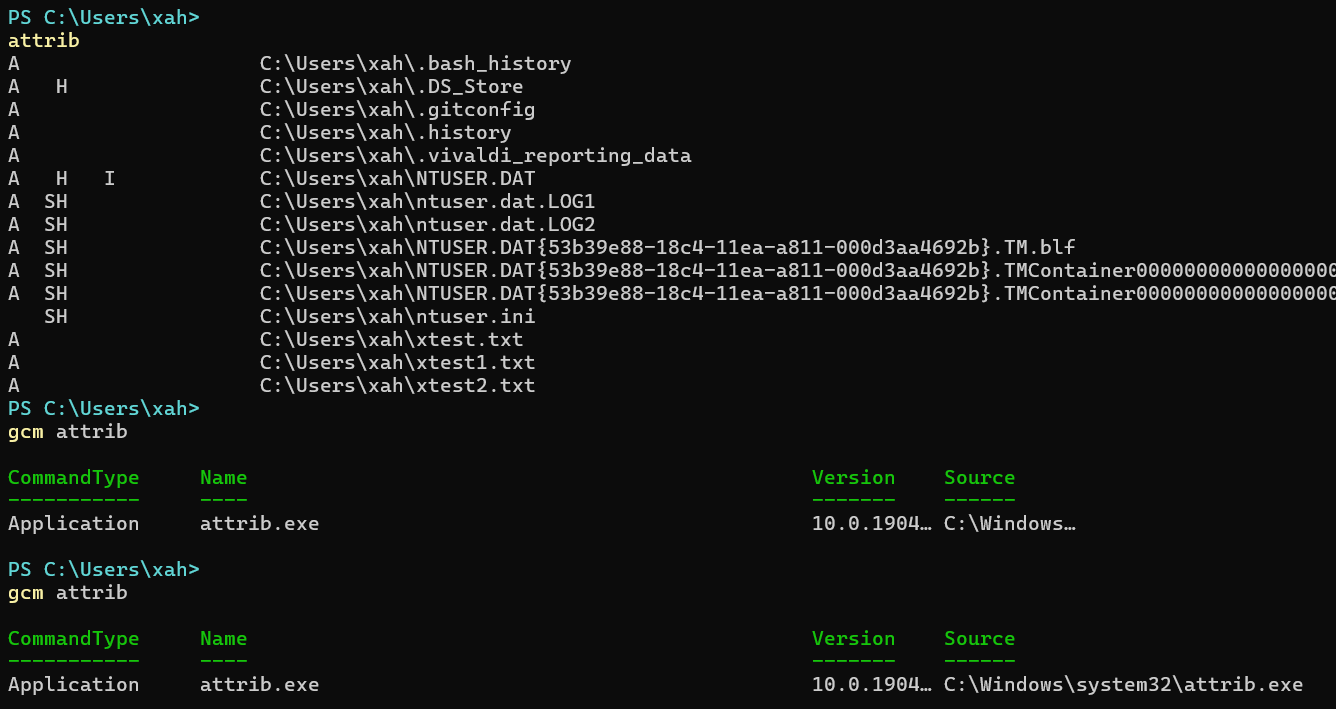

how to determine if i have the long path feature enabled on windows 11

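```powershell
# check whether long paths are enabled (LongPathsEnabled : 1 means enabled)
Get-ItemProperty -Path "HKLM:\SYSTEM\CurrentControlSet\Control\FileSystem" | Select-Object LongPathsEnabled

# enable long paths (needs an elevated, i.e. administrator, PowerShell)
Set-ItemProperty -Path "HKLM:\SYSTEM\CurrentControlSet\Control\FileSystem" -Name "LongPathsEnabled" -Value 1 -Type DWord
```

note: even when enabled, an app must also declare itself long-path aware (longPathAware in its manifest) to use paths over 260 chars.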
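If you want to see which of your files would hit the limit, here is a minimal Python sketch. The function name `find_long_paths` is hypothetical, and the threshold of 260 is the classic Windows MAX_PATH, which counts the terminating NUL, so paths of 260 chars or more are the ones that break legacy programs.

```python
import os

def find_long_paths(root, limit=260):
    """Return full paths under root whose length is >= limit chars.

    260 is the classic Windows MAX_PATH; it includes the terminating NUL,
    so 259 visible chars is the longest path legacy APIs accept.
    """
    long_paths = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            full = os.path.abspath(os.path.join(dirpath, name))
            if len(full) >= limit:
                long_paths.append(full)
    return long_paths
```

Usage, e.g. `for p in find_long_paths(r"C:\Users"): print(len(p), p)`, with whatever directory you care about as the root.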

you can't do math in a livestream, because every second you have many thoughts, and each second of thought that flashes thru your mind takes a minute or more to actually explain.

in a livestream, you can only do math that you already know, or solve problems you are already familiar with.

coding math is similar.

here's a quick take on why the python json module doc is extremely idiotic. https://docs.python.org/3/library/json.html

The first paragraph, with many links to RFC and ECMA-404, is unnecessary. Move reference links to the bottom.

The warning box about reading untrusted json is unnecessary. Move it to the bottom.

The third paragraph, about json exposing an API familiar to users of “marshal and pickle”, is unnecessary. Move it to the bottom.

When people check the python json doc, 99% of the time they want to use it, not listen to your pet peeves and sterile warnings. fuck python and fuck guido fuckhead. (my opinion only)

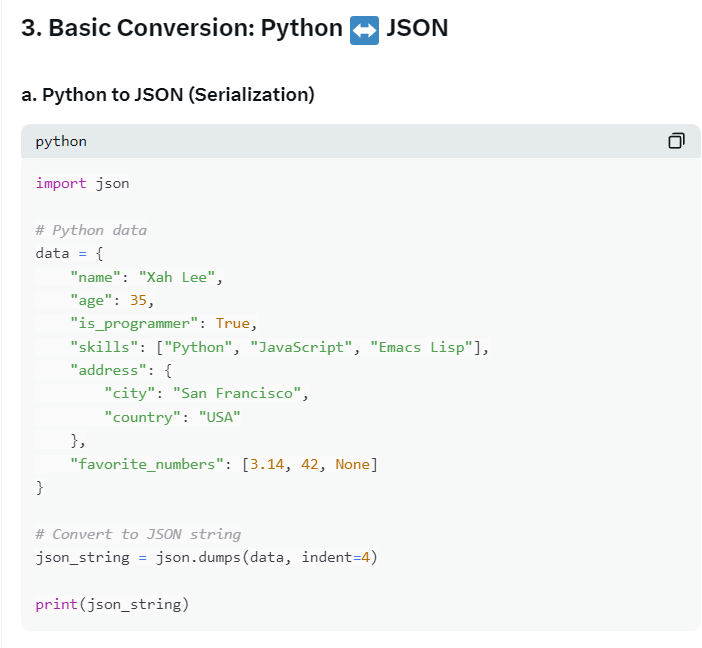

The 4th paragraph says: “Encoding basic Python object hierarchies”, which is completely motherfucking stilted and pretentious writing. A better example: “basic example of converting python object to json”.

The example, using the python interactive command line, is extremely idiotic and hard to read. Most coders viewing a doc wanna see real code, not an interactive prompt, plus real code can be copied and pasted.

etc.
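In the spirit of the critique above, here is roughly what a plain-script first example could look like. This is a sketch, not text from the official doc; it uses only the standard `json.dumps` and `json.loads`, and the sample data is made up.

```python
import json

# A Python dict with the basic JSON-compatible types
data = {"name": "xah", "langs": ["python", "wolfram"], "active": True, "score": None}

# Python object → JSON string
text = json.dumps(data)
print(text)  # {"name": "xah", "langs": ["python", "wolfram"], "active": true, "score": null}

# JSON string → Python object
back = json.loads(text)
print(back == data)  # True

# Pretty-printed, for humans
print(json.dumps(data, indent=2))
```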

- asking grok ai to write a python tutorial for json.

- it creates personalized examples. lol.

- fucking amazing. basically, the grok in twitter learns about you.

- since this year, for everything you ask, it creates personalized answers.

YouTube ai answers.

What topics are my viewers watching and talking about?

Based on recent viewer engagement and comments on your channel, Xah Lee, your audience is deeply invested in niche programming paradigms, high-end ergonomic hardware, and your unique, often provocative, takes on tech philosophy.

Here’s a breakdown of what they are watching and talking about:

- Programming Languages & Lisp Flavors

- The "Lisp" debate remains a cornerstone of your community's interest. Viewers are actively comparing flavors and debating the merits of Common Lisp, Emacs Lisp, Scheme, and Clojure.

- The "Lisp" Identity: There is significant discussion around whether "modern" languages like Python are "acceptable Lisps" and whether Lisp's failure to unify into a single standard was "catastrophic."

- Wolfram Language: Your sessions on Wolfram Language (specifically during Advent of Code) are popular, with viewers noting its power but also its debugging frustrations.

- Modern vs. Niche: Topics like F#, Julia, and Nim pique curiosity, particularly regarding why they remain niche.

- Ergonomic Hardware & The "Keyboard Boss"

- Hardware reviews, particularly for high-end or experimental devices, generate intense engagement.

- Svalboard & Datahand: Your deep dive into the Svalboard has viewers fascinated, with many calling it the "final boss" of split keyboards and asking about technical specifics like typing speed and steno-mode.

- Ergo Comparisons: There is a constant demand for comparisons between the Glove80, UHK 80, and Kinesis Advantage. Viewers are particularly concerned about wrist health and silent switches.

- The "Vibe": Your audience enjoys the contrast between your high-tech ergo setups and your "crusty" Logitech G502 mouse.

- Tech Philosophy & "Rants"

- Your critiques of established tech norms are a major draw.

- The "Unix Cult": Your rants against the "Unix philosophy" and Linux "fanatics" are highly polarizing. Some viewers strongly defend the scientific origins of Unix, while others appreciate your "suckless" perspective.

- Coding Syntax: Specific technical critiques, like the "C printf crime" and your "rock-string" syntax proposal, spark deep architectural discussions among your most technical viewers.

- AI & The Future: There is growing chatter about how AI tools like Grok or DeepSeek change the necessity of learning specific language syntaxes by 2026.

- Community & Livestreams

- Viewers frequently check in for your next live session, though some express frustration with YouTube's notification system not alerting them when you go live. They also seem to enjoy the "random rants" and personal updates, like your recent haircut!

considering removing all official logos from my programing language tutorial pages. the reason is, basically, they're copyrighted and must be used with consideration. but also, they're common, jaded. if i use custom artwork, it stands out more, but is less recognizable.

- changed the unicode page icon ∑ Xah Code (XahLee.info)

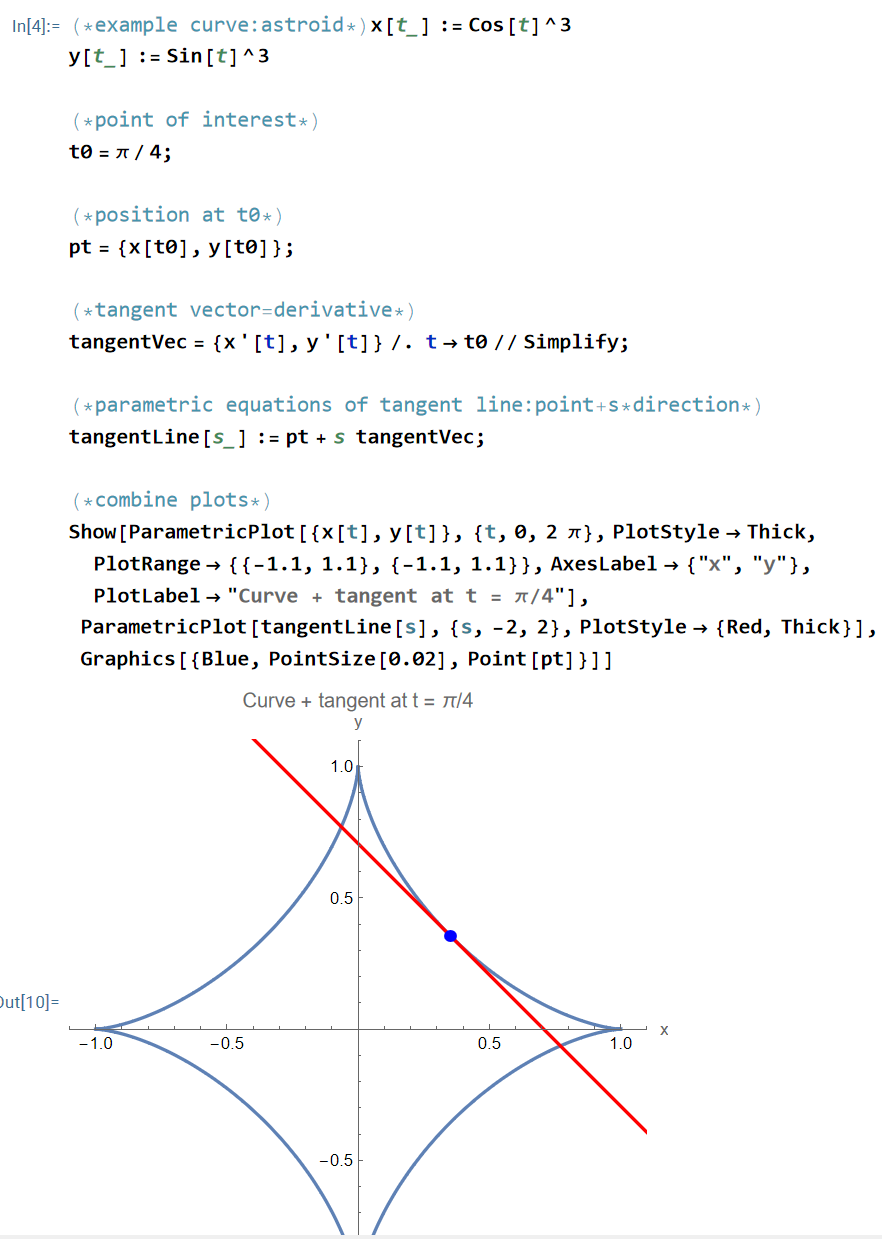

- omg. i was writing Wolfram language code to plot tangent.

- then i thought i'd ask grok.

- it created the code, and it's perfect!

- how to plot tangent of a parametric curve in wolfram

- https://x.com/i/grok/share/91882744ddc8492f862eb5772fd6efcb

```mathematica
(* example curve: astroid *)
x[t_] := Cos[t]^3
y[t_] := Sin[t]^3

(* point of interest *)
t0 = π/4;

(* position at t0 *)
pt = {x[t0], y[t0]};

(* tangent vector = derivative *)
tangentVec = {x'[t], y'[t]} /. t -> t0 // Simplify;

(* parametric equations of tangent line: point + s * direction *)
tangentLine[s_] := pt + s tangentVec;

(* combine plots *)
Show[
 ParametricPlot[{x[t], y[t]}, {t, 0, 2 π},
  PlotStyle -> Thick,
  PlotRange -> {{-1.1, 1.1}, {-1.1, 1.1}},
  AxesLabel -> {"x", "y"},
  PlotLabel -> "Curve + tangent at t = π/4"
 ],
 ParametricPlot[tangentLine[s], {s, -2, 2}, PlotStyle -> {Red, Thick}],
 Graphics[{Blue, PointSize[0.02], Point[pt]}]
]
```

```mathematica
{ Sqrt @ x, x // Cos, x |-> x+1, Function[x, 1/x], Function[1/#], ((1/#) &) }

(*
How Wolfram language displays these forms:
- the prefix form f@x and the postfix form x//f are not kept; they get converted to bracket form f[x].
- the full named-variable form Function[x, expr] remains as is.
- but if it uses #, as in Function[#+1], it gets converted to the short form #+1 &.
- the short form (#+1 &) remains as is, but with redundant parens removed.
- x |-> expr gets converted to Function[x, expr], unless in TraditionalForm, where it is shown as x ↦ expr.
*)
```

- list major communication tech in internet history, in chronological order, like email, newsgroup, mailing list, bbcode, instant message, wiki, website forum, social network etc

- https://x.com/i/grok/share/023368c83b14412195f7157c00ea5a21

Here is a chronological list of major communication technologies and platforms in internet/online history, focusing on those that enabled people to communicate, discuss, share, or socialize digitally (roughly from pre-web to modern eras).

I've included approximate introduction years and brief notes on their role/impact.

- Email (1971) → Ray Tomlinson sent the first network email on ARPANET → Became (and remains) the foundational asynchronous one-to-one / one-to-many communication tool.

- Mailing lists / Listservs (late 1970s–1980s) → Early automated email-based discussion groups (e.g. Listserv software in 1986 popularized them) → Group communication via broadcast emails, precursor to modern newsletters & discussion lists.

- Bulletin Board Systems (BBS) (late 1970s–1980s, peak ~1980s) → Dial-up systems (e.g. first in 1978) for message boards, file sharing, and local communities → Early "forums" before widespread internet access.

- Usenet / Newsgroups (1979–1980) → Decentralized discussion system with threaded newsgroups → One of the first global, many-to-many public discussion platforms (ancestor of web forums & Reddit-style threaded convos).

- Internet Relay Chat (IRC) (1988) → Real-time text chat in channels → The original instant messaging / group chat protocol, still used today.

- Early commercial online services (e.g. CompuServe CB Simulator 1980, AOL chat rooms ~1990s) → Proprietary real-time chat and forums → Brought chat & community to mainstream non-academic users.

- World Wide Web + personal/homepages (1991–1993) → Tim Berners-Lee's WWW → Enabled hyperlinked pages; early static sites often included guestbooks or simple contact forms.

- Web forums / Message boards (mid-1990s) → WWW-based threaded discussion boards (e.g. early software like WWW Interactive Talk ~1994, then phpBB, vBulletin ~1998–2000) → Replaced Usenet/BBS for most topic-based communities.

- BBCode (late 1990s) → Simple markup language for formatting posts in forums (introduced ~1998 by Ultimate Bulletin Board (UBB), later adopted by phpBB and others) → Made rich text (bold, quotes, images) easy in web forums without HTML knowledge.

- Instant Messaging (consumer level) (1996–1997) → ICQ (1996), AOL Instant Messenger (AIM, 1997), MSN Messenger (~1999), Yahoo! Messenger → One-to-one & small group real-time private chat, exploded in late 90s/early 2000s.

- Weblogs / Blogs (1997–1999) → Term "weblog" coined 1997, Blogger (1999) & Movable Type → Personal publishing + comment sections → Turned readers into participants.

- Wiki / collaborative editing (1995 first wiki by Ward Cunningham, but Wikipedia 2001) → Editable web pages by anyone → Radical shift to collective knowledge building & discussion via talk pages.

- Website forums (large-scale) (late 1990s–2000s) → Specialized community sites (e.g. Slashdot 1997, Something Awful, early Reddit-like) → Deep topic-specific discussions.

- Social network sites / Social media (1997–2000s) → SixDegrees.com (1997) → Friendster (2002) → MySpace (2003) → Facebook (2004) → Twitter/X (2006) → Instagram (2010) etc. → Shift to identity-centered, feed-based, multimedia, real-time social interaction.

- Modern group chat / messengers (2010s–) → WhatsApp (2009), Discord (2015), Telegram, Slack → Blended real-time chat, voice, communities, file sharing.

This sequence shows the evolution: from one-to-one email → group async (mailing lists, Usenet) → local dial-up communities (BBS) → global real-time text (IRC) → web-based persistent discussions (forums) → personal broadcasting with comments (blogs/wikis) → identity-driven social graphs (social networks) → always-on multimedia group chat.

Many overlapped and co-existed for years (e.g. email + Usenet + IRC in the 1990s, forums + early social media in 2000s).

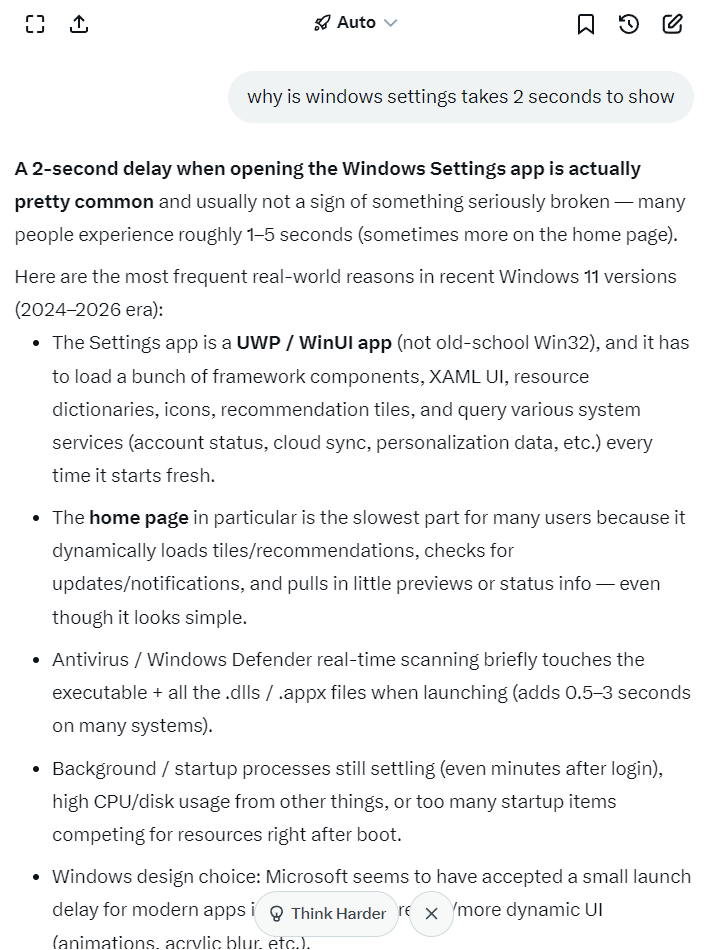

fuck Microsoft Windows

- why does windows settings take 2 seconds to show

- https://x.com/i/grok/share/84b74dd96982461eaa94909c53c8a9e7

- which is more random, a number from a pseudo random function, or md5 applied to it.

- https://x.com/i/grok/share/240271cca39c4daa836bf03f8aaf69e5

- which is more random, a number from a non-cryptographic pseudo random function, or md5 applied to current date time and last keystrokes and process id, machine id etc.

- https://x.com/i/grok/share/9bd52b296ef9455886ac766f7ca8b361
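A small Python sketch of the underlying point (my own illustration, not a summary of the linked grok answers): hashing cannot add randomness. If the input is predictable, the MD5 of it is just as predictable. The helper name `prng_then_md5` is hypothetical; `random`, `hashlib` and `secrets` are standard library modules.

```python
import hashlib
import random
import secrets

def prng_then_md5(seed: int) -> str:
    """Draw 64 bits from a seeded non-cryptographic PRNG, then MD5 them."""
    rng = random.Random(seed)              # Mersenne Twister: fast, not cryptographic
    n = rng.getrandbits(64)
    return hashlib.md5(n.to_bytes(8, "big")).hexdigest()

# Same seed in, same hash out: MD5 scrambles the bits but adds no entropy.
print(prng_then_md5(42) == prng_then_md5(42))   # True

# Hashing time + pid + keystrokes is only as unpredictable as those inputs.
# For security purposes, use the OS cryptographic generator instead.
token = secrets.token_hex(16)   # 16 random bytes as 32 hex chars
print(token)
```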

- sudden revelation.

- snake_case is superior to camelCase.

- this is true in general.

- and in general, CAPITAL aka UPPERCASE, BIGCASE, should be banned.

- they are an abomination from history.

- also, recently, i started to adopt the british

- “Title letter casing convention, of just first word like a sentence.”

- instead of the american

- “Title Convention Letter Casing Each Word Unless the Word is 3 or Less Letters.”

On the Argument of CamelCase vs Snake_Case with Respect to Compactness.

- a bit complicated.

- first, on the argument of ease of typing the character.

- from a purity standpoint, how easy a character is to type should have no influence, because ease of typing is a keyboard layout and input system issue. we are stuck with shit qwerty, but layouts can be redesigned.

- but on the other hand, for practical considerations, how easy a char is to type has a huge influence. it is in part why the hyphen became more popular than the lowline _.

- overall, whether it should be considered is, i think, undecided.

- it depends on whether you think we stay with qwerty and the traditional ibm pc keyboard form, or think of what's best for the future, e.g. today's many ergo batman keyboards with thumb keys.

- about camelCase being more compact: right.

- i think that's a point.

- because often the meaning of a var name is not very significant; we just want some short unique symbol.

- For example, the i in a for-loop, or the x in a lambda.

- or some longer name that's used only twice, but for which it's very difficult to come up with terms describing what the variable logically represents.

- so camelCase being short and compact is, i think, an argument for it over snake_case, because snake_case_names force you to interpret a meaning in the variable name.

- but overall, if we consider that in English and most western alphabetic languages, capital letters are a historical happenstance, annoying rather than useful, and super confusing, then we might do away with the argument for camelCase.
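To put a number on the compactness argument, here is a minimal Python sketch converting between the two styles. The helper names are hypothetical, and the converter ignores acronyms (e.g. `getJSONValue` would become `get_j_s_o_n_value`). camelCase saves exactly one char per word boundary, the underscore.

```python
import re

def snake_to_camel(name: str) -> str:
    """Convert snake_case to camelCase, e.g. 'file_path_max' -> 'filePathMax'."""
    first, *rest = name.split("_")
    return first + "".join(w.capitalize() for w in rest)

def camel_to_snake(name: str) -> str:
    """Convert camelCase to snake_case, e.g. 'filePathMax' -> 'file_path_max'."""
    # insert "_" before every capital letter except at the very start
    return re.sub(r"(?<!^)(?=[A-Z])", "_", name).lower()

for snake in ["i", "user_id", "file_path_max", "get_json_value"]:
    camel = snake_to_camel(snake)
    saved = len(snake) - len(camel)   # one char saved per word boundary
    print(f"{snake:16} {camel:14} saved {saved}")
```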

- on Microsoft Windows 11, in settings, why does it take a few seconds to show up

- on Microsoft Windows 11, in settings, what's the name e.g. ms-7c51 that shows after my computer name

2022-08-13 removed google ads from xahlee.info

```html
<script data-ad-client="ca-pub-5125343095650532" async src="https://pagead2.googlesyndication.com/pagead/js/adsbygoogle.js"></script>
```

the hackability of a lang is not the same as bad mistakes.

js is the worst lang by an order of magnitude, because:

- Designed for non-pro coders (aka html writers, called web monkeys back then).

- Designed and written in ten days. (typeof null being "object" is the best example here.)

- Designed based on scheme lisp and self, but forced to look and behave like java. (major fuckup)

- lots of influence from the perl scam, e.g. the sparse array situation.

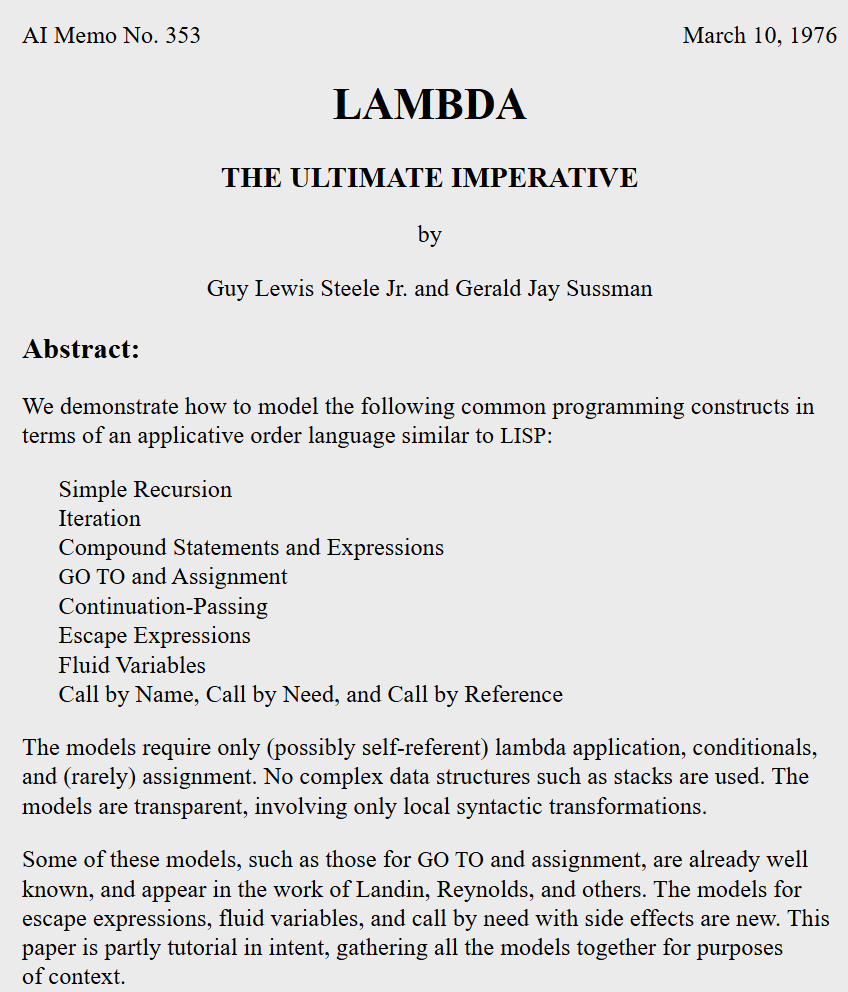

- LAMBDA THE ULTIMATE IMPERATIVE

- by Guy Lewis Steele Jr. and Gerald Jay Sussman

- 1976-03

Abstract: We demonstrate how to model the following common programming constructs in terms of an applicative order language similar to LISP:

- Simple Recursion

- Iteration

- Compound Statements and Expressions

- GO TO and Assignment

- Continuation-Passing

- Escape Expressions

- Fluid Variables

- Call by Name, Call by Need, and Call by Reference

- On understanding types, data abstraction, and polymorphism

- by Luca Cardelli, Peter Wegner

- December 1985

- Fundamental Concepts in Programming Languages

- by Christopher Strachey

- 1967

All these names suck. hash table, associative array, map, dictionary.

hash table → sucks because it's incomprehensible, and hash refers to an implementation, not what the thing is.

associative array → is idiotic, because it gets confused with the math concept of associativity. Also, associative here means associating a value with a key, which is an idiotic perspective. You might as well call it relational array, because it creates a relation of values with keys. Better to call it keyed array, or key-indexed array.

map → is not very good, because it gets confused with the function “map”, and also with the common english meaning of map, like street map. (a street map is, in math terms, a projection, which is what a math map is, which also just means a function.) we might fix it by calling it “map data structure”, but that's still not good, because here map is confusable with the verb, again, just like the function named map.

Dictionary → this is much better, as it is analogous to a dictionary. Basically, you look up an entry, for its value. The bad part is confusion with an actual dictionary, as in the Webster English dictionary.

keyed list, keyed collection → i think this is the best. because it is descriptive of exactly what it is.
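The point that hash is an implementation detail, not what the thing is, can be shown in code. Below, a hypothetical `SortedKeyedList` offers the same keyed lookup as Python's built-in `dict`, but stores keys in a sorted list and finds them by binary search (`bisect`) instead of hashing. A sketch only; insertion here is O(n).

```python
from bisect import bisect_left

class SortedKeyedList:
    """Hypothetical keyed collection: two parallel sorted lists, no hashing."""

    def __init__(self):
        self._keys = []
        self._vals = []

    def _find(self, key):
        """Binary search: index where key is, or where it would be inserted."""
        return bisect_left(self._keys, key)

    def __setitem__(self, key, val):
        i = self._find(key)
        if i < len(self._keys) and self._keys[i] == key:
            self._vals[i] = val           # existing key: overwrite
        else:
            self._keys.insert(i, key)     # new key: keep keys sorted
            self._vals.insert(i, val)

    def __getitem__(self, key):
        i = self._find(key)
        if i < len(self._keys) and self._keys[i] == key:
            return self._vals[i]
        raise KeyError(key)

def demo(coll):
    """The same keyed-lookup code works on either implementation."""
    coll["cat"] = 1
    coll["dog"] = 2
    coll["cat"] = 3
    return coll["cat"], coll["dog"]

print(demo({}))                 # (3, 2)  built-in dict, hash-based
print(demo(SortedKeyedList()))  # (3, 2)  sorted lists, no hashing
```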

- comp lang: Hack of Bitmask as Boolean Parameters (2007)

- comp lang: the Hack of C Printf Format String

- comp lang: Bit Operators Idiocy

- comp lang: Comment Syntax

- comp lang: Should Array Index Start at 0 or 1?

- comp lang: Should Map Function Specify Order

- comp lang: Iterator, Enumerator, Abstraction Went Wrong (2016)

- comp lang: LISP Syntax Problem of Chaining Functions

- comp lang: C Cpp Java Coders Don't Know Scientific Programing Languages (2015)

- comp lang: Interning String, Symbol (2025)

- comp lang: is the Map Function Functional Programing in Syntax or Semantics

- comp lang: Why I Hate Exceptions

- obsession in writing detail.

- which phrase is more popular in programing, iterate thru list or loop thru list

- https://x.com/i/grok/share/5KItRSH8c8ro7NxKgMm4j9AVh

- can Lean be used as a general purpose programing language like fsharp?

- sadly, no.

- https://x.com/i/grok/share/BGZ8AnmNDTGtYvkbjUNqnZmPq

- compare popularity, Agda Coq idris fstar lean

- https://x.com/i/grok/share/vCzLHOWXqsPUFNShQjHPgZEDR

- lazy eval should be banned. eg haskell, python iterator, JavaScript iterable.

- what are the problems of lazy eval in programming languages.

- https://x.com/i/grok/share/ovhTFBnYHH5dFJYPNSunQHa2p
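A small Python illustration of two common complaints about lazy evaluation: when the computation actually happens depends on when you consume the result, and a Python generator can be consumed only once. A sketch; the variable names are arbitrary.

```python
nums = [1, 2, 3]
lazy = (n * n for n in nums)   # generator: nothing is computed yet
eager = [n * n for n in nums]  # list: computed right now

nums.append(4)                 # mutate the source afterwards

first = list(lazy)             # evaluation happens only now, and sees the 4
second = list(lazy)            # a generator can be consumed only once

print(eager)   # [1, 4, 9]
print(first)   # [1, 4, 9, 16]
print(second)  # []
```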

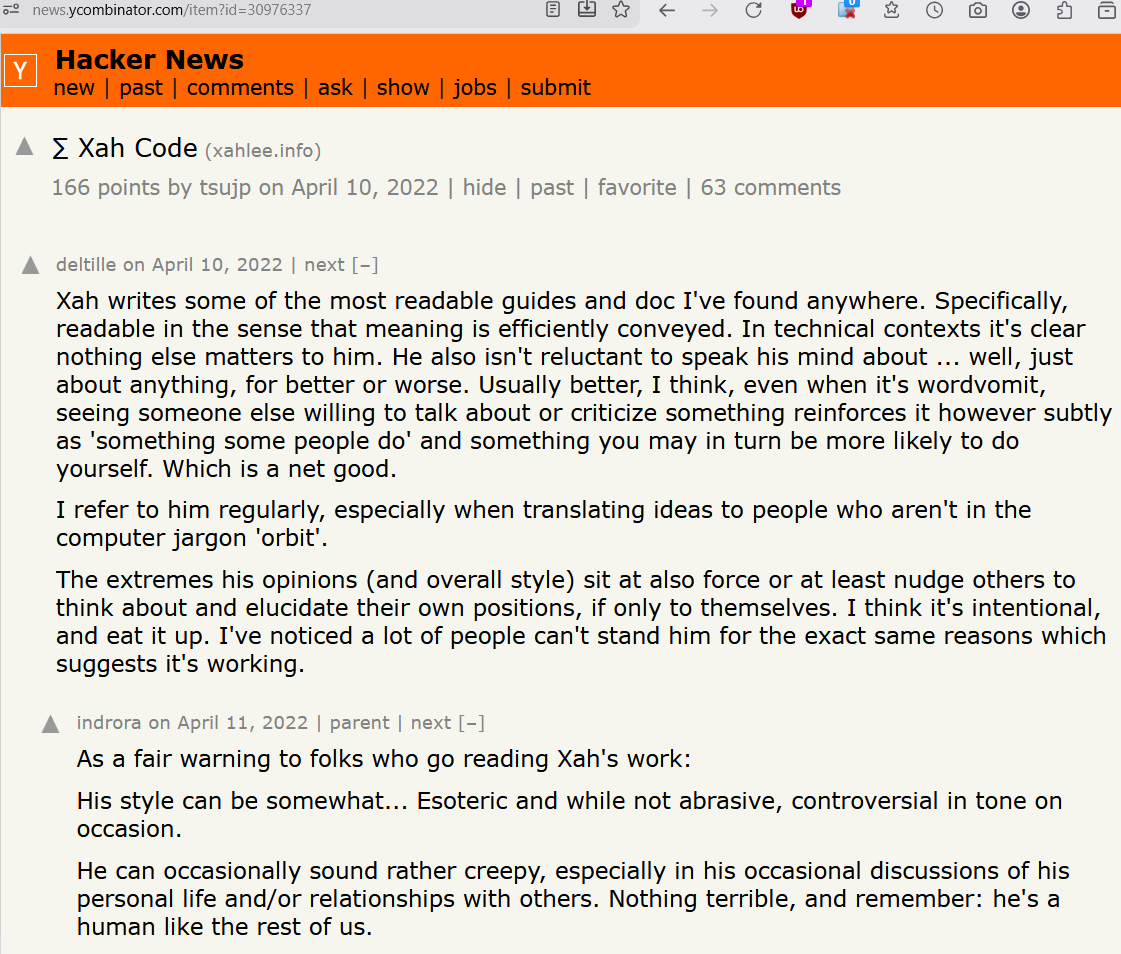

Hackernews on Xah Lee. 2022

- hackernews on me. 2022

- i got some fairly good vibes.

- hacker news on xah lee, 2022-04-10

- https://news.ycombinator.com/item?id=30976337

- how long does it take to solve each advent of code problem

- https://x.com/i/grok/share/ToX4nVCiDClUk0vR8j80VMiIs

- basically, for average coders not geared for speed coding competitions:

- 1 to 2 hours for easy early-day problems.

- 2 to 4 hours for mid difficulty problems.

- more than 4 hours, or days, for the last days' problems.

when you have a compiler that claims to compile JavaScript ES2015 code to JavaScript ES5, but does not support some objects in the ES2015 spec, such as Map, Set, Symbol, then your compiler really isn't complete, is it?

Fuck the JavaScript coders and industry idiots. Brainlessly sucking up to whatever the corps tell them.

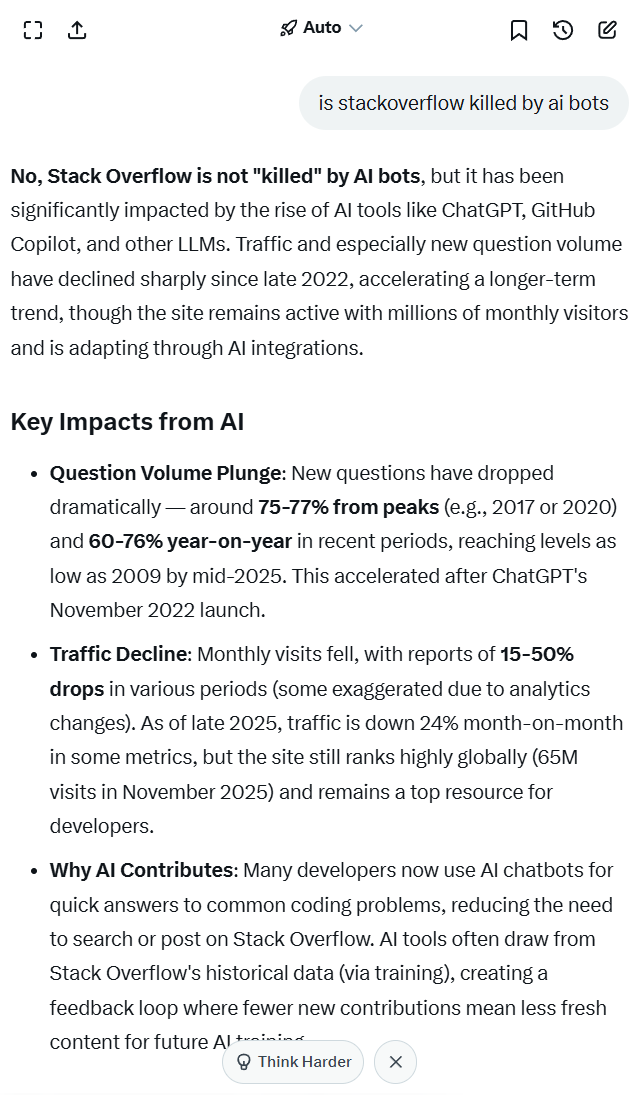

stackoverflow, last gasp

- is stackoverflow killed by ai bots

- https://x.com/i/grok/share/nJEa0VReiLtozZFIP35dXeF6m

many programing languages allow only unicode letter characters in variable or function names (including go, java, JavaScript, python, perl, ocaml, haskell, rust, nim).

what is a unicode letter? basically: no emoji, no math symbols, no dingbats, no punctuation. only characters that are in an alphabet, or chinese characters.

this is a major problem, because it means you cannot use math operators such as sum ∑ or circle plus ⊕ as function names.
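Python makes this easy to check. Its identifier rule follows the Unicode XID_Start/XID_Continue properties (PEP 3131), `str.isidentifier()` applies that rule, and `unicodedata.category()` shows why the math symbols are rejected: they are category `Sm` (math symbol), not a letter category. A quick sketch:

```python
import unicodedata

for name in ["sigma", "σ", "变量", "∑", "⊕", "∞", "x°"]:
    cats = [unicodedata.category(ch) for ch in name]
    print(f"{name!r:10} isidentifier={name.isidentifier()!s:5} categories={cats}")
```

Greek σ (Ll) and Chinese 变量 (Lo) pass; ∑, ⊕, ∞ (Sm) and the degree sign ° (So) fail.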

- what's the major diff of java and csharp

- https://x.com/i/grok/share/TsVpoE1snc1oNILDRJ4dyrjN0

- are there big 3d games written in csharp

- https://x.com/i/grok/share/6h4a9uRR7G1eMzw2cfoePAmfP

- list and describe programing languages that potentially replace c and cpp

- https://x.com/i/grok/share/yo87uvHl8H6yqAs0MROJJGh46

now that text recognition from images is part of windows 11, i have no use for powertoys anymore.

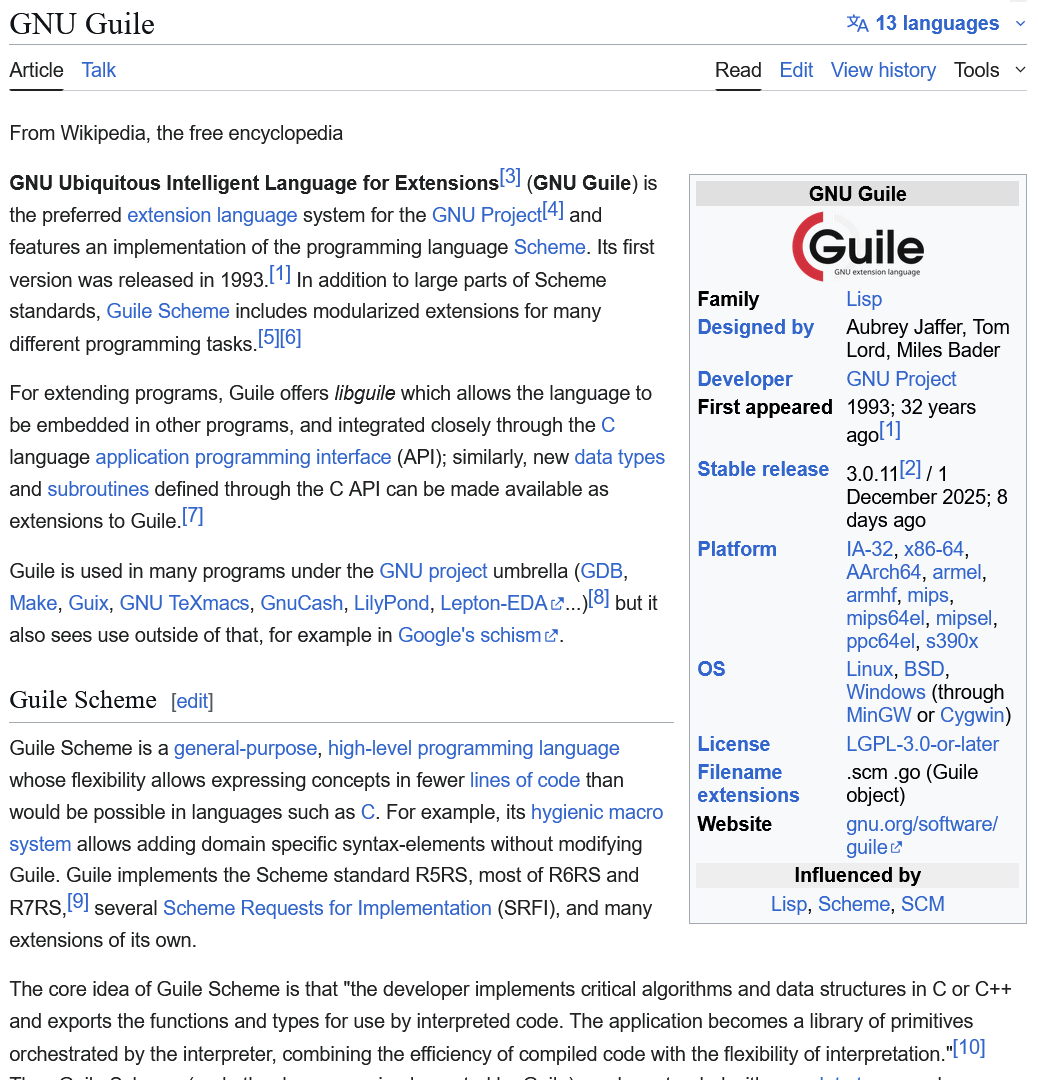

The Surviving Scheme is Now Guile Scheme Lisp. Replacing Racket Scheme Lisp.

- this marks one chapter in lisp history.

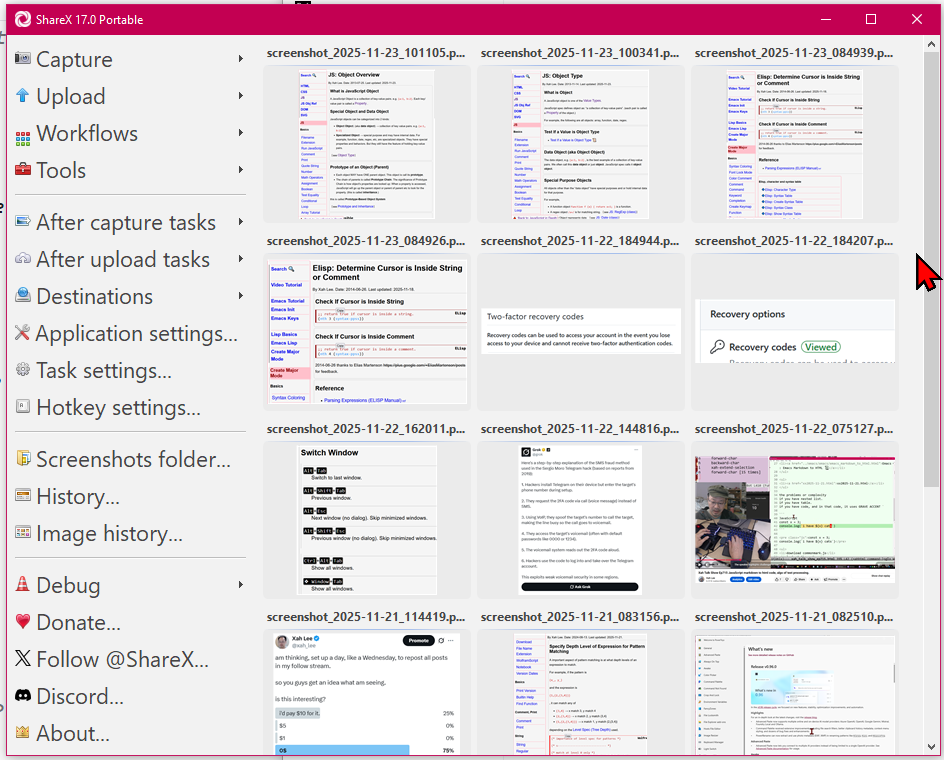

- how to stop sharex from saving any thumbnail or screenshot history at all?

- i disabled history, but it still shows thumbnails.

currently using my biological intelligence to understand 2fa, passkeys, authenticator apps, hardware security keys. very complex stuff.
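For the authenticator app part: the core of most of them is TOTP (RFC 6238), a shared secret plus the current 30-second time step, run through HMAC. A minimal Python sketch of the HMAC-SHA1 variant; the function name `totp` is hypothetical, and real apps usually receive the secret as base32 inside a QR code (decode it with `base64.b32decode` first).

```python
import hashlib
import hmac
import struct
import time

def totp(secret: bytes, for_time: float, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238), HMAC-SHA1 variant."""
    counter = int(for_time // step)                     # which 30-second window we are in
    msg = struct.pack(">Q", counter)                    # counter as 8-byte big-endian
    mac = hmac.new(secret, msg, hashlib.sha1).digest()  # 20-byte HMAC
    offset = mac[-1] & 0x0F                             # "dynamic truncation" (RFC 4226)
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

secret = b"12345678901234567890"        # RFC 6238 test key, not a real secret
print(totp(secret, 59, digits=8))       # 94287082
print(totp(secret, time.time()))        # the code an app would show right now
```

Because phone and server compute the same function of the same secret and clock, nothing secret travels at login; only the short code does.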

- what language is twitter written in

- https://x.com/i/grok/share/ov8Qqy16v1qpBBrgbhez0rSpQ

Usually, in programing languages or any computer related stuff, if you ask why something is the way it is, the vast majority of experts are unable to answer. they don't know.

There are people who know, but they are very rare. Typically they are people who were active at the time a certain design or convention was formed, and were active participants.

This applies to programing language, protocols, user interface, keyboards, music notation, banking, check, credit card, etc.

- what does this do exactly. Enterprise Security Management tool

- https://x.com/i/grok/share/aaixTiScKwHOlCBHfIX4ilZDG

- sys admin in a lunchbox american style

today, phones and tablets almost never shut down or restart. how does the software manage memory leaks or growing system resource usage?

- Xah Talk Show 2025-11-04 Ep709 Wolfram Language Coding and Tutorial, List Operation. Emacs Workflow.

- Wolfram: List Operations

- Wolfram: List. Join, Union, Intersection, Difference

- Wolfram: List. Table (Create List, Nested Array)

- Wolfram: Range (Create Flat List)

- Wolfram: List. Get Element by Index (Part, Take, Drop, First, Last, Most, Rest)

- Wolfram: List. Add Element

- Wolfram: List. Delete Element

- Wolfram: List. Select (Filter)

- Wolfram: List. Change Element

- Wolfram: Pattern Syntax

- Wolfram: List. Level (Depth)

updates

- Computer Science. Unix and Linux (and macOS and Microsoft Windows too).

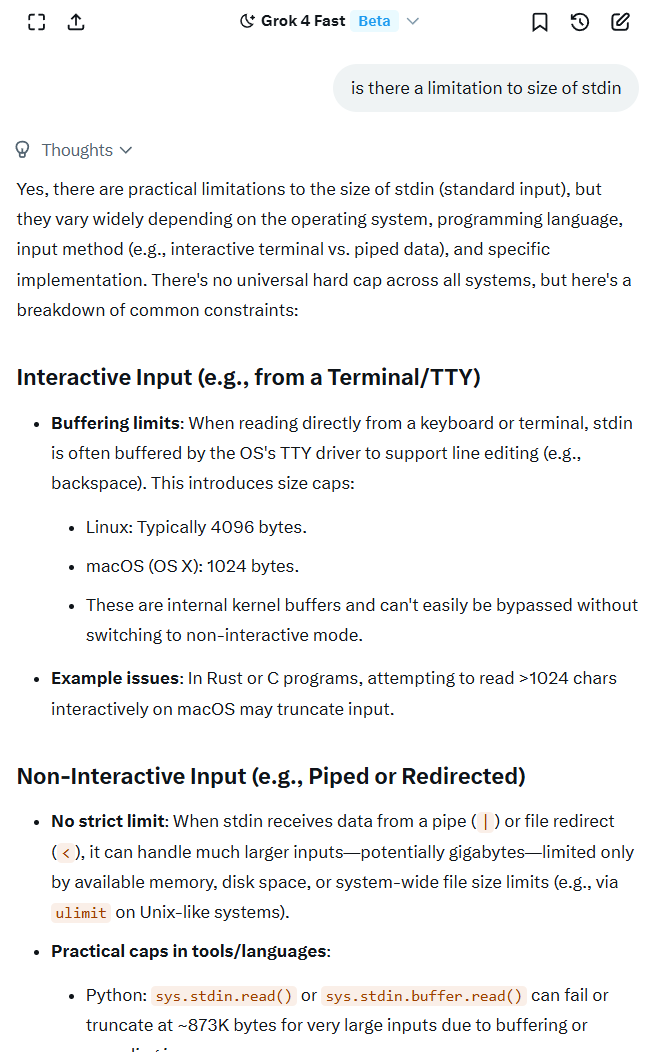

- is there a limit to the size of STDIN?

- yes, in interactive use in a shell.

- no, when piped or done by a program.

- this is important, for example, when you code emacs lisp and want to call an external command.

- you can pass a file path as a command line argument, or pass the file content via stdin.

- e.g. say you write a format-code command.

- Reading a file is an order of magnitude slower.

- full grok answer at

- https://x.com/i/grok/share/uTzwazML2Qu5hQluw87e0PJIB
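A small Python sketch of the piped case: the parent sends 10 million chars to a child process through stdin, with no size problem. Passing the same text as a command line argument would instead run into the OS argument-length limit (ARG_MAX on Unix). From Emacs Lisp the analogous route is `call-process-region`; the helper name `count_stdin_chars` is hypothetical.

```python
import subprocess
import sys

def count_stdin_chars(text: str) -> int:
    """Pipe text to a child Python process via stdin; the child reports how many chars it got."""
    child = [sys.executable, "-c", "import sys; print(len(sys.stdin.read()))"]
    result = subprocess.run(child, input=text, capture_output=True, text=True, check=True)
    return int(result.stdout)

big = "x" * 10_000_000   # 10 million chars, beyond typical command line limits
print(count_stdin_chars(big))  # 10000000
```

`subprocess.run` with `input=` writes stdin and reads stdout concurrently, so a large payload does not deadlock on a full pipe.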

- spectacular answer by grok ai on advanced Wolfram language question.

- in Wolfram language, what's the difference between value and definition of a symbol

- https://x.com/i/grok/share/B4SE8biJNpqcGnrfjamrltv0e

- What features make a programing language slow, in a way that makes it impossible to write a fast compiler regardless of effort?

- I still do not understand.

- How is it possible that lua is faster than python?

- It turns out, because lua is a tiny language. A tiny lang doesn't have lots of constructs, so it makes sense.

- How is it possible that julia is faster than fsharp?

- Julia is dynamically typed, fsharp is statically typed.

- grok answers

- https://x.com/i/grok/share/R9Zn0fYwwV6lIiojteRQr0hfs

- but i don't fully understand.

minor updates and repost

- Python: Lambda

- The fate of reduce in Python 3000 (2005)

- Python, Lambda, Guido: is Language Design Just Solving Puzzles? (2006)

- The Fate of Lambda in Python 3000 and Scheme v300 (2010)

- Why Python Lambda is Broken and Cannot be Fixed (2013)

- Xah Talk Show 2021-05-28 Why Python Lambda is Broken and Can't be Fixed

- the worsening of spam email, in 2006.

- in the past 30 years, spam got increasingly worse, because the tech nerds thought they were gonna fight it with tech.

- you got the spy vs spy problem, aka tech escalation.

- one of the idiots in the early days was paul graham, who became a spam-fighting idol for his heroic BAYESIAN spam filter, in 2002.

- how to fight spam n scam properly?

- it's not tech, it's human.

- like in the old days, if someone steals, off with his hands.

- not corps, not google, not facebook, not microsoft etc.

- always focus on the person. someone, did the wrong.

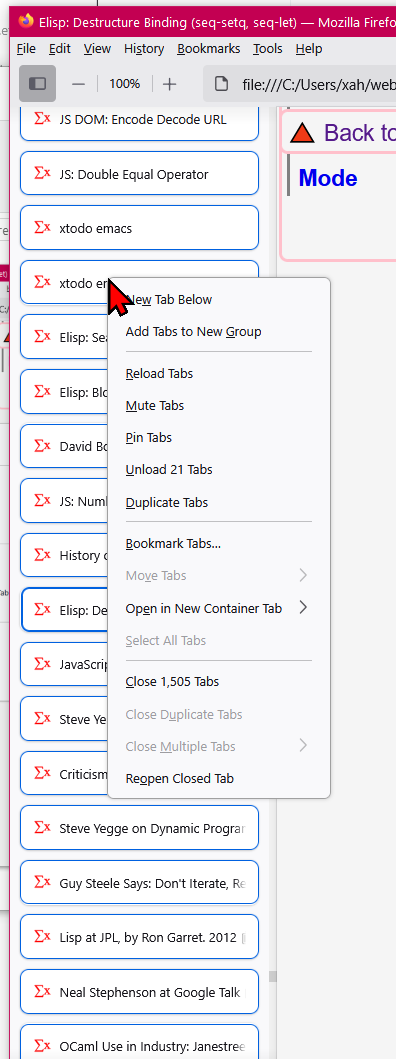

1505 tabs in Firefox, all xah lee site articles.

did a massive renaming of article titles in the past week, adding a year, so one can get a sense of what year the article was written, for historical context.

perhaps a thousand done, maybe 5 thousand to go. lol.

this is for pages that are essays (opinions, blog, commentary, etc.), not suitable for tutorial pages, e.g. python tutorial, math subjects.

to get the count in firefox, right click a tab, select all tabs, then right click again; the close item shows the count. i asked grok ai.

firefox should have a count at the top.

- ASCII Characters

- Unicode: ASCII Control Characters ␀

- Why You Need to Understand ASCII (2024)

- Programing Language: ASCII Char Jam vs Unicode (2011)

- Emacs Lisp Doc String Curly Quote Controversy (2015)

- Linux: Terminal Control Sequence Keys

- Stack Overflow offline page 2011-08-06

- Emacs: Newline Convention

- Emacs Key Notations Explained (/r, ^M, C-m, RET, <return>, M-, meta)

- The Measure of a Programing Language (2000)

- What is Expressiveness in Programing Languages (2005)

- What Languages to Hate (2002)

- Why I Hate the C Language (2012)

- Which Programing Language is Easier to Learn (2019)

- Which Programing Language is Best for Text Processing (2025)

- Computer Science Jargons Harms the Popularity of Programing Languages (2007)

- Programing Languages Popularity, 1997 to 2006

- Python Surpassed Perl In Popularity (2008)

- Programing Language Websites Popularity (2008)

- Programing Language: Will Lisp Ever be Popular (2008)

- What Programing Language Does Google Facebook Twitter Paypal Wikipedia Etc Use? (2011)

- Programing Language Popularity 2017

- Clojure's Popularity — a Tango with Java (2016)

- Why is Java Popular (2022)

- Why is Python Popular (2022)

- Why You Should Avoid the Jargon Tail Recursion (2002)

- JavaScript Tail Recursion Optimization (2023)

- Does Tail Recursion Optimization Necessitate Mutable Variable (Bob Martin) (2025)

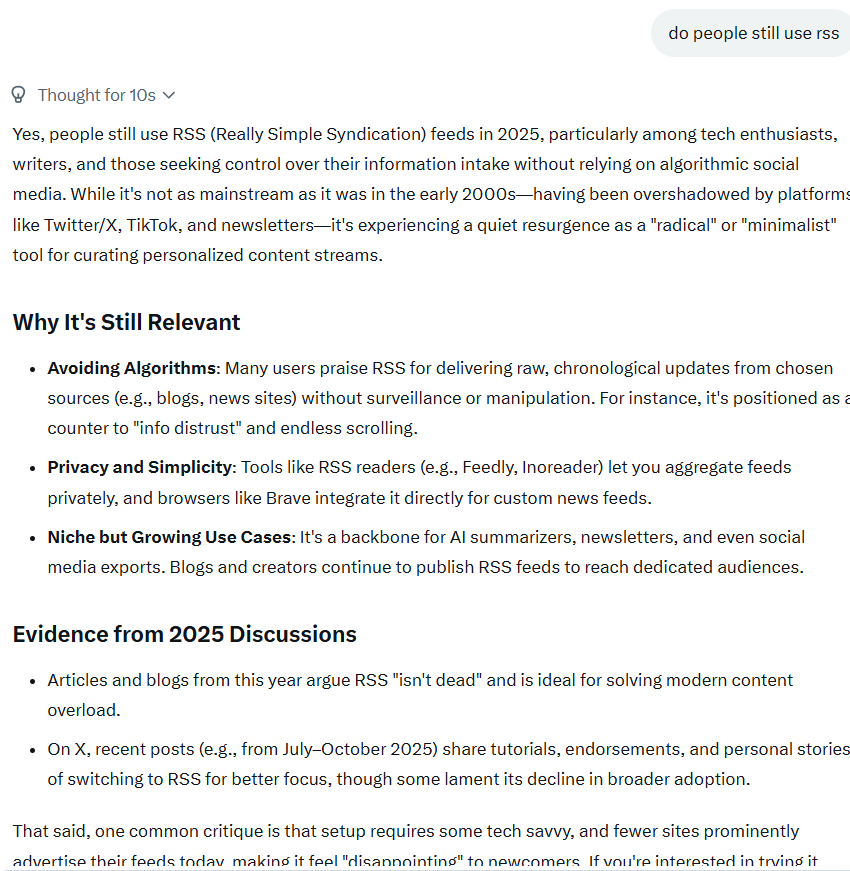

- do people still use RSS

- https://x.com/i/grok/share/NOOdVvPpd0xeHe2InzZDr3P8v

- Closure in haskell does not have state

- What is Closure in Programing Language (2012)

- computer science.

- parsing expression grammar (aka PEG) is the best thing.

- by the way, if you don't know, regular expressions by definition parse linear text; they cannot be used for arbitrarily nested syntax.

- by the way, emacs lisp now has PEG builtin. i am studying it, tutorial coming soon.

- and, we now have chatbot query porn.

- it means you just keep asking ai questions for several hours a day.

- makes you a phd in 4 years.

- any question, about coding, math, sciences, or your life questions, ask away.

- that is why it's called query porn.

- here's part of my query porn today, on parsing expression grammar.

- https://x.com/i/grok/share/P64chm6cQu5e3kj3Wx4fZLnK9
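To make the PEG idea concrete, a minimal hand-written Python sketch of a tiny PEG (not the Emacs Lisp PEG library's API) for nested lists like `(a (b c))`, which a regular expression cannot match to arbitrary depth. Each rule is a function that returns `(value, next_index)` on success or `None` on failure, and `Item <- List / Atom` is PEG ordered choice. The grammar and function names are hypothetical.

```python
import re

# Hypothetical PEG grammar for nested lists:
#   List <- '(' Sp (Item Sp)* ')'
#   Item <- List / Atom          ('/' is PEG ordered choice)
#   Atom <- [a-z0-9]+
#   Sp   <- ' '*
ATOM = re.compile(r"[a-z0-9]+")

def skip_spaces(s, i):
    """Sp <- ' '*"""
    while i < len(s) and s[i] == " ":
        i += 1
    return i

def parse_atom(s, i):
    """Atom <- [a-z0-9]+  (a regex is fine for this flat, non-nested piece)"""
    m = ATOM.match(s, i)
    return (m.group(), m.end()) if m else None

def parse_item(s, i):
    """Item <- List / Atom: try List first; only if it fails, try Atom."""
    return parse_list(s, i) or parse_atom(s, i)

def parse_list(s, i):
    """List <- '(' Sp (Item Sp)* ')'"""
    if i >= len(s) or s[i] != "(":
        return None
    i = skip_spaces(s, i + 1)
    items = []
    while (r := parse_item(s, i)) is not None:  # (Item Sp)* : greedy repetition
        value, i = r
        items.append(value)
        i = skip_spaces(s, i)
    if i < len(s) and s[i] == ")":
        return items, i + 1
    return None

def parse(s):
    r = parse_list(s, 0)
    if r is None or r[1] != len(s):
        raise SyntaxError(f"cannot parse {s!r}")
    return r[0]

print(parse("(a (b c) ((d)) e1)"))  # ['a', ['b', 'c'], [['d']], 'e1']
```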

updated

- how to write a vscode extension to have vim like keybinding

- https://x.com/i/grok/share/iaL2GUdjqsZtrboa1abEmBTHi

updated

- Python: Quote String

- Python: Triple Quote String

- Python: String Escape Sequence

- Python: Unicode Escape Sequence

- Python: String Prefix Character (u f r b)

- Python: Raw String (r-prefix)

- Python: f-String (Format, Template)

- Python: Print

- Python: Print Without Newline

- Python: Convert to String (repr, str)

- Python: Format String (Convert to String)

- comp lang: is the Map Function Functional Programing in Syntax or Semantics

- comp lang: Should Map Function Specify Order

- What is Function, What is Operator. (2010)

- Programing Language: Why You Need Operators

- Programing Language: What is the Definition of Operator

- Programing Language: Necessity of Operator Overload

- Programing Language: Unicode math symbols in function name, variable, operator

- Logical Operators, Truth Table, Unicode (2010)

- which language can define custom operators using unicode characters

- https://x.com/i/grok/share/zSH6rV94PDS6rW0paDRW0amPx

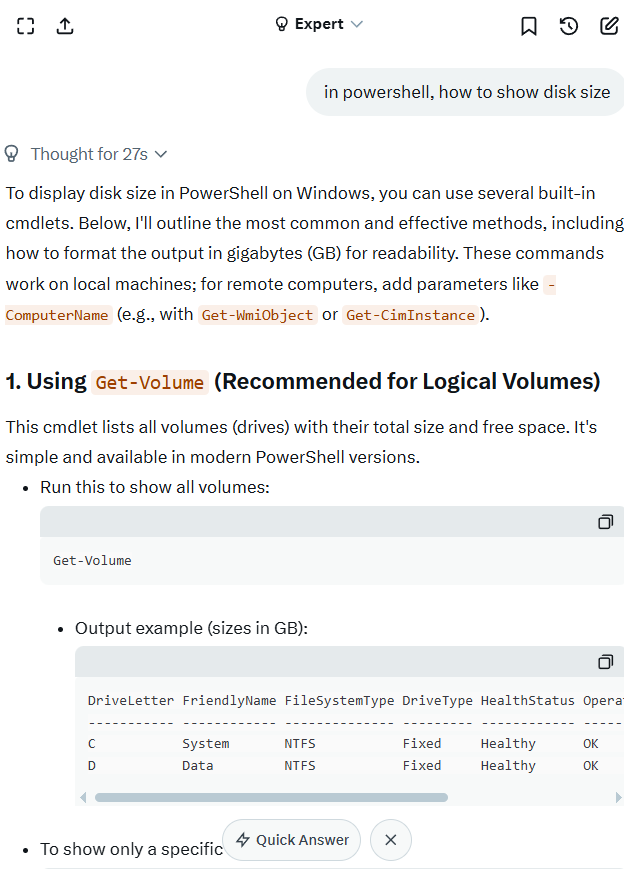

- in powershell, how to show disk size

- https://x.com/i/grok/share/hF6eCnFb37ZsPXBFUwNkxuxbR
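The same in Python, as a sketch: `shutil.disk_usage` (standard library) reports total, used and free bytes for the disk containing a path. The helper name `disk_size_gb` is hypothetical; on Windows pass e.g. `"C:\\"`.

```python
import shutil

def disk_size_gb(path="."):
    """Total, used and free space of the disk holding path, in GB (10**9 bytes)."""
    usage = shutil.disk_usage(path)   # named tuple: total, used, free (bytes)
    return tuple(round(n / 1e9, 1) for n in (usage.total, usage.used, usage.free))

total, used, free = disk_size_gb()
print(f"total {total} GB, used {used} GB, free {free} GB")
```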

- For coders. Interesting algorithm problem on text processing.

- Text processing, turns out is a big chapter in algorithm, though most coders don't realize it.

- Used in all text editors, all string functions, search string, regular expression regex.

- The data structure to store text itself is complex, and different text editors use different structures.

- Link in thread.
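One classic such structure is the gap buffer, which Emacs uses: the text lives in one array with an empty gap at the cursor, so typing at the cursor is cheap, and moving the cursor shifts characters across the gap. A minimal Python sketch; the class and method names are hypothetical, and real editors use more elaborate variants (VS Code, for instance, uses a piece tree instead).

```python
class GapBuffer:
    """Text stored as [text before cursor][empty gap][text after cursor]."""

    def __init__(self, text="", capacity=16):
        size = max(capacity, 2 * len(text))
        self.buf = list(text) + [None] * (size - len(text))
        self.gap_start = len(text)   # cursor position
        self.gap_end = size          # index of first char after the gap

    def __len__(self):
        return len(self.buf) - (self.gap_end - self.gap_start)

    def move_cursor(self, pos):
        """Move the gap to pos; cost is proportional to the distance moved."""
        pos = max(0, min(pos, len(self)))
        while self.gap_start > pos:  # shift chars from before the gap to after it
            self.gap_start -= 1
            self.gap_end -= 1
            self.buf[self.gap_end] = self.buf[self.gap_start]
        while self.gap_start < pos:  # shift chars from after the gap to before it
            self.buf[self.gap_start] = self.buf[self.gap_end]
            self.gap_start += 1
            self.gap_end += 1

    def insert(self, s):
        """Type s at the cursor: fill the gap, growing it when full."""
        for ch in s:
            if self.gap_start == self.gap_end:
                self._grow()
            self.buf[self.gap_start] = ch
            self.gap_start += 1

    def backspace(self, n=1):
        """Delete n chars before the cursor by widening the gap (no copying)."""
        self.gap_start = max(0, self.gap_start - n)

    def _grow(self):
        extra = max(16, len(self.buf))
        self.buf[self.gap_start:self.gap_start] = [None] * extra
        self.gap_end += extra

    def text(self):
        return "".join(self.buf[:self.gap_start] + self.buf[self.gap_end:])

buf = GapBuffer("hello world")
buf.move_cursor(5)
buf.insert(",")
print(buf.text())   # hello, world
```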

updates

For Coders. Tech, Engineers, Emacs, Unicode History, and the Remnant of Elitism in Printing Press Establishments.

Unicode math symbols. Back in 2005. Lots of problems.

Today, the problem is solved. Most code fonts have hundreds of unicode math symbols, such as arrow →. Also, font substitution tech in operating systems is mature: it'll find the char in another font. Also, in emacs, you can specify a dedicated font for symbols, emoji, or chinese.

this solves my need.

By the way i think fonts are european idiocy. I think they should all be banned. Just have one for serif, sans, and fixed-width. And ligature should be banned.

Also, there is a particular elitism in font and typesetting circles. They are like a cult, and have particular jargon, e.g. Foundry. I'm guessing it's because hundreds of years ago they were associated with the powerful establishment of the printing press, the publishing houses, writing (authors, writer's guild, style guides, the whole shebang), like what the New York Times was in past decades.

- For Coders. Engineers, Computer Scientists.

- Advanced Wolfram Language (Mathematica).

- Wolfram Language Warts. issues to watch out.

- AI news. grok 4 is out if you guys haven't heard of it.

- Also, it has a new image generation engine or feature, something like that.

- It can generate videos based on a static photograph.

- Also, there is a new AI companion, a guy named Valentine.

- on the whole AI is amazing and tremendous.

- AI is changing human society in a way we don't know exactly what.

- also, I have seen a demo where the new GROK AI can watch real life video from your phone camera and tell you what it is seeing, such as identifying an animal.

- This is truly incredible in human history.

- 🆕 Why Python Uses 0-Based Indexing? By Guido Van Rossum (2013)

- 🆕 Edsger Dijkstra on Array Index (1982)

- learn powershell in a day. list empty dir.

- PowerShell: list empty dir 📜

- learn linux commands in a week. list empty dir or files.

- Linux: Walk Dir. find, xargs
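For comparison, a Python sketch of the same task with `os.walk`: directories that have no entries, and files of size 0. The function names are hypothetical.

```python
import os

def empty_dirs(root):
    """Yield directories under root (including root) that have no entries."""
    for dirpath, dirnames, filenames in os.walk(root):
        if not dirnames and not filenames:
            yield dirpath

def empty_files(root):
    """Yield files under root whose size is 0 bytes."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.getsize(path) == 0:
                yield path
```

Usage: `for d in empty_dirs("."): print(d)`.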

- For Tech Nerds.

- Python, one of the worst programing languages, created by the also-ran and cocky rigid lowest-iq nerd guido von rossum.

- But it is popular, due to a deep Google push.

- learn python in a week.

- Python Tutorial

- like and share.

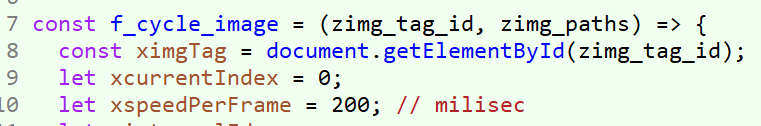

JavaScript toy, for beginning coders.

Mac: Show Hidden Files in Finder

⌘ command+Shift+.

```python
print(list(enumerate(["a", "b", "c"])))  # [(0, 'a'), (1, 'b'), (2, 'c')]
```

discovered that you can split windows in Microsoft Windows Terminal.

- lol. what a joy.

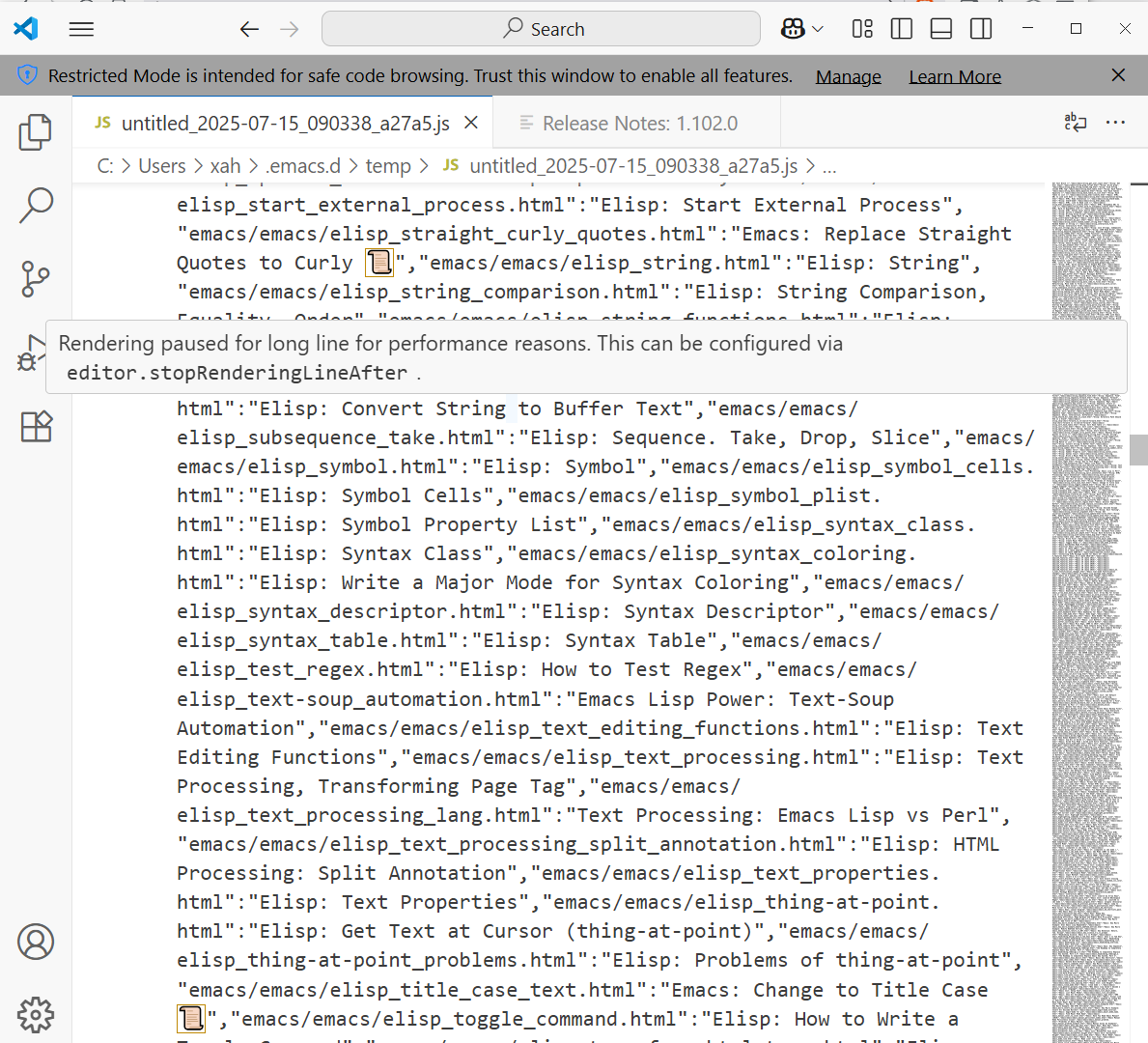

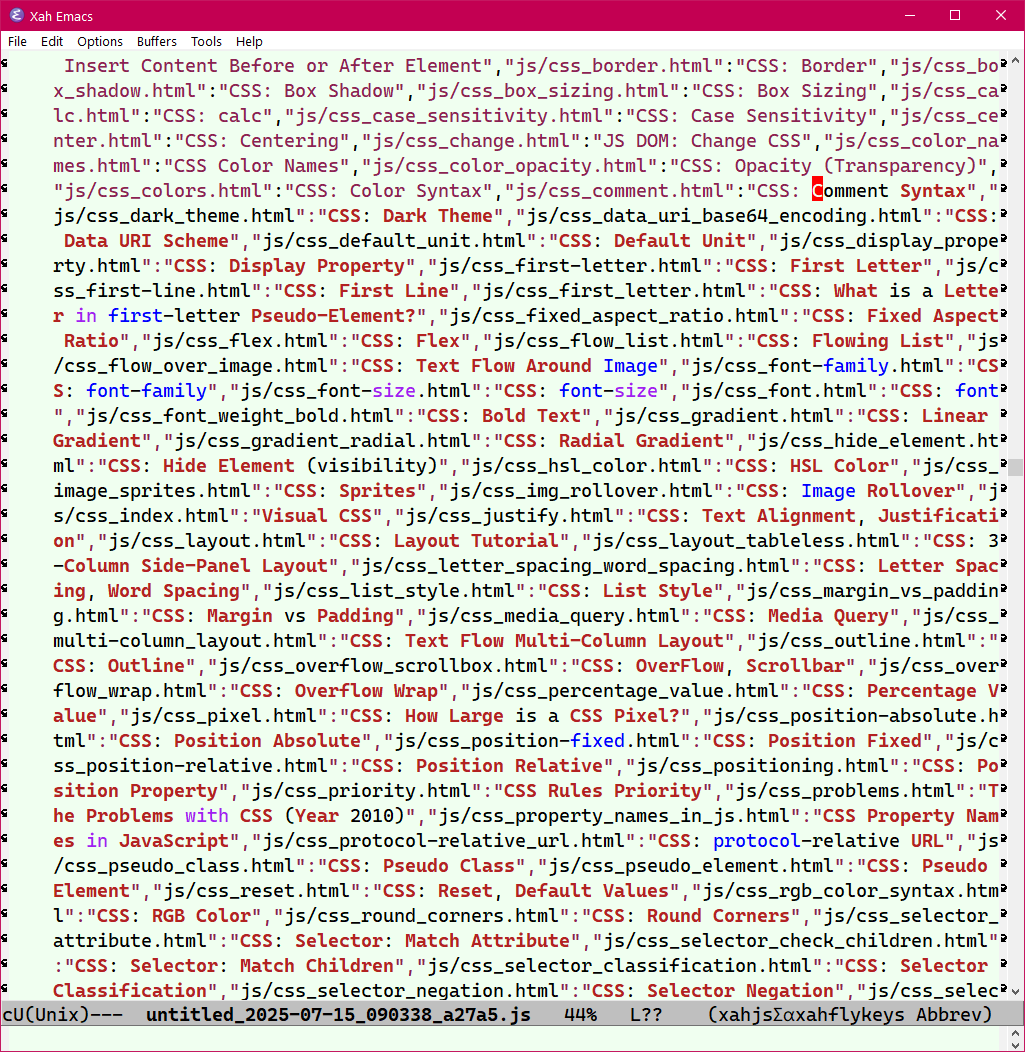

- vscode has the same problem emacs does, on long lines.

long ass lines. 551k chars.

- programing language design.

- philosophy of naming of functions

- https://youtu.be/Q5Bc7xEKdwQ?t=253

- PowerShell, Wolfram language, java.

- For coders. List Files (Walk Directory). Programing Language Comparison.

- Wolfram language is one single line.

- Bash and PowerShell are 1 line too, but less powerful.

- Python is 4 lines.

- Emacs lisp is 1 line, complicated.

- Golang is 20 lines, super complicated.
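For comparison, a Python version of walking a directory might look like this. The linked comparison page may use different code; list_files is a name chosen for this sketch.

```python
import os

def list_files(root):
    """Return the full path of every file under root, recursively."""
    # os.walk yields (dirpath, dirnames, filenames) once per directory
    return [os.path.join(dirpath, name)
            for dirpath, _dirnames, filenames in os.walk(root)
            for name in filenames]

if __name__ == "__main__":
    for path in list_files("."):
        print(path)
```

pathlib.Path(root).rglob("*") is a shorter alternative, but it yields directories as well as files.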

updated

- Do you miss being called the greatest troll?

- Richard Stallman did damage to Emacs, Guido van Rossum is the idiot of Python, and DHH the skum of Ruby on Rails.

- https://youtu.be/INmTDdjkjdE?t=2594

- amazing.

- discovered toon shading.

- that is, rendering shades and shadows in a cartoon style.

- technically, it simplifies the smooth gradient of shading into just a few flat colors.
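A minimal sketch of the idea, assuming a single directional light and the plain Lambert diffuse term (the cosine of the angle between surface normal and light direction). The band count of 3 is an arbitrary choice, and the helper names are made up. Real toon shaders usually do this per pixel on the GPU, often with a small ramp texture instead of a formula.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def lambert(normal, light_dir):
    """Smooth diffuse intensity in 0..1: cosine of the angle between normal and light, clamped at 0."""
    n, l = normalize(normal), normalize(light_dir)
    return max(0.0, sum(a * b for a, b in zip(n, l)))

def toon(intensity, bands=3):
    """Quantize a 0..1 intensity into `bands` flat tones: 0, 1/(bands-1), ..., 1."""
    level = min(int(intensity * bands), bands - 1)
    return level / (bands - 1)

# light swings away from a surface facing +z; smooth shading fades gradually,
# toon shading jumps between a few flat tones
for angle in (0, 30, 60, 80, 100):
    rad = math.radians(angle)
    smooth = lambert((0, 0, 1), (math.sin(rad), 0, math.cos(rad)))
    print(angle, round(smooth, 2), toon(smooth))
```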

- new in 2020, Wolfram language.

- For programers, scientists, engineers.

- Changing the name, from Wolfram Language in Depth to Wolfram Language for Programers.

- Hard choice.

- “in Depth” because the tutorial gets you to understand the symbolic term rewriting system. But Wolfram language is huge. Maybe “in Depth” is too grand a name.

- “for Programers” applies, because my tutorial is for industrial coders who are not familiar with scientific programing.

- An in-depth tutorial that covers Wolfram language as a symbolic term rewriting system is rare.

- An in-depth tutorial that covers Wolfram language as a programing language is also rare.

- (typically, Wolfram language tutorials and books cover it as a practical tool for solving math equations, machine learning, etc.)

- For engineers, programers, scientists.

- massive work in progress: Wolfram language graphics system.

- it is like a CAD system, with hundreds of functions for 3D graphics.

- rendering, mesh, light source, interactive 3D, and animation.

- Wolfram: Graphics Programing. Index

- Wolfram: Graphics Primitive

- Wolfram: Graphics, Graphics3D

- Wolfram: Graphics Directive

- Wolfram: Geometric Transformation Functions

- Wolfram: Apply Transform to Graphics

- Wolfram: Compose Transformations

- Wolfram: Translate Copy Graphics

- Wolfram: Transformation Matrix

- Wolfram: GraphicsComplex

- Wolfram: Mesh Region

- Wolfram: Extract Graphics from Plot Functions

- Wolfram: Graphics Misc

- Wolfram: Animation
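The core idea behind transformation matrices and composing them, sketched in plain Python with 3×3 homogeneous matrices for 2D. Wolfram Language has this built in (e.g. RotationTransform, TranslationTransform, Composition); this sketch does not reproduce their API, and the helper names are made up for the example.

```python
import math

def matmul(a, b):
    """Multiply two 3x3 matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def rotation(theta):
    """Counterclockwise rotation about the origin by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def translation(dx, dy):
    return [[1, 0, dx], [0, 1, dy], [0, 0, 1]]

def transform_point(m, point):
    """Apply matrix m to a 2D point, written in homogeneous form (x, y, 1)."""
    v = (point[0], point[1], 1)
    return tuple(sum(m[i][k] * v[k] for k in range(3)) for i in range(2))

# matmul(A, B) means "apply B first, then A", so order matters
rotate_then_move = matmul(translation(2, 0), rotation(math.pi / 2))
move_then_rotate = matmul(rotation(math.pi / 2), translation(2, 0))

print(transform_point(rotate_then_move, (1, 0)))  # about (2, 1)
print(transform_point(move_then_rotate, (1, 0)))  # about (0, 3)
```

Using homogeneous coordinates lets translation, which is not linear, be written as a matrix, so any chain of transforms collapses into one matrix product.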

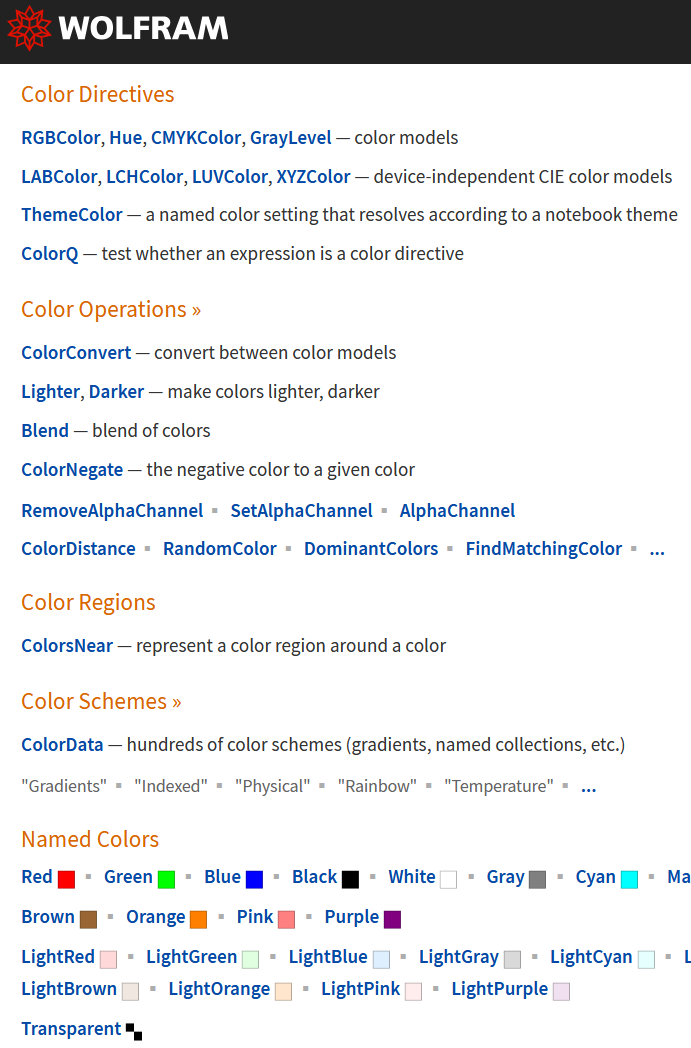

- For Coders.

- Wolfram language.

- All possible color models and schemes ever created.

- This is typical of Wolfram language.

- For every subject (math, machine learning, algorithms, 3D formats, image formats, sound formats, video formats), it supports basically all possible standards and schemes.

- By studying them in depth, you actually get an overview of human knowledge of the subject.

- It's not just learning a specific language.
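For contrast, Python's standard library module colorsys covers only a handful of color models (RGB, HSV, HLS, YIQ), with every component scaled to 0..1. A conversion round trip looks like this:

```python
import colorsys

red = (1.0, 0.0, 0.0)

# RGB to HSV: pure red has hue 0, full saturation, full value
h, s, v = colorsys.rgb_to_hsv(*red)
print(h, s, v)

# HLS orders the components hue, lightness, saturation
print(colorsys.rgb_to_hls(*red))

# converting back recovers the original color
back = colorsys.hsv_to_rgb(h, s, v)
print(back)
```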

- PowerShell append file

- https://x.com/i/grok/share/FOldXk8SYmwZIJifNoClaXM1H
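In PowerShell the usual tool for this is Add-Content. The Python equivalent is opening the file in append mode; append_line is a helper name invented for this sketch, and the file goes into a fresh temp directory so the example has no side effects.

```python
import os
import tempfile

def append_line(path, text):
    # mode "a" creates the file if it does not exist and always writes at the end, never truncating
    with open(path, "a", encoding="utf-8") as f:
        f.write(text + "\n")

path = os.path.join(tempfile.mkdtemp(), "log.txt")
append_line(path, "first")
append_line(path, "second")
with open(path, encoding="utf-8") as f:
    print(f.read())
```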

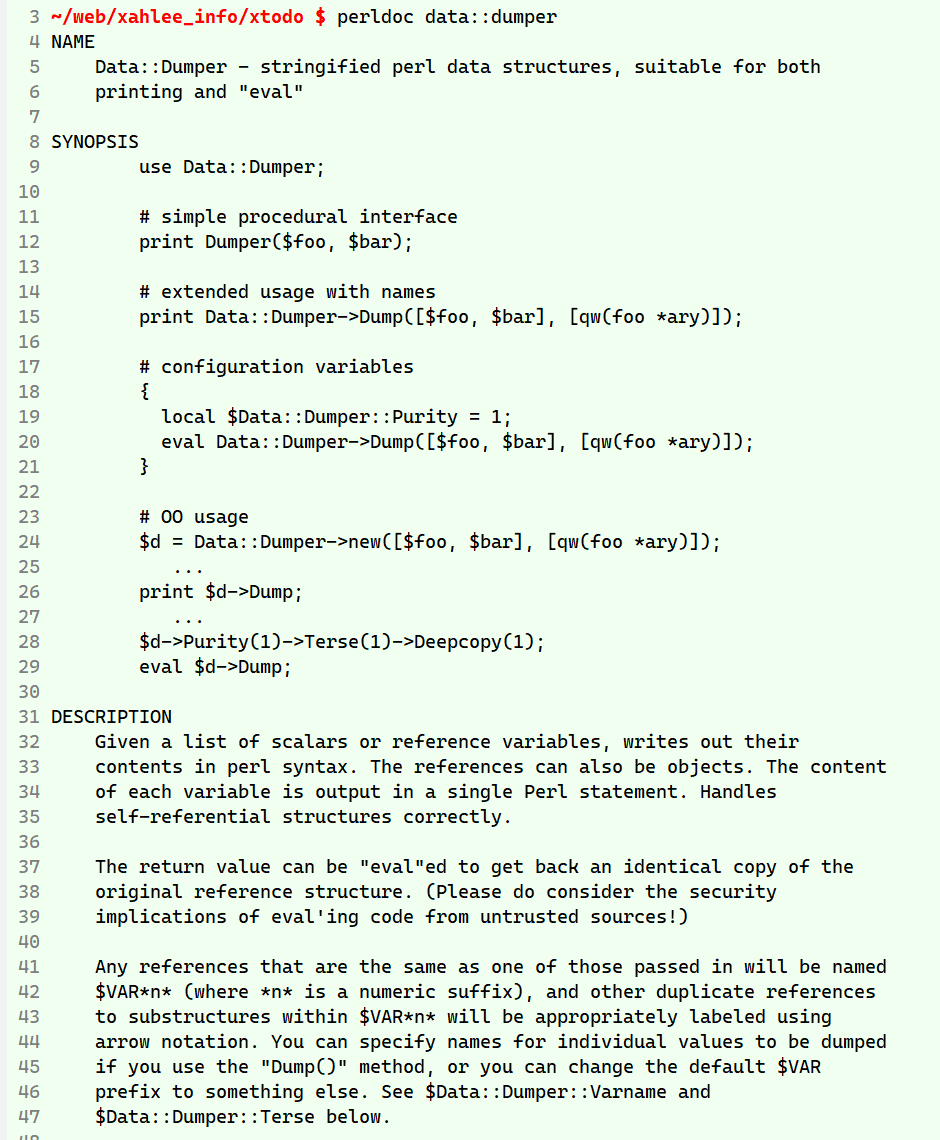

- For Coders, Hackers. Programing Language History.

- Perl is the prime example of a programing language scam.

- For 10 years, about 1995 to 2005, it was considered the most powerful scripting language, the last one humanity would need.

- What a story it is: from the most popular language, with a cult promoted by every programer, to not just unpopular but dead.

- You can still see the extremely low quality and cult nature of Perl in its docs.

- Here's the doc for printing arrays and hashtables.

- perldoc Data::Dumper

- for coders, hackers.

- perl version history.


minor updates

- PowerShell: Array

- PowerShell: Array Sub-Expression Operator, Collection to Array

- PowerShell: Array and Types

- PowerShell: Nested Array, Multi-Dimensional Array

- PowerShell: Array, Get Items

- PowerShell: Array, Set Item

- PowerShell: Test If Collection Contains a Value

- PowerShell: Join Array, Append

- PowerShell: Filter Array (Where-Object)

- PowerShell: Delete Array, Clear Array

- PowerShell: Array to String

- PowerShell: Array Methods

- PowerShell: Iterate Array

misc updates

Summary: ugly programing languages and protocols in the computing industry are gonna be with us forever, because it is not a matter of excellence in technical design, but of money, corps, and power.

If you want better software tech, you need to get rid of corruption in society first.

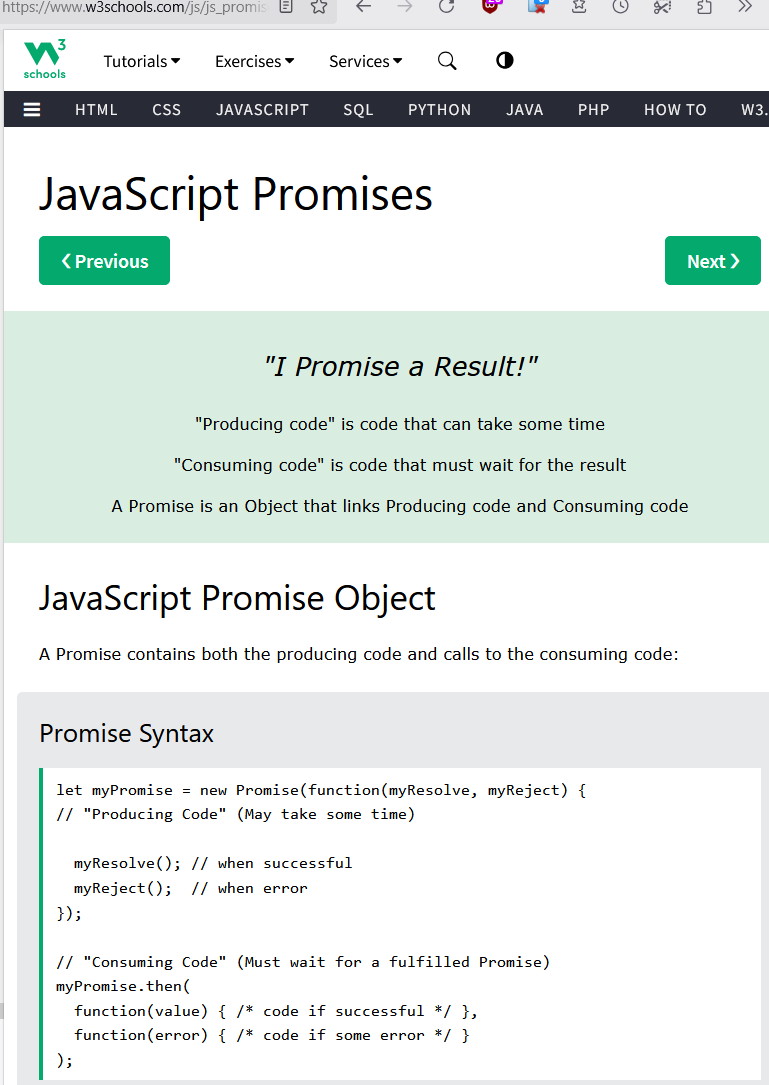

- humor for hackers.

- the most idiotic zoomer drivel on js promise.

- for coders, scientists.

- In Wolfram language, here's how to load a file.

- note, Wolfram Get is analogous to emacs lisp load.

- note, Wolfram Needs is analogous to emacs lisp require.
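The distinction is "load every time" versus "load once". Python has the same split, which may help readers coming from there: runpy.run_path executes a file on every call (like load and Get), while import caches modules in sys.modules, so a second import does not run the file again (like require and Needs). The helper names load and require are chosen for this sketch and are not a real Python API.

```python
import importlib
import runpy
import sys

def load(path):
    """Run the file at path every time this is called (like Emacs Lisp load / Wolfram Get)."""
    return runpy.run_path(path)

def require(name, directory):
    """Import module `name` from `directory` only once; later calls return the cached
    module from sys.modules (like Emacs Lisp require / Wolfram Needs)."""
    if directory not in sys.path:
        sys.path.insert(0, directory)
        importlib.invalidate_caches()  # let the import system notice the newly added directory
    return importlib.import_module(name)
```

Calling load twice on the same file runs its top-level code twice; calling require twice runs it once and returns the same module object.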

perl cult

- Perl Books Survey 2002

- Perl One-Liner Screw (2000)

- Perl: the Difference Between Theory and Practice (2000)

- Perl: the Theory vs Practice Quip (2002)

- Perl, Laziness and Larry Wall (2002)

- Perl Larry Wall and Cults (2000)

- Perl's raison d'être (2000)

- On the Survival Strategies of Larry Wall vs Richard Stallman (2002)

- Emacs Lisp vs Perl for Text Processing (2007)

now full Table of Contents.

Bend Language

ollama. ai bot install

- ollama. very nice.

- thx to frenzie.

- gonna install deepseek and flux soon, livestream it.

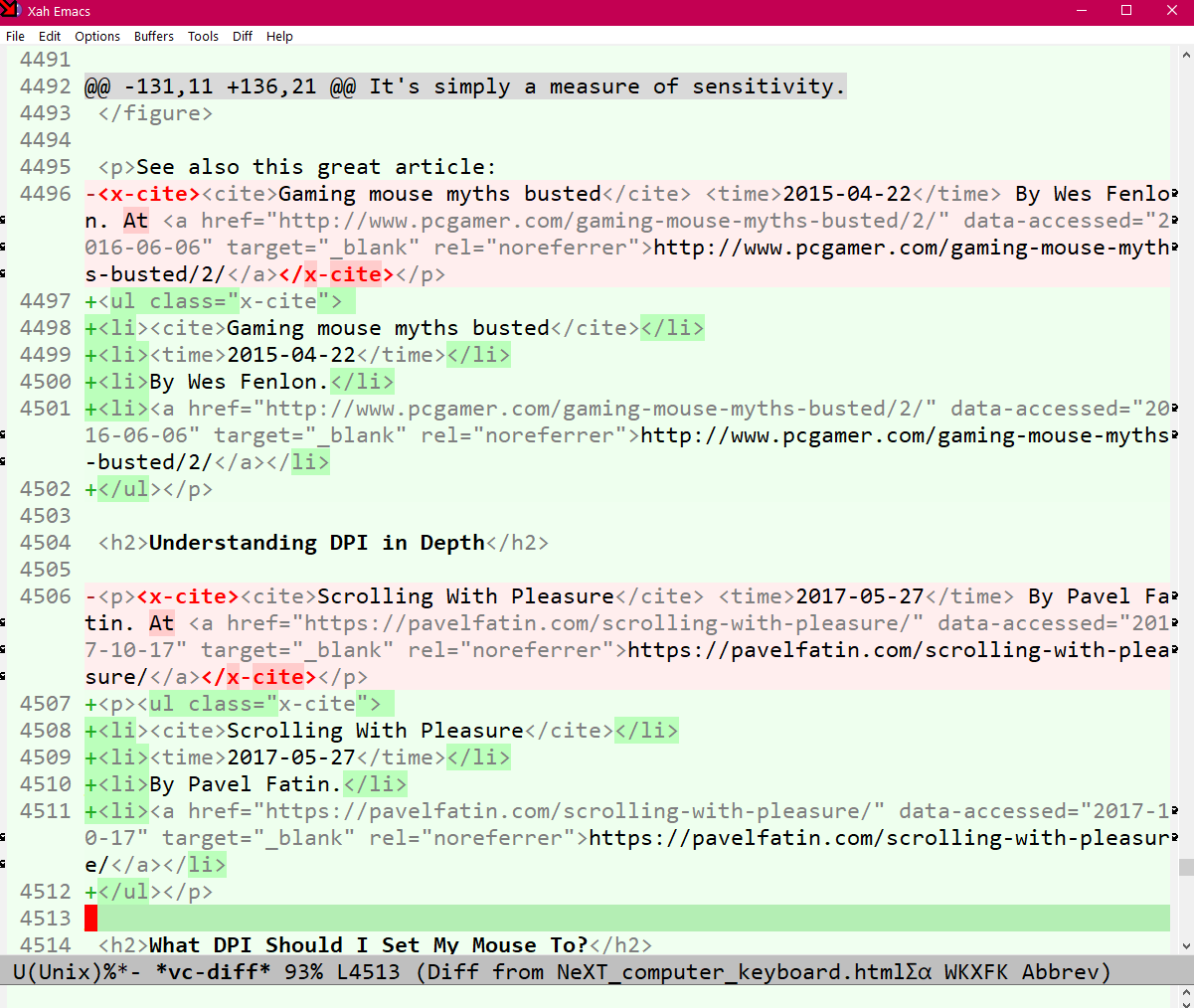

xah lee web, ten thousand files, tag for tag

- ten thousand html files.

- written manually, tag for tag, char for char.

- since 1997.

- https://x.com/xah_lee/status/1914825678115234225

- btw, the change is this.

- from:

`<meta name=viewport … />`

- to:

`<meta name="viewport" … />`

- a nasty thing Apple introduced, that late millennial dolts bought into.
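Just to illustrate the kind of bulk edit involved (not necessarily how it was actually done), here is a Python sketch that walks a directory and rewrites every .html file containing the unquoted name=viewport attribute. It assumes the old text appears literally as shown in OLD, followed by a space, and that files are UTF-8; quote_viewport is a made-up name.

```python
import os

OLD = "<meta name=viewport "
NEW = '<meta name="viewport" '

def quote_viewport(root):
    """Rewrite every .html file under root, replacing OLD with NEW. Returns the paths that changed."""
    changed = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if not name.endswith(".html"):
                continue
            path = os.path.join(dirpath, name)
            # newline="" keeps the file's original line endings on both read and write
            with open(path, encoding="utf-8", newline="") as f:
                text = f.read()
            if OLD in text:
                with open(path, "w", encoding="utf-8", newline="") as f:
                    f.write(text.replace(OLD, NEW))
                changed.append(path)
    return changed
```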

- for programing language designers.

- it is interesting to compare the string quote syntax design of different languages.

- here's a short annotation.

- emacs lisp string. most primitive.

- only one form of string "abc".

- everything needs backslash.

- no embed variable.

- Elisp: String

- Wolfram language string, follows lisp tradition. only one form.

- Wolfram: String

- Perl String has many forms.

- 'single' is verbatim.

- "double" can embed variable, and has backslash escape.

- but it also has q and qq, with almost any character as delimiter.

- and finally it has heredoc, using an arbitrary char sequence as delimiter, to allow quoting any text without worry.

- Perl: Quote Strings

- PHP follows perl design.

- PHP: String Syntax

- Ruby follows perl design.

- Ruby: Quote String

- PowerShell follows basic perl design.

- 'single' is verbatim.

- "double" has escape and embed variable, but escapes use backtick instead of backslash.

- PowerShell: String

- Golang String.

- "double quote" has backslash escape. no embed variable.

- `backtick quote` is verbatim.

- Golang: String

- JavaScript String is the worst.

- "QUOTATION MARK" → literal newline char not allowed. has backslash escape.

- 'APOSTROPHE quote' → basically same as QUOTATION MARK quote.

- after 20 years, in 2015, they fixed it by adding `GRAVE ACCENT quote` (template literals).

- JS: Quote String

- Python String is a confused mess.

- you got "double" and 'single', which mean the same. Neither allows multiple lines.

- then you got """triple double""" and '''triple single''', which can span multiple lines.

- no heredoc. Plain strings have no embedded variables.

- then you got a bunch of string prefix chars: u for unicode, b for bytes, r for raw, and f, added in Python 3.6, for formatted strings. Some of these prefixes can be combined, e.g. rb or fr.

- Python: Quote String

- Fsharp String.

- "quotation mark quote", has backslash escape.

- """triple quotation mark quote""", is verbatim.

- at sign @ prefix makes it verbatim.

- dollar sign $ prefix makes embed variable or expression.

- Fsharp: String

- the reasons I am learning fsharp:

- to see how immutable lists, hashtables, etc. work for real-life software needs (e.g. the O in OCaml).

- I still don't understand how OOP works in FP.

- to learn pattern matching in a functional language, and how it compares to Wolfram language. (Seems there's no comparison at all. They are an order of magnitude less powerful than patterns in Wolfram.)
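To make the Python part of the string quote comparison concrete, here are the quote forms and prefixes side by side (the variable names are arbitrary):

```python
name = "Xah"

single = 'it\'s'              # 'single' and "double" quotes make the same kind of string
double = "it's"
triple = """line 1
line 2"""                     # triple quotes allow literal newlines
raw = r"C:\new\table"         # r prefix: backslashes are kept as-is
formatted = f"hi {name}"      # f prefix: embeds the value of an expression
raw_bytes = rb"\d+"           # prefixes combine: r (raw) plus b (bytes)

for s in (single, double, triple, raw, formatted, raw_bytes):
    print(repr(s))
```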

massive update

- PowerShell: APOSTROPHE Quoted String (verbatim)

- PowerShell: QUOTATION MARK Quoted String (Expandable)

- PowerShell: Regular Expression Operators


- 🆕 PowerShell: Regex Result ($Matches)

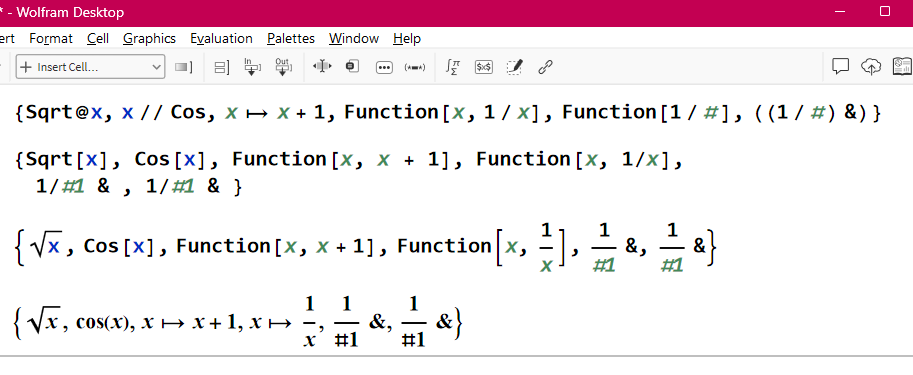

Wolfram language, turning an expression to function.

This is a technique for turning an expression into a function.

Useful because math functions are usually written as expressions.

For example, to plot a parabola, you write

Plot[{x, x^2}, {x,-2,2}]

instead of

Plot[{ Function[x, x], Function[x, x^2]}, {-2,2}]

however, formally, a parametric plane curve is given by two functions. so

{ Function[x, x], Function[x, x^2]}

is actually more correct.

more traditionally written as

x = Function[x, x]

y = Function[x, x^2]

but traditional math notation does not have a way to represent an anonymous function.

so traditionally it's written as:

x = fx

y = fy

fx(x) = x

fy(x) = x^2

in traditional math notation, a function definition is usually written as an expression, with an indication of which symbol is the function's formal parameter.

Clear[ff]

ff[xxpr_, {xvar_, tmin_, tmax_}] := Function[xvar, xxpr]
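Python has no symbolic expressions built in, so the closest quick analogue works on source strings: given the expression and the name of its formal parameter, build a function, in the spirit of ff above. This is a sketch only; expr_to_function is a made-up name, and because it uses eval it should never be given untrusted input.

```python
def expr_to_function(expr, var):
    """Turn an expression string such as "x**2" into a one-argument function,
    given the name of its formal parameter. Uses eval: trusted input only."""
    if not var.isidentifier():
        raise ValueError(f"not a valid parameter name: {var!r}")
    return eval(f"lambda {var}: {expr}", {})

# the parametric parabola as a pair of functions, like {Function[x, x], Function[x, x^2]}
fx = expr_to_function("x", "x")
fy = expr_to_function("x**2", "x")

curve = [(fx(t), fy(t)) for t in (-2, -1, 0, 1, 2)]
print(curve)
```

Unlike Wolfram's Function, this works on text, not on a symbolic term, so the expression cannot be inspected or transformed before it becomes a function.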

topic or related

fuck the fsharp, got the indentation disease from python idiots.

massive. the importance of syntax.

Syntax is the most important aspect of a programing language.

The quality of a programing language, can be judged by how much of it can be explained by its syntax alone.

- Why Syntax is More Important Than Semantics (2020)

- Formal Definition of Systematic Grammar (2016)

- Grammar Complexity of Conlang and Complang

- Composable Syntax (2016)

- Syntax Algebra (2016)

- Syntactic Meaning of Variable (2018)

- OCaml Syntax Sucks (2016)

- Problem of Expressive Programing Languages (2021)

- Variable Naming. English Words Considered Harmful

- Function Parameter names start with phi φ, variable names start with xi ξ (2014)

- The Sigil War, Syntactic Indicator for Types of Function and Variable (2016)

- Emacs Lisp Coding Style. DOLLAR SIGN $ and AT SIGN @ in Variable Names

- Jargon: Predicate in Programing Languages (2014)

- Perl: Variable Name Prefix (aka Sigil)

- Ruby: Variable Name Conventions

- PowerShell: Automatic Variables

- Clojure: Variable Name Conventions (sigil. macro magic characters.)

- best programing languages are:

- golang → industrial, general, jobs

- fsharp → industrial, for functional programing

- Wolfram language → most powerful

- emacs lisp → most powerful for text processing

- worst shit are:

- c → bit diddling

- cpp → bit diddling with warts

- d → failed wart

- langs you have no choice about:

- python → due to mob

- JavaScript → basically only choice on web

- java → boring enterprise jobs

- PowerShell → must on Microsoft

- bash → must for linux admin, scripts

- cpp → gamer dolts

- mid brain langs:

- rust → bit diddling with piss

- python → brainless zoomer jobs

- perl → for slobs

- haskell → understand nothing but holier than thou

- scheme lisp → going nowhere anytime

- common lisp → obsolete fart

- clojure → elegance intertwined with java shit

- finally, found the author

- UNIX Philosophy, Fast Food the UNIX way. 1983

- written in 1983.

- thanks to someone on twitter (see my replies)

- unix, the crime of humanity

- brainwashed a few generations of coders

- and held back the progress of CPUs for 3 decades

PowerShell Firewall vs Unix Shit

- studying windows firewall in powershell.

- very complex, but very well documented.

- in contrast, on linux and bsd, it's one fucked up fuck.

- I spent a month studying the linux firewall and documented it in 2013.

- this is the best iptables documentation.

- Linux: Firewall, iptables Tutorial

- powershell is ten times better than the unix linux bash fuck.

- actually consistent, useful syntax summary.

- fuck the C and unix fuckheads that damaged the computing industry for 3 decades.

A Short History of Open Source Movement

- open source's origin is the fsf, the free software foundation, founded in the 80s by richard stallman.

- around 1998, it got robbed by esr (Eric S. Raymond and others) to form a faction called open source, on the pretext that open source is more friendly to commercial corps.

- the 2 camps are enemies, to this day.

- (but most people since 2010 have no idea, thinking they are the same.)

- richard stallman gets very angry and batshit if you mistakenly thank him for the “open source” movement.

- by the way, it's on YouTube, but these days you can't find it because google is rotten.

- you can find it on my website...

- then, around 2010, open source became entirely taken over by deep usa controlled mega corps.

- today, basically 99.99% of open source is funded and controlled by for-profit giant corps, e.g. google, facebook, microsoft.

- thus code of conduct etc.

- the harms are too many to list.

- one of them is that it completely robbed coders of social power, and coding is the most critical profession for advancing a nation.

- now, either you work for a for-profit corp, have a comfy living, and conform to what they say, or you become jobless.

Stop The Open Source And Free Software Foundation Skam.

- If you are an open source activist, and you get your money from a company that's not 100% open source, you are a skum.

- Note: this does not apply to the average open source proponent.

- because, like a lot of political movements (e.g. lgbtqtrans blm communism etc), lots of youths don't know better.

- but it applies to the severe type, like activists constantly promoting it.

- If you insist on this, you might want to unfollow me.

- I was an fsf fanatic in the late 90s.

- Done lots of livestreams about it. Too many stories to tell.

- But it's helpless in the social network era, especially with the zoomer dolts.

- If you are really a good man, with a peace-and-sharing mentality, simply make your code free.

- For legality, say it's public domain. That's it.

- You don't even need any license.

- Lots of people, especially in the scientific community, did this before fsf and before open source, and I did it for all my Wolfram language and perl code in the 90s.

China AI Bot Deepseek

- 1 trillion usd gone, in a day

- amazing

- just because china released an ai chatbot, open source and open weights.

- deepseek

What is this model?

- Deepseek R1 is a 671B-parameter mixture-of-experts model that generates a "reasoning block" at inference time; only 37B of its params are activated per token.

- It has 128K context length.

Is this model good?

- yes, but it's inferior to other top models in some aspects.

Is this model good for everything?

- no. it's not good for function calling, multi-turn conversations, json output and some other aspects.

What is interesting about this model?

- Deepseek claims that it took significantly less money to train than other top models. You can read the paper about their approach to training the model.

- MIT licensed open weights. It's one of the largest and strongest models with open weights.

- It's one of the rare models that generates a "reasoning block" in its response and is trained specifically for it.

- my questions.

- what's an “expert model”, a “reasoning block”?

- 37B params are active. not sure i understand this. what does it mean, technically, that only some nodes are active?

- what's function calling?

- open weight. great idea.

- why did they release it as open weights?

- this seems critical.

- did china do that to basically just collapse openai chatgpt?
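On the question of what "37B params are active" means: in a mixture-of-experts layer, a small router scores all experts for each token, and only the top few experts actually run; the other experts' weights are not touched for that token. A toy sketch of just that routing idea follows. It is not DeepSeek's architecture: the experts here are tiny scalar functions, the router is a made-up linear score, and k=2 is arbitrary.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_layer(x, experts, router_weights, k=2):
    """Toy mixture-of-experts step for one scalar input x.
    The router gives each expert a score; only the k best-scoring experts are evaluated,
    and their outputs are mixed with softmax weights. All other experts are skipped."""
    scores = [w * x for w in router_weights]
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    gates = softmax([scores[i] for i in top])
    out = sum(g * experts[i](x) for g, i in zip(gates, top))
    return out, top

# 8 hypothetical experts; expert number a just multiplies its input by a
experts = [lambda v, a=a: a * v for a in range(8)]
router_weights = [0.1 * i for i in range(8)]

out, used = moe_layer(1.0, experts, router_weights)
print("experts used:", used, "of", len(experts))
```

In a real model each expert is a large feed-forward block, so running only a few of them per token means most of the parameters sit idle for that token, which is how a 671B model can activate only 37B at a time.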

Programing Language Rant on C

- the only reason C exists today is meme.

- C today, is a chad meme.

- its convoluted nature makes it the real man's lang.

- C always has air of real man's lang, like unix.

- Anything that's difficult to use, gets that air.

- so, u wanna be a real man? use C, unix.

- that has been the saying for 30 years.

- the style of saying it changed a bit over the decades.

- c and cpp, both, are the worst skums in the universe

- began, with c

- and cpp, is just let's ride c and make me popular

- and together, they bred the intel chips skam

- so for 30 or more years, we've been stuck with the intel x86 fuck

- fucked the entire computing industry for 4 decades

huge updates.

- Windows: Turn on File Sharing

- Mac: Access Shared Files on Windows, from Mac

- How to Share File: Access Windows Files from Mac, via Wifi

- Mac: Turn on File Sharing

On Open Source Tech, and Their Political Affiliations

- A lot of coders told me they are against using fsharp csharp PowerShell, because they hate Bill Gates.

- But also, some have told me they are against golang, because Google is evil.

- Note, the whole dotnet, including csharp fsharp powershell, is open source.

- Also, Rust, Golang, all google products, facebook, they went "men can get pregnant, or you get banned."

- for computer professionals.

- aka tech bro, who made america great with nuke n rockets n AI.

- not lawyers and talking heads and stock friers.

- upgraded to powershell 7.5

- master go programing language.

- The best industrial programing language.

- Best functional programing language is fsharp.

- Best general purpose language is Wolfram language.

- worst langs are, c, cpp, python, JavaScript.

COMPUTER SCIENCE. What is Term-Rewriting System vs Regular Expression

- Wolfram language, as term-rewriting system.

- where you have pattern matching and replacement, similar to regular expression.

- but regular expressions work on strings, while a term-rewriting system works on the source code itself as terms (symbols nested into expressions).

- regular expressions basically only match linear sequences (flat strings).

- Wolfram language patterns can match nested expressions, so it can get very complex: just which parts of an expression are replaced, at which tree depth levels, and in what priority.
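A toy term-rewriting sketch, to show the difference from regex: terms are nested tuples, strings starting with "_" are pattern variables, and rules are applied to subterms first (innermost) and repeated until nothing changes. This is not Wolfram's matcher: it has no sequence patterns, conditions, or level specs, and Wolfram's ReplaceAll (/.) tries the whole expression before its parts, while ReplaceRepeated (//.) repeats until nothing changes. The term names Plus and Times only mimic Wolfram's.

```python
import re

def match(pattern, term, bindings):
    """Match pattern against term; return the extended bindings dict, or None on failure."""
    if isinstance(pattern, str) and pattern.startswith("_"):
        if pattern in bindings:
            return bindings if bindings[pattern] == term else None
        return {**bindings, pattern: term}
    if isinstance(pattern, tuple) and isinstance(term, tuple) and len(pattern) == len(term):
        for p, t in zip(pattern, term):
            bindings = match(p, t, bindings)
            if bindings is None:
                return None
        return bindings
    return bindings if pattern == term else None

def substitute(template, bindings):
    if isinstance(template, str) and template in bindings:
        return bindings[template]
    if isinstance(template, tuple):
        return tuple(substitute(t, bindings) for t in template)
    return template

def rewrite(term, rules):
    """Rewrite children first, then the term itself, until no rule applies."""
    if isinstance(term, tuple):
        term = tuple(rewrite(t, rules) for t in term)
    for lhs, rhs in rules:
        b = match(lhs, term, {})
        if b is not None:
            return rewrite(substitute(rhs, b), rules)
    return term

# Plus[x_, 0] -> x and Times[x_, 1] -> x, applied at every depth
rules = [(("Plus", "_x", 0), "_x"), (("Times", "_x", 1), "_x")]
expr = ("Times", ("Plus", ("Plus", "a", 0), 0), 1)
print(rewrite(expr, rules))

# the same rule as a regex on the flat string only catches the innermost Plus in one pass
flat = "Times[Plus[Plus[a, 0], 0], 1]"
print(re.sub(r"Plus\[(\w+), 0\]", r"\1", flat))
```

The tree version reduces the whole expression to a; the regex, which only sees characters, has no idea where a subexpression ends and leaves Times[Plus[a, 0], 1] after one pass.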

complete update on Wolfram language string functions.

- Wolfram: String

- Wolfram: String Functions

- Wolfram: Get SubString, by Index

- 🆕 Wolfram: Get SubString, by Pattern

- 🆕 Wolfram: String Split

- 🆕 Wolfram: String Join

- 🆕 Wolfram: String Insert

- 🆕 Wolfram: Delete SubString

- Wolfram: String Match

- Wolfram: String Replace

- Wolfram: Convert to String

- Wolfram: String Template

- Computer science.

- Programing language syntax design.

- A gander into Comment Syntax

- comp lang: Comment Syntax

On Wolfram Language Association (aka association list, dictionary, hash table). Major update. Now the association section is complete.

- Wolfram language's dict, you can filter, sort, etc, on the keys, or the values.

- Or do union, intersection, difference on keys, or on values.

- Or map to keys, or to values, or to the pair.

- Or do pattern matching on the keys, or on the values.

- An order of magnitude richer than python or haskell or clojure or any other language.

- And the key can be any expression, and the value can be any expression.

- Wolfram: Association (Key Value List)

- Wolfram: Association. Get Value

- Wolfram: Association. Add Item

- Wolfram: Association. Delete Items by Key

- Wolfram: Association. Check Key Exist

- Wolfram: Association. Get All Keys or Values

- Wolfram: Association. Delete Items by Filter

- 🆕 Wolfram: Create Association

- 🆕 Wolfram: Association. Check Value Exist

- 🆕 Wolfram: Association. Sort

- 🆕 Wolfram: Association. Union, Intersection, Complement, Etc

- 🆕 Wolfram: Association. Map Function

- 🆕 Wolfram: List. Same Items Counts, Tally, Group

- Wolfram: List. Partition, Reshape, Split, Gather
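For comparison with the association operations above, a few of them on a plain Python dict (Python 3.9 or later for the | merge). Key views act like sets; values need comprehensions. In Wolfram Language the corresponding built-ins include KeySelect, KeySort, KeyIntersection, and KeyMap. The data here is made up.

```python
prices = {"apple": 3, "banana": 1, "cherry": 5}
stock = {"banana": 10, "durian": 0}

cheap = {k: v for k, v in prices.items() if v < 4}             # filter on values
by_price = dict(sorted(prices.items(), key=lambda kv: kv[1]))  # sort by value
both = prices.keys() & stock.keys()                            # key views support set operations
only_prices = prices.keys() - stock.keys()
doubled = {k: 2 * v for k, v in prices.items()}                # map over values
merged = prices | stock                                        # union; right side wins on shared keys

print(cheap, list(by_price), both, only_prices, doubled, merged, sep="\n")
```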

updated my php tutorial from 2007, just a tiny bit.